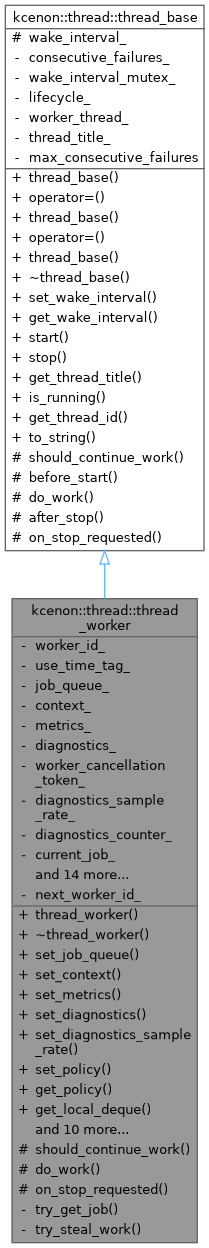

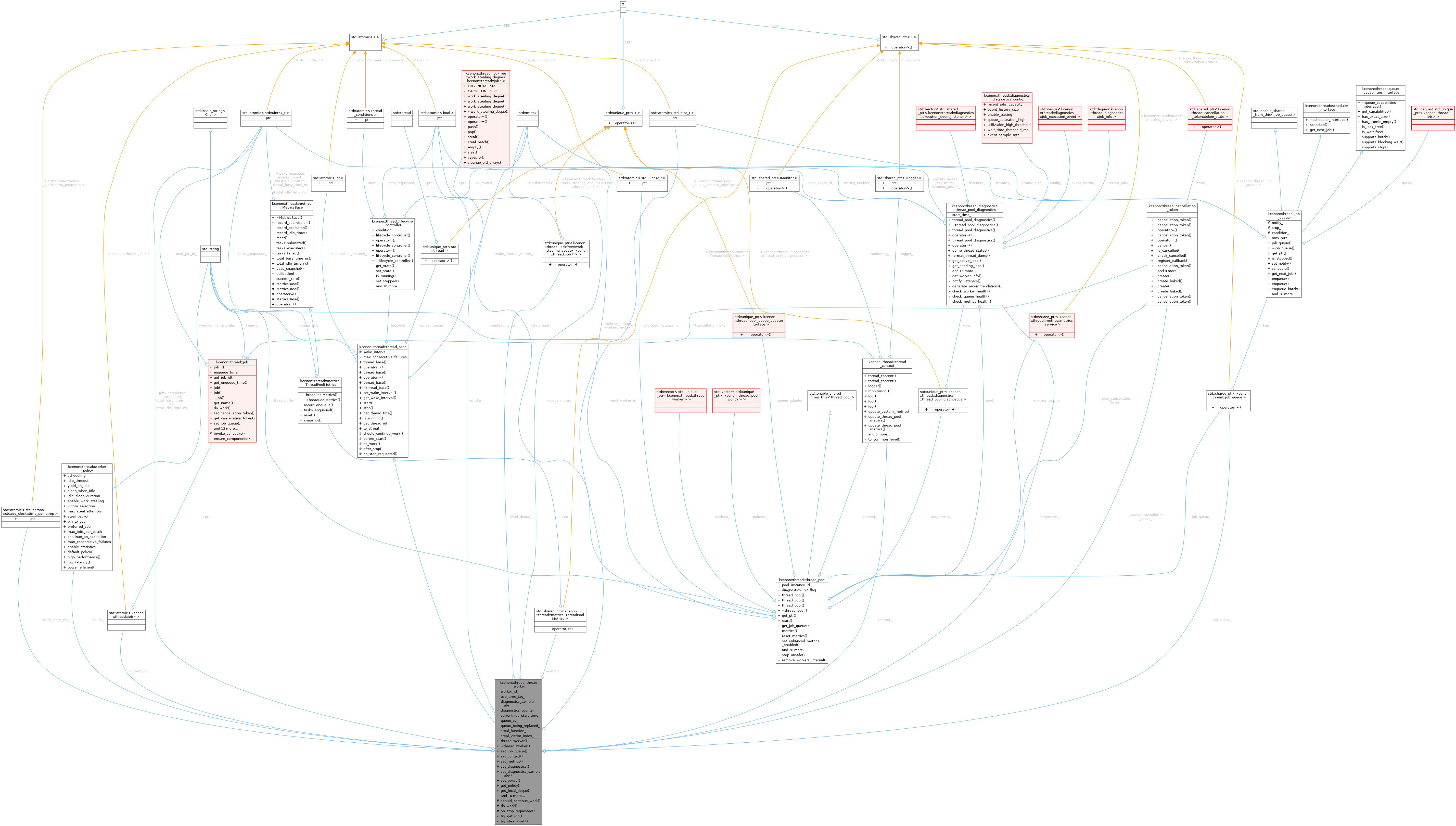

A specialized worker thread that processes jobs from a job_queue.


#include <thread_worker.h>

Public Member Functions | |

| thread_worker (const bool &use_time_tag=true, const thread_context &context=thread_context()) | |

Constructs a new thread_worker. | |

| virtual | ~thread_worker (void) |

| Virtual destructor. Ensures the worker thread is stopped before destruction. | |

| auto | set_job_queue (std::shared_ptr< job_queue > job_queue) -> void |

Sets the job_queue that this worker should process. | |

| auto | set_context (const thread_context &context) -> void |

| Sets the thread context for this worker. | |

| void | set_metrics (std::shared_ptr< metrics::ThreadPoolMetrics > metrics) |

| Provide shared metrics storage for this worker. | |

| void | set_diagnostics (diagnostics::thread_pool_diagnostics *diag) |

| Set the diagnostics instance for event tracing. | |

| void | set_diagnostics_sample_rate (std::uint32_t rate) |

| Set the diagnostics sampling rate. | |

| void | set_policy (const worker_policy &policy) |

| Set the worker policy for this worker. | |

| const worker_policy & | get_policy () const |

| Get the current worker policy. | |

| lockfree::work_stealing_deque< job * > * | get_local_deque () noexcept |

| Get the local work-stealing deque for this worker. | |

| void | set_steal_function (std::function< job *(std::size_t)> steal_fn) |

| Set the steal function for finding other workers' deques. | |

| std::size_t | get_worker_id () const |

| Get the worker ID. | |

| auto | get_context (void) const -> const thread_context & |

| Gets the thread context for this worker. | |

| bool | is_idle () const noexcept |

| Checks if the worker is currently idle (not processing a job). | |

| std::uint64_t | get_jobs_completed () const noexcept |

| Gets the total number of jobs successfully completed by this worker. | |

| std::uint64_t | get_jobs_failed () const noexcept |

| Gets the total number of jobs that failed during execution. | |

| std::chrono::nanoseconds | get_total_busy_time () const noexcept |

| Gets the total time spent executing jobs (busy time). | |

| std::chrono::nanoseconds | get_total_idle_time () const noexcept |

| Gets the total time spent waiting for jobs (idle time). | |

| std::chrono::steady_clock::time_point | get_state_since () const noexcept |

| Gets the time when the worker entered its current state. | |

| std::optional< diagnostics::job_info > | get_current_job_info () const noexcept |

| Gets information about the currently executing job. | |

Public Member Functions inherited from kcenon::thread::thread_base | |

| thread_base (const thread_base &)=delete | |

| thread_base & | operator= (const thread_base &)=delete |

| thread_base (thread_base &&)=delete | |

| thread_base & | operator= (thread_base &&)=delete |

| thread_base (const std::string &thread_title="thread_base") | |

Constructs a new thread_base object. | |

| virtual | ~thread_base (void) |

| Virtual destructor. Ensures proper cleanup of derived classes. | |

| auto | set_wake_interval (const std::optional< std::chrono::milliseconds > &wake_interval) -> void |

| Sets the interval at which the worker thread should wake up (if any). | |

| auto | get_wake_interval () const -> std::optional< std::chrono::milliseconds > |

| Gets the current wake interval setting. | |

| auto | start (void) -> common::VoidResult |

| Starts the worker thread. | |

| auto | stop (void) -> common::VoidResult |

| Requests the worker thread to stop and waits for it to finish. | |

| auto | get_thread_title () const -> std::string |

| Returns the worker thread's title. | |

| auto | is_running () const -> bool |

| Checks whether the worker thread is currently running. | |

| auto | get_thread_id () const -> std::thread::id |

| Gets the native thread ID of the worker thread. | |

| virtual auto | to_string (void) const -> std::string |

Returns a string representation of this thread_base object. | |

Protected Member Functions | |

| auto | should_continue_work () const -> bool override |

| Determines if there are jobs available in the queue to continue working on. | |

| auto | do_work () -> common::VoidResult override |

| Processes one or more jobs from the queue. | |

| auto | on_stop_requested () -> void override |

| Called when the worker is requested to stop. | |

Protected Member Functions inherited from kcenon::thread::thread_base | |

| virtual auto | before_start (void) -> common::VoidResult |

| Called just before the worker thread starts running. | |

| virtual auto | after_stop (void) -> common::VoidResult |

| Called immediately after the worker thread has stopped. | |

Private Member Functions | |

| std::unique_ptr< job > | try_get_job () |

| Try to get a job from local deque first, then global queue. | |

| std::unique_ptr< job > | try_steal_work () |

| Try to steal work from other workers. | |

Private Attributes | |

| std::size_t | worker_id_ {0} |

| Unique ID for this worker instance. | |

| bool | use_time_tag_ |

| Indicates whether to use time tags or timestamps for job processing. | |

| std::shared_ptr< job_queue > | job_queue_ |

| A shared pointer to the job queue from which this worker obtains jobs. | |

| thread_context | context_ |

| The thread context providing access to logging and monitoring services. | |

| std::shared_ptr< metrics::ThreadPoolMetrics > | metrics_ |

| Shared metrics aggregator provided by the owning thread pool. | |

| diagnostics::thread_pool_diagnostics * | diagnostics_ {nullptr} |

| Pointer to the diagnostics instance for event tracing. | |

| cancellation_token | worker_cancellation_token_ |

| Cancellation token for this worker. | |

| std::uint32_t | diagnostics_sample_rate_ {1} |

| Diagnostics sampling rate (record every Nth job). | |

| std::uint64_t | diagnostics_counter_ {0} |

| Counter for diagnostics sampling. | |

| std::atomic< job * > | current_job_ {nullptr} |

| Pointer to the currently executing job. | |

| std::atomic< bool > | is_idle_ {true} |

| Indicates whether the worker is currently idle (not processing a job). | |

| std::atomic< std::uint64_t > | jobs_completed_ {0} |

| Total number of jobs successfully completed by this worker. | |

| std::atomic< std::uint64_t > | jobs_failed_ {0} |

| Total number of jobs that failed during execution. | |

| std::atomic< std::uint64_t > | total_busy_time_ns_ {0} |

| Total time spent executing jobs (busy time) in nanoseconds. | |

| std::atomic< std::uint64_t > | total_idle_time_ns_ {0} |

| Total time spent waiting for jobs (idle time) in nanoseconds. | |

| std::atomic< std::chrono::steady_clock::time_point::rep > | state_since_rep_ |

| Time point when the worker entered its current state. | |

| std::chrono::steady_clock::time_point | current_job_start_time_ |

| Time point when the current job started executing. | |

| std::mutex | queue_mutex_ |

| Mutex protecting job queue replacement. | |

| std::condition_variable | queue_cv_ |

| Condition variable for queue replacement synchronization. | |

| bool | queue_being_replaced_ {false} |

| Indicates whether queue replacement is in progress. | |

| worker_policy | policy_ |

| Worker policy configuration. | |

| std::unique_ptr< lockfree::work_stealing_deque< job * > > | local_deque_ |

| Local work-stealing deque for this worker. | |

| std::function< job *(std::size_t)> | steal_function_ |

| Function to steal work from other workers. | |

| std::size_t | steal_victim_index_ {0} |

| Counter for round-robin steal victim selection. | |

Static Private Attributes | |

| static std::atomic< std::size_t > | next_worker_id_ {0} |

| Static counter for generating unique worker IDs. | |

Additional Inherited Members | |

Protected Attributes inherited from kcenon::thread::thread_base | |

| std::optional< std::chrono::milliseconds > | wake_interval_ |

| Interval at which the thread is optionally awakened. | |

Detailed Description

A specialized worker thread that processes jobs from a job_queue.

The thread_worker class inherits from thread_base, leveraging its life-cycle control methods (start, stop, etc.) and provides an implementation for job processing using a shared job_queue. By overriding should_continue_work() and do_work(), it polls the queue for available jobs and executes them.
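The relationship between the base-class loop and the two overridden hooks can be sketched as follows. This is a minimal illustration, not the library's code: `mini_base`, `mini_worker`, and the `std::deque` of callables are hypothetical stand-ins, the loop runs on the calling thread rather than on a dedicated one, and the hook here simply stops when the queue is empty (the implementation notes for should_continue_work() describe a different exit condition).

```cpp
#include <cassert>
#include <deque>
#include <functional>
#include <memory>
#include <utility>

// Hypothetical stand-in for thread_base: it owns the loop and calls two hooks.
class mini_base {
public:
    virtual ~mini_base() = default;

    // Runs the work loop on the calling thread and returns the cycle count.
    int run() {
        int cycles = 0;
        while (should_continue_work()) {
            do_work();
            ++cycles;
        }
        return cycles;
    }

protected:
    virtual bool should_continue_work() const = 0;
    virtual void do_work() = 0;
};

// Hypothetical stand-in for thread_worker: drains a shared queue of callables.
class mini_worker : public mini_base {
public:
    using queue_type = std::deque<std::function<void()>>;

    void set_job_queue(std::shared_ptr<queue_type> queue) {
        queue_ = std::move(queue);
    }

protected:
    // Simplified rule for this sketch: keep going while jobs remain.
    bool should_continue_work() const override {
        return queue_ && !queue_->empty();
    }

    // Executes exactly one job per cycle.
    void do_work() override {
        assert(queue_ && !queue_->empty());
        auto job = std::move(queue_->front());
        queue_->pop_front();
        job();
    }

private:
    std::shared_ptr<queue_type> queue_;
};
```

Calling `run()` before `set_job_queue()` performs zero cycles in this sketch, which mirrors the documented requirement that a queue be set before the worker starts.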

Typical Usage

- Examples

- crash_protection/main.cpp.

Definition at line 67 of file thread_worker.h.

Constructor & Destructor Documentation

◆ thread_worker()

| kcenon::thread::thread_worker::thread_worker | ( | const bool & | use_time_tag = true, |

| const thread_context & | context = thread_context() ) |

Constructs a new thread_worker.

Constructs a worker thread with optional timing capabilities.

- Parameters

-

use_time_tag If set to true (default), the worker may log or utilize timestamps/tags when processing jobs.

context Optional thread context for logging and monitoring (defaults to empty context).

This flag can be used to measure job durations, implement logging with timestamps, or any other time-related features in your job processing. The context provides access to logging and monitoring services.

Implementation details:

- Inherits from thread_base to get thread management functionality

- Sets descriptive name "thread_worker" for debugging and logging

- Initializes timing flag for optional performance measurement

- Job queue is not set initially (must be set before starting work)

- Stores thread context for logging and monitoring

- Creates a cancellation token for job cancellation support

Performance Timing:

- When enabled, measures execution time for each job

- Uses high_resolution_clock for precise measurements

- Minimal overhead when disabled (single boolean check)

- Parameters

-

use_time_tag If true, enables timing measurements for job execution.

context Thread context providing logging and monitoring services.

Definition at line 70 of file thread_worker.cpp.

◆ ~thread_worker()

|

virtual |

Virtual destructor. Ensures the worker thread is stopped before destruction.

Destroys the worker thread.

Implementation details:

- Base class destructor handles thread shutdown

- No manual cleanup required due to RAII design

- Shared pointer to job queue is automatically released

Definition at line 89 of file thread_worker.cpp.

Member Function Documentation

◆ do_work()

|

override protected virtual |

Processes one or more jobs from the queue.

Executes a single work cycle by processing one job from the queue.

- Returns

common::VoidResult containing an error if the work fails, or a success value otherwise.

This method fetches a job from the queue (if available), executes it, and may repeat depending on the implementation. If any job fails, an error is returned; otherwise, a success value is returned.

Implementation details:

- Uses non-blocking try_dequeue() to avoid condition variable deadlock

- Polls the queue with minimal CPU overhead via short sleep intervals

- Validates job pointer before execution

- Optionally measures execution timing for performance analysis

- Associates job with queue for potential re-submission

- Logs execution results with appropriate detail level

Job Processing Workflow:

- Validate job queue availability

- Attempt non-blocking dequeue of next job

- If queue is empty: sleep briefly and return (will be called again)

- Validate dequeued job pointer

- Optionally record start time for measurement

- Associate job with queue for context

- Execute job's do_work() method

- Handle execution errors with detailed logging

- Log successful completion with timing info if enabled

Hybrid Wait Strategy:

- Phase 1: Bounded spin (16 iterations) with CPU pause hints for fast pickup

- Phase 2: Blocking dequeue() on job_queue's condition_variable

- Wakes immediately on enqueue (notify_one) or stop (notify_all)

- should_continue_work() determines when to exit (on queue stop)

Performance Characteristics:

- Sub-microsecond pickup when jobs arrive during spin phase

- Near-zero idle CPU usage (CV blocking, not polling)

- Immediate wake-up on enqueue (<100μs vs previous 10ms floor)

- No busy-waiting overhead when idle
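The two-phase wait described above can be sketched with a small stand-in queue. Everything here is an assumption made for illustration: `mini_queue` and `hybrid_dequeue` are hypothetical names, the queue holds `int` values instead of jobs, and `std::this_thread::yield()` stands in for a CPU pause hint, which has no portable standard spelling.

```cpp
#include <cassert>
#include <condition_variable>
#include <deque>
#include <mutex>
#include <optional>
#include <thread>

// Hypothetical minimal queue; the real job_queue API may differ.
class mini_queue {
public:
    void enqueue(int value) {
        {
            std::lock_guard<std::mutex> lock(mutex_);
            items_.push_back(value);
        }
        cv_.notify_one();  // wake one blocked consumer
    }

    void stop() {
        {
            std::lock_guard<std::mutex> lock(mutex_);
            stopped_ = true;
        }
        cv_.notify_all();  // wake every blocked consumer so it can exit
    }

    // Non-blocking attempt, used during the spin phase.
    std::optional<int> try_dequeue() {
        std::lock_guard<std::mutex> lock(mutex_);
        return pop_locked();
    }

    // Blocks until an item arrives or the queue is stopped.
    std::optional<int> dequeue() {
        std::unique_lock<std::mutex> lock(mutex_);
        cv_.wait(lock, [this] { return stopped_ || !items_.empty(); });
        return pop_locked();
    }

private:
    std::optional<int> pop_locked() {
        if (items_.empty()) return std::nullopt;
        int value = items_.front();
        items_.pop_front();
        return value;
    }

    std::mutex mutex_;
    std::condition_variable cv_;
    std::deque<int> items_;
    bool stopped_ = false;
};

// Phase 1: bounded spin. Phase 2: block on the queue's condition variable.
inline std::optional<int> hybrid_dequeue(mini_queue& queue, int spin_limit = 16) {
    assert(spin_limit >= 0);
    for (int i = 0; i < spin_limit; ++i) {
        if (auto item = queue.try_dequeue()) return item;
        std::this_thread::yield();  // portable stand-in for a pause hint
    }
    return queue.dequeue();
}
```

An empty result from `hybrid_dequeue` in this sketch means the queue was stopped while empty, which is the point at which a worker loop would exit.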

Error Handling:

- Missing job queue: Returns resource allocation error

- Empty queue: Returns success after brief sleep (normal polling)

- Null job pointer: Returns job invalid error

- Job execution failure: Returns execution failed error with details

Performance Measurements:

- High-resolution timing when use_time_tag_ is enabled

- Nanosecond precision for accurate profiling

- Minimal overhead when timing is disabled

Logging Behavior:

- Standard success message when timing is disabled

- Timestamped success message when timing is enabled

- Error details are propagated up the call stack

- Returns

- result_void indicating success or detailed error information

Reimplemented from kcenon::thread::thread_base.

Definition at line 383 of file thread_worker.cpp.

References kcenon::thread::diagnostics::completed, kcenon::thread::diagnostics::dequeued, kcenon::thread::diagnostics::job_execution_event::error_code, kcenon::thread::diagnostics::job_execution_event::error_message, kcenon::thread::diagnostics::job_execution_event::execution_time, kcenon::thread::diagnostics::failed, kcenon::thread::utils::formatter::format(), kcenon::thread::job_execution_failed, kcenon::thread::diagnostics::job_execution_event::job_id, kcenon::thread::job_invalid, kcenon::thread::diagnostics::job_execution_event::job_name, kcenon::thread::resource_allocation_failed, kcenon::thread::diagnostics::started, kcenon::thread::diagnostics::job_execution_event::system_timestamp, kcenon::thread::diagnostics::job_execution_event::thread_id, kcenon::thread::diagnostics::job_execution_event::timestamp, kcenon::thread::diagnostics::job_execution_event::type, kcenon::thread::diagnostics::job_execution_event::wait_time, and kcenon::thread::diagnostics::job_execution_event::worker_id.

◆ get_context()

|

nodiscard |

Gets the thread context for this worker.

- Returns

- The thread context providing access to logging and monitoring services.


Definition at line 269 of file thread_worker.cpp.

◆ get_current_job_info()

|

nodiscard noexcept |

Gets information about the currently executing job.

- Returns

- Optional job_info if a job is currently executing, std::nullopt otherwise.

Thread Safety:

- Safe to call from any thread

- Provides snapshot of current state

Definition at line 842 of file thread_worker.cpp.

References current_job_, current_job_start_time_, kcenon::thread::info, kcenon::thread::diagnostics::job_info::job_id, queue_mutex_, and kcenon::thread::diagnostics::running.

◆ get_jobs_completed()

|

nodiscard noexcept |

Gets the total number of jobs successfully completed by this worker.

- Returns

- Count of successfully completed jobs.

Thread Safety:

- Safe to call from any thread

- Uses atomic load with relaxed memory ordering

Definition at line 814 of file thread_worker.cpp.

References jobs_completed_.

◆ get_jobs_failed()

|

nodiscard noexcept |

Gets the total number of jobs that failed during execution.

- Returns

- Count of failed jobs.

Thread Safety:

- Safe to call from any thread

- Uses atomic load with relaxed memory ordering

Definition at line 819 of file thread_worker.cpp.

References jobs_failed_.

◆ get_local_deque()

|

nodiscard noexcept |

Get the local work-stealing deque for this worker.

- Returns

- Pointer to the local deque (nullptr if work-stealing disabled).

This deque is used for work-stealing: other workers can steal jobs from this worker's local deque when they are idle.

Definition at line 199 of file thread_worker.cpp.

References local_deque_.

◆ get_policy()

|

nodiscard |

Get the current worker policy.

- Returns

- The worker policy configuration.

Definition at line 194 of file thread_worker.cpp.

References policy_.

◆ get_state_since()

|

nodiscard noexcept |

Gets the time when the worker entered its current state.

- Returns

- Time point when current state was entered.

Thread Safety:

- Safe to call from any thread

- Uses atomic load with acquire memory ordering

Definition at line 834 of file thread_worker.cpp.

References state_since_rep_.

◆ get_total_busy_time()

|

nodiscard noexcept |

Gets the total time spent executing jobs (busy time).

- Returns

- Duration of busy time in nanoseconds.

Thread Safety:

- Safe to call from any thread

- Uses atomic load with relaxed memory ordering

Definition at line 824 of file thread_worker.cpp.

References total_busy_time_ns_.

◆ get_total_idle_time()

|

nodiscard noexcept |

Gets the total time spent waiting for jobs (idle time).

- Returns

- Duration of idle time in nanoseconds.

Thread Safety:

- Safe to call from any thread

- Uses atomic load with relaxed memory ordering

Definition at line 829 of file thread_worker.cpp.

References total_idle_time_ns_.

◆ get_worker_id()

|

nodiscard |

Get the worker ID.

- Returns

- The unique ID for this worker instance.

Definition at line 735 of file thread_worker.cpp.

References worker_id_.

◆ is_idle()

|

nodiscard noexcept |

Checks if the worker is currently idle (not processing a job).

- Returns

true if the worker is idle (waiting for jobs), false if actively processing.

Thread Safety:

- Safe to call from any thread

- Uses atomic operations for lock-free access

- Provides snapshot of current state (may change immediately after return)

Use Cases:

- Thread pool statistics and monitoring

- Load balancing decisions

- Performance analysis

Implementation details:

- Returns current state of is_idle_ flag

- Relaxed memory ordering sufficient (advisory-only value)

- No synchronization needed (snapshot of current state)

- Returns

- true if worker is idle, false if actively processing a job

Definition at line 750 of file thread_worker.cpp.

References is_idle_.

◆ on_stop_requested()

|

override protected virtual |

Called when the worker is requested to stop.

Propagates cancellation signal to the currently executing job.

Overrides the base class hook to propagate cancellation to the currently executing job (if any). This allows jobs to cooperatively cancel when the worker thread is stopped.

Thread Safety:

- Called from thread requesting stop (not worker thread)

- Safe concurrent access with do_work() via atomic operations

Implementation details:

- Called from thread_base::stop() when worker shutdown is requested

- First cancels the worker's cancellation token (affects all future jobs)

- Then directly cancels the current job's token if a job is running

- Uses mutex synchronization to prevent use-after-free race condition

Cancellation Propagation:

- Cancel worker_cancellation_token_ (prevents new jobs from starting)

- Acquire queue_mutex_ to safely access current job

- If a job is running, get its cancellation token and cancel it

- Job will detect cancellation on its next is_cancelled() check

Thread Safety (Issue #225 fix):

- Called from stop() thread (not worker thread)

- Uses queue_mutex_ to synchronize with do_work() job destruction

- Prevents data race between job destructor and virtual method call

- This fixes EXC_BAD_ACCESS on ARM64 caused by use-after-free

Race Condition Fixed:

- Before: on_stop_requested() could access job while do_work() was destroying it

- After: queue_mutex_ ensures job is not destroyed while being accessed

- do_work() now destroys job while holding the mutex

- Note

- This implements cooperative cancellation - the job must check its cancellation token periodically to actually stop execution.
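As a rough illustration of what cooperative cancellation asks of a job, the sketch below polls a token between units of work. `mini_token` and `run_until_cancelled` are hypothetical stand-ins written for this example; the library's cancellation_token may expose a different interface.

```cpp
#include <atomic>
#include <cassert>
#include <cstddef>
#include <memory>
#include <utility>

// Hypothetical stand-in for cancellation_token: copies share one flag.
class mini_token {
public:
    mini_token() : flag_(std::make_shared<std::atomic<bool>>(false)) {}

    void cancel() { flag_->store(true, std::memory_order_release); }

    bool is_cancelled() const {
        return flag_->load(std::memory_order_acquire);
    }

private:
    std::shared_ptr<std::atomic<bool>> flag_;
};

// Runs up to max_steps units of work, checking the token before each one.
// Returns how many steps actually ran.
template <typename Step>
std::size_t run_until_cancelled(const mini_token& token,
                                std::size_t max_steps,
                                Step&& step) {
    std::size_t done = 0;
    while (done < max_steps && !token.is_cancelled()) {
        step(done);  // one unit of work; it cannot be interrupted midway
        ++done;
    }
    return done;
}
```

The step that is already running always finishes; cancellation only takes effect at the next check. A job that never checks its token will run to completion regardless of the stop request.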

Reimplemented from kcenon::thread::thread_base.

Definition at line 784 of file thread_worker.cpp.

References kcenon::thread::utils::formatter::format().

◆ set_context()

| auto kcenon::thread::thread_worker::set_context | ( | const thread_context & | context | ) | -> void |

Sets the thread context for this worker.

- Parameters

-

context The thread context providing access to logging and monitoring services.

Implementation details:

- Stores the context for use in logging and monitoring

- Should be called before starting the worker thread

- Context provides access to optional services

- Parameters

-

context Thread context with logging and monitoring services

Definition at line 163 of file thread_worker.cpp.

◆ set_diagnostics()

| void kcenon::thread::thread_worker::set_diagnostics | ( | diagnostics::thread_pool_diagnostics * | diag | ) |

Set the diagnostics instance for event tracing.

- Parameters

-

diag Pointer to the diagnostics instance.

When set, the worker will record execution events to the diagnostics instance if tracing is enabled. If nullptr, no events are recorded.

Definition at line 173 of file thread_worker.cpp.

References diagnostics_.

◆ set_diagnostics_sample_rate()

| void kcenon::thread::thread_worker::set_diagnostics_sample_rate | ( | std::uint32_t | rate | ) |

Set the diagnostics sampling rate.

- Parameters

-

rate Record diagnostics events every Nth job (1 = every job).

When rate > 1, only every Nth job records diagnostic events, reducing clock-read overhead while still providing representative data. The is_tracing_enabled() check remains the top-level gate.
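The every-Nth-job rule can be sketched as a counter and a modulo check. `diagnostics_sampler` is a hypothetical helper, and whether the real worker increments its counter before or after the check is an assumption here (this sketch checks first, so the first job is always recorded).

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical sampler: records when (counter % rate == 0).
class diagnostics_sampler {
public:
    explicit diagnostics_sampler(std::uint32_t rate) : rate_(rate) {
        assert(rate_ >= 1 && "a rate of 0 would divide by zero");
    }

    // Call once per job; returns true when this job should record events.
    bool should_record() {
        const bool record = (counter_ % rate_ == 0);
        ++counter_;
        return record;
    }

private:
    std::uint32_t rate_;
    std::uint64_t counter_ = 0;
};
```

With a rate of 3, jobs 0, 3, 6, and so on are recorded, so clock reads happen for roughly one job in three.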

Definition at line 178 of file thread_worker.cpp.

References diagnostics_sample_rate_.

◆ set_job_queue()

| auto kcenon::thread::thread_worker::set_job_queue | ( | std::shared_ptr< job_queue > | job_queue | ) | -> void |

Sets the job_queue that this worker should process.

Associates this worker with a job queue for processing.

- Parameters

-

job_queue A shared pointer to the queue containing jobs.

Once the queue is set and start() is called, the worker will repeatedly poll the queue for new jobs and process them.

Implementation details:

- Stores shared pointer to enable job dequeuing

- Thread-safe queue replacement with proper synchronization

- Waits for current job completion before replacing queue

- Multiple workers can share the same job queue

Queue Replacement Synchronization:

- Acquires mutex to prevent concurrent do_work() access

- Sets replacement flag to prevent new job processing

- Waits for current job to complete (current_job_ == nullptr)

- Replaces queue pointer atomically

- Notifies worker thread to resume

Thread Safety:

- Safe to call from any thread

- Coordinates with do_work() via mutex and condition variable

- Prevents use-after-free during queue replacement
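The replacement handshake listed above can be sketched with a mutex, a condition variable, and two flags. This is an illustrative reduction, not the worker's actual code: `replaceable_queue`, `begin_job`, and `end_job` are hypothetical names, and the sketch assumes one worker thread and one replacing thread at a time.

```cpp
#include <cassert>
#include <condition_variable>
#include <memory>
#include <mutex>
#include <utility>

// Hypothetical holder for a replaceable shared queue. Queue is any type;
// the real worker stores std::shared_ptr<job_queue>.
template <typename Queue>
class replaceable_queue {
public:
    explicit replaceable_queue(std::shared_ptr<Queue> initial)
        : queue_(std::move(initial)) {}

    // Worker side: waits out a pending replacement, then marks a job running.
    std::shared_ptr<Queue> begin_job() {
        std::unique_lock<std::mutex> lock(mutex_);
        cv_.wait(lock, [this] { return !being_replaced_; });
        assert(!job_running_ && "one job at a time per worker");
        job_running_ = true;
        return queue_;
    }

    // Worker side: marks the job finished and wakes a waiting replacer.
    void end_job() {
        {
            std::lock_guard<std::mutex> lock(mutex_);
            job_running_ = false;
        }
        cv_.notify_all();
    }

    // Caller side: blocks new jobs, waits for the running one, swaps the pointer.
    void replace(std::shared_ptr<Queue> next) {
        {
            std::unique_lock<std::mutex> lock(mutex_);
            being_replaced_ = true;
            cv_.wait(lock, [this] { return !job_running_; });
            queue_ = std::move(next);
            being_replaced_ = false;
        }
        cv_.notify_all();  // let the worker resume
    }

private:
    std::mutex mutex_;
    std::condition_variable cv_;
    std::shared_ptr<Queue> queue_;
    bool job_running_ = false;
    bool being_replaced_ = false;
};
```

Because the worker holds its own `shared_ptr` copy for the duration of a job, the old queue stays alive until that job ends even after the pointer is swapped.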

- Parameters

-

job_queue Shared pointer to the job queue for this worker

Definition at line 114 of file thread_worker.cpp.

◆ set_metrics()

| void kcenon::thread::thread_worker::set_metrics | ( | std::shared_ptr< metrics::ThreadPoolMetrics > | metrics | ) |

Provide shared metrics storage for this worker.

Definition at line 168 of file thread_worker.cpp.

References metrics_.

◆ set_policy()

| void kcenon::thread::thread_worker::set_policy | ( | const worker_policy & | policy | ) |

Set the worker policy for this worker.

- Parameters

-

policy The worker policy configuration.

Definition at line 183 of file thread_worker.cpp.

References kcenon::thread::worker_policy::enable_work_stealing, local_deque_, and policy_.

◆ set_steal_function()

| void kcenon::thread::thread_worker::set_steal_function | ( | std::function< job *(std::size_t)> | steal_fn | ) |

Set the steal function for finding other workers' deques.

- Parameters

-

steal_fn Function that returns a job to steal, or nullptr.

The steal function is called when this worker's local deque and the global queue are both empty. It should try to steal work from other workers.

Definition at line 204 of file thread_worker.cpp.

References steal_function_.

◆ should_continue_work()

|

nodiscard override protected virtual |

Determines if there are jobs available in the queue to continue working on.

Determines if the worker should continue processing jobs.

- Returns

true if there is work in the queue, false otherwise.

Called in the thread's main loop (defined by thread_base) to decide if do_work() should be invoked. Returns true if the job queue is not empty; otherwise, false.

Implementation details:

- Used by thread_base to control the work loop

- Returns false if no job queue is set (prevents infinite loop)

- Returns true while queue is not stopped (even if empty)

- Actual job waiting is handled by non-blocking polling in do_work()

- Thread-safe operation (job_queue methods are thread-safe)

Work Loop Control:

- Worker continues until queue is explicitly stopped

- Empty queue does NOT cause worker exit - do_work() will poll for jobs

- This prevents premature worker termination before jobs arrive

- Worker exits gracefully only when queue is stopped

Design Rationale - Solving the Two-Level Condition Variable Problem:

- thread_base waits on its own condition variable (worker_condition_)

- job_queue notifies its own condition variable (a different object)

- If should_continue_work() returns false on empty queue:

  - thread_base waits on worker_condition_

  - job enqueue notifies job_queue's condition variable

  - Worker never wakes up, which is a deadlock

- By returning true until stopped, worker enters do_work() immediately

- do_work() uses non-blocking try_dequeue() with polling

- This completely avoids the two-level CV problem

- CPU overhead is minimal due to sleep between polling attempts

Shutdown Safety:

- thread_pool::stop() can call operations in any order without race conditions

- Queue stop sets is_stopped() = true (atomic operation)

- Worker sees stopped flag and exits cleanly

- No dependency on operation ordering

Thread Safety:

- Synchronizes access to job_queue_ with queue_mutex_

- Prevents race conditions with set_job_queue() and do_work()

- Uses lock_guard for RAII-based exception safety

- Mutex marked mutable to allow locking in const method

- Returns

- true if worker should continue processing, false to exit

Reimplemented from kcenon::thread::thread_base.

Definition at line 317 of file thread_worker.cpp.

References job_queue_, and queue_mutex_.

◆ try_get_job()

|

nodiscard private |

Try to get a job from local deque first, then global queue.

- Returns

- A unique_ptr to the job, or nullptr if no work available.

Definition at line 209 of file thread_worker.cpp.

References kcenon::thread::worker_policy::enable_work_stealing, job_queue_, local_deque_, policy_, and queue_mutex_.

◆ try_steal_work()

|

nodiscard private |

Try to steal work from other workers.

- Returns

- A unique_ptr to the stolen job, or nullptr if stealing failed.

Definition at line 237 of file thread_worker.cpp.

References kcenon::thread::worker_policy::enable_work_stealing, kcenon::thread::worker_policy::max_steal_attempts, policy_, kcenon::thread::worker_policy::steal_backoff, steal_function_, and worker_id_.

Member Data Documentation

◆ context_

|

private |

The thread context providing access to logging and monitoring services.

This context enables the worker to log messages and report metrics through the configured services.

Definition at line 314 of file thread_worker.h.

◆ current_job_

|

private |

Pointer to the currently executing job.

This is set atomically at the start of job execution and cleared when the job completes. Used by on_stop_requested() to cancel the running job.

Memory Ordering:

- Release when storing (ensures job state visible to cancellation thread)

- Acquire when loading (ensures we see the correct job state)

- Note

- Raw pointer used because we don't own the job (it's owned by unique_ptr in do_work()). Safe because job lifetime is guaranteed during execution.

Definition at line 366 of file thread_worker.h.

Referenced by get_current_job_info().

◆ current_job_start_time_

|

private |

Time point when the current job started executing.

Used to track job execution time for diagnostics. Only valid when a job is currently executing.

Definition at line 426 of file thread_worker.h.

Referenced by get_current_job_info().

◆ diagnostics_

|

private |

Pointer to the diagnostics instance for event tracing.

When set, the worker records execution events if tracing is enabled. Raw pointer because the diagnostics outlives the worker.

Definition at line 327 of file thread_worker.h.

Referenced by set_diagnostics().

◆ diagnostics_counter_

|

private |

Counter for diagnostics sampling.

Incremented on each job execution. Diagnostics events are recorded when (diagnostics_counter_ % diagnostics_sample_rate_ == 0).

Definition at line 351 of file thread_worker.h.

◆ diagnostics_sample_rate_

|

private |

Diagnostics sampling rate (record every Nth job).

Default is 1 (every job) for backward compatibility.

Definition at line 343 of file thread_worker.h.

Referenced by set_diagnostics_sample_rate().

◆ is_idle_

|

private |

Indicates whether the worker is currently idle (not processing a job).

This flag is set to true when the worker is waiting for jobs and false when actively processing a job. Updated atomically for thread-safe access.

Memory Ordering:

- Relaxed ordering sufficient (no synchronization dependencies)

- Value is advisory only (race conditions between check and state change are acceptable)

- Note

- Used by thread pool for statistics and monitoring purposes.

Definition at line 380 of file thread_worker.h.

Referenced by is_idle().

◆ job_queue_

|

private |

A shared pointer to the job queue from which this worker obtains jobs.

Multiple workers can share the same queue, enabling concurrent processing of queued jobs.

Definition at line 306 of file thread_worker.h.

Referenced by should_continue_work(), and try_get_job().

◆ jobs_completed_

|

private |

Total number of jobs successfully completed by this worker.

Incremented atomically after each successful job execution.

Definition at line 387 of file thread_worker.h.

Referenced by get_jobs_completed().

◆ jobs_failed_

|

private |

Total number of jobs that failed during execution.

Incremented atomically when a job's do_work() returns an error.

Definition at line 394 of file thread_worker.h.

Referenced by get_jobs_failed().

◆ local_deque_

|

private |

Local work-stealing deque for this worker.

When work-stealing is enabled, jobs submitted to this worker are stored in this deque. The owner (this worker) can push/pop from the bottom (LIFO), while other workers can steal from the top (FIFO).
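The owner and thief access pattern can be illustrated with a mutex-protected deque. This is only a behavioral sketch: the real lockfree::work_stealing_deque is lock-free, and the class and method names below (`mini_stealing_deque`, `push`, `pop`, `steal`) are assumptions chosen for the example.

```cpp
#include <cassert>
#include <deque>
#include <mutex>
#include <optional>
#include <utility>

// Mutex-based stand-in that shows only the ordering, not the lock-free design.
template <typename T>
class mini_stealing_deque {
public:
    // Owner: push to the bottom.
    void push(T value) {
        std::lock_guard<std::mutex> lock(mutex_);
        items_.push_back(std::move(value));
    }

    // Owner: pop from the bottom (most recently pushed first, LIFO).
    std::optional<T> pop() {
        std::lock_guard<std::mutex> lock(mutex_);
        if (items_.empty()) return std::nullopt;
        T value = std::move(items_.back());
        items_.pop_back();
        return value;
    }

    // Thief: take from the top (oldest first, FIFO).
    std::optional<T> steal() {
        std::lock_guard<std::mutex> lock(mutex_);
        if (items_.empty()) return std::nullopt;
        T value = std::move(items_.front());
        items_.pop_front();
        return value;
    }

private:
    std::mutex mutex_;
    std::deque<T> items_;
};
```

Having the owner and thieves work at opposite ends means they contend only when the deque is nearly empty, and the owner tends to pick up the job it pushed most recently.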

Definition at line 469 of file thread_worker.h.

Referenced by get_local_deque(), set_policy(), and try_get_job().

◆ metrics_

|

private |

Shared metrics aggregator provided by the owning thread pool.

Definition at line 319 of file thread_worker.h.

Referenced by set_metrics().

◆ next_worker_id_

|

static private |

Static counter for generating unique worker IDs.

Definition at line 284 of file thread_worker.h.

◆ policy_

|

private |

Worker policy configuration.

Controls worker behavior including work-stealing settings.

Definition at line 459 of file thread_worker.h.

Referenced by get_policy(), set_policy(), try_get_job(), and try_steal_work().

◆ queue_being_replaced_

|

private |

Indicates whether queue replacement is in progress.

When true, the worker thread should wait before accessing the queue. Protected by queue_mutex_.

Definition at line 452 of file thread_worker.h.

◆ queue_cv_

|

private |

Condition variable for queue replacement synchronization.

Used to wait for current job completion before replacing the queue.

Definition at line 444 of file thread_worker.h.

◆ queue_mutex_

|

mutable private |

Mutex protecting job queue replacement.

This mutex synchronizes access to job_queue_ during replacement operations to prevent race conditions between do_work(), set_job_queue(), and should_continue_work().

- Note

- Marked mutable to allow locking in const methods like should_continue_work(). The const qualifier applies to the logical state, not the mutex itself.

Definition at line 437 of file thread_worker.h.

Referenced by get_current_job_info(), should_continue_work(), and try_get_job().

◆ state_since_rep_

|

private |

Time point when the worker entered its current state.

Updated when transitioning between idle and busy states. Used to calculate current state duration.
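The member's type suggests that the time point is stored as its underlying tick count rather than as a `time_point` object. A minimal sketch of that round trip, assuming a hypothetical `state_clock` helper and the acquire ordering mentioned for get_state_since(), might look like this:

```cpp
#include <atomic>
#include <cassert>
#include <chrono>

// Hypothetical helper: keeps a steady_clock time point in an atomic integer.
class state_clock {
public:
    using clock = std::chrono::steady_clock;

    // Writer: store the tick count of the new state's start time.
    void mark(clock::time_point now) {
        since_rep_.store(now.time_since_epoch().count(),
                         std::memory_order_release);
    }

    // Reader: rebuild the time point from the stored tick count.
    clock::time_point since() const {
        const clock::time_point::rep ticks =
            since_rep_.load(std::memory_order_acquire);
        return clock::time_point(clock::duration(ticks));
    }

private:
    std::atomic<clock::time_point::rep> since_rep_{0};
};
```

A reader can then compute the current state's duration as `clock::now() - since()` without taking a lock.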

Definition at line 416 of file thread_worker.h.

Referenced by get_state_since().

◆ steal_function_

|

private |

Function to steal work from other workers.

This function is provided by the thread pool and returns a stolen job from another worker's deque, or nullptr if no work available.

Definition at line 477 of file thread_worker.h.

Referenced by set_steal_function(), and try_steal_work().

◆ steal_victim_index_

|

private |

Counter for round-robin steal victim selection.

Definition at line 482 of file thread_worker.h.

◆ total_busy_time_ns_

|

private |

Total time spent executing jobs (busy time) in nanoseconds.

Accumulated after each job execution with the job's execution duration.
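The accumulation pattern can be sketched as an atomic counter of nanoseconds that is added to after each job and read back as a duration. `busy_time_counter` is a hypothetical name, and the relaxed ordering follows what the getter documentation states for reads; the ordering used on the write side is an assumption.

```cpp
#include <atomic>
#include <cassert>
#include <chrono>
#include <cstdint>

// Hypothetical counter mirroring an atomic nanosecond total.
class busy_time_counter {
public:
    // Called once per finished job with that job's execution duration.
    void add(std::chrono::nanoseconds elapsed) {
        assert(elapsed.count() >= 0);
        total_ns_.fetch_add(static_cast<std::uint64_t>(elapsed.count()),
                            std::memory_order_relaxed);
    }

    // Snapshot of the running total as a chrono duration.
    std::chrono::nanoseconds total() const noexcept {
        const auto ns = total_ns_.load(std::memory_order_relaxed);
        return std::chrono::nanoseconds(
            static_cast<std::chrono::nanoseconds::rep>(ns));
    }

private:
    std::atomic<std::uint64_t> total_ns_{0};
};
```

Relaxed ordering is enough for a statistic like this because no other data is published through the counter; readers only need some recent value.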

Definition at line 401 of file thread_worker.h.

Referenced by get_total_busy_time().

◆ total_idle_time_ns_

|

private |

Total time spent waiting for jobs (idle time) in nanoseconds.

Accumulated when transitioning from idle to busy state.

Definition at line 408 of file thread_worker.h.

Referenced by get_total_idle_time().

◆ use_time_tag_

|

private |

Indicates whether to use time tags or timestamps for job processing.

When true, the worker might record timestamps (e.g., job start/end times) or log them for debugging/monitoring. The exact usage depends on the job and override details in derived classes.

Definition at line 298 of file thread_worker.h.

◆ worker_cancellation_token_

|

private |

Cancellation token for this worker.

This token is propagated to jobs during execution, allowing them to cooperatively cancel when the worker is stopped. The token is cancelled in on_stop_requested().

Definition at line 336 of file thread_worker.h.

◆ worker_id_

|

private |

Unique ID for this worker instance.

Definition at line 289 of file thread_worker.h.

Referenced by get_worker_id(), and try_steal_work().

The documentation for this class was generated from the following files:

- include/kcenon/thread/core/thread_worker.h

- src/impl/thread_pool/thread_worker.cpp