NUMA-aware work stealer with enhanced victim selection policies.

#include <numa_work_stealer.h>

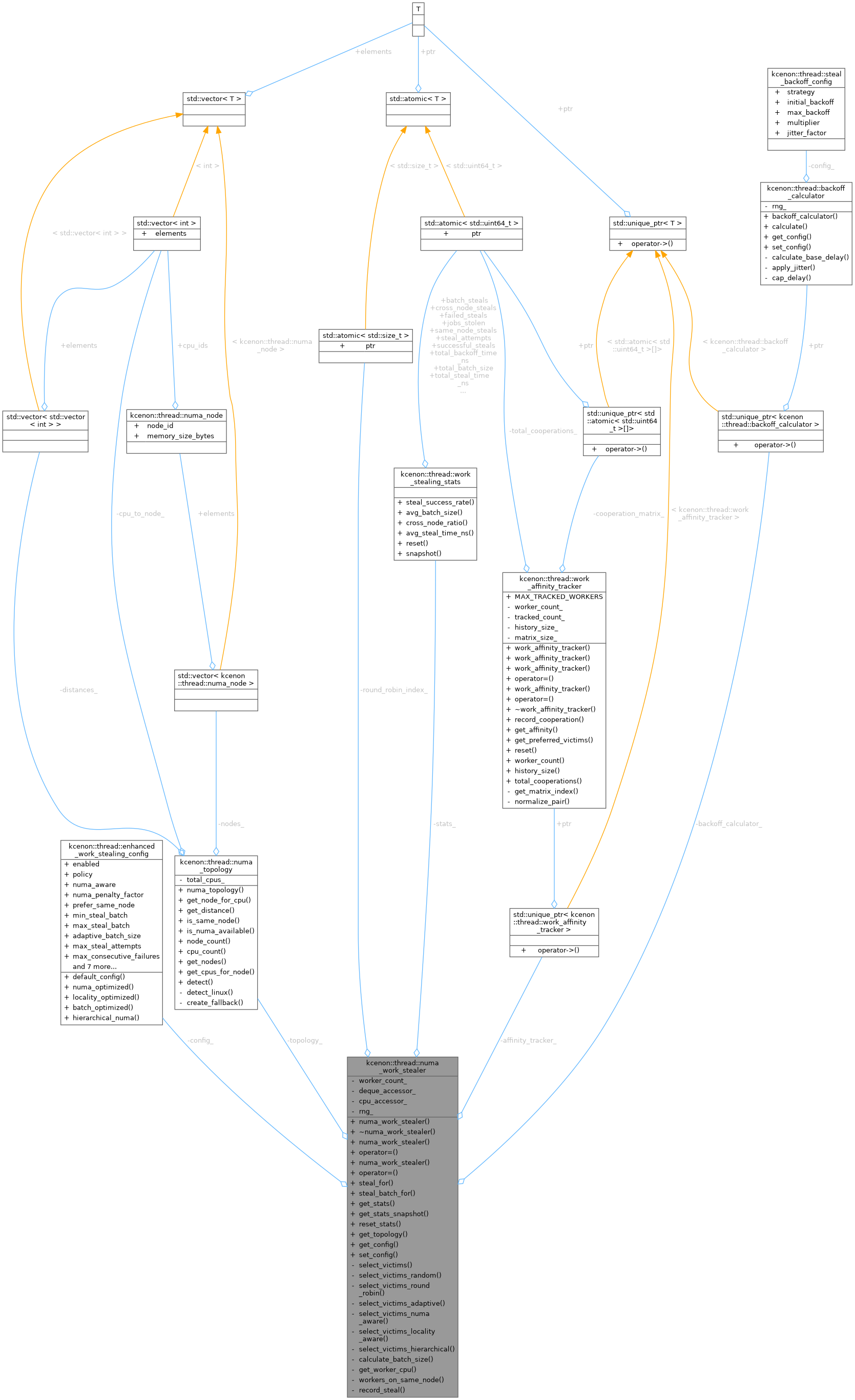

Public Types

| Kind | Declaration | Description |
| --- | --- | --- |
| using | deque_accessor_fn = std::function<lockfree::work_stealing_deque<job*>*(std::size_t)> | Function type for accessing worker's local deque. |
| using | cpu_accessor_fn = std::function<int(std::size_t)> | Function type for getting worker's CPU affinity. |

Public Member Functions

| Return type | Member | Description |
| --- | --- | --- |
| | numa_work_stealer (std::size_t worker_count, deque_accessor_fn deque_accessor, cpu_accessor_fn cpu_accessor, enhanced_work_stealing_config config={}) | Construct a NUMA-aware work stealer. |
| | ~numa_work_stealer ()=default | Destructor. |
| | numa_work_stealer (const numa_work_stealer &)=delete | |
| numa_work_stealer & | operator= (const numa_work_stealer &)=delete | |
| | numa_work_stealer (numa_work_stealer &&)=delete | |
| numa_work_stealer & | operator= (numa_work_stealer &&)=delete | |
| auto | steal_for (std::size_t worker_id) -> job * | Attempt to steal work for a worker. |
| auto | steal_batch_for (std::size_t worker_id, std::size_t max_count) -> std::vector< job * > | Attempt to steal multiple jobs for a worker. |
| auto | get_stats () const -> const work_stealing_stats & | Get the current statistics. |
| auto | get_stats_snapshot () const -> work_stealing_stats_snapshot | Get a snapshot of current statistics. |
| void | reset_stats () | Reset all statistics to zero. |
| auto | get_topology () const -> const numa_topology & | Get the NUMA topology information. |
| auto | get_config () const -> const enhanced_work_stealing_config & | Get the current configuration. |
| void | set_config (const enhanced_work_stealing_config &config) | Update the configuration. |

Private Member Functions

| Return type | Member | Description |
| --- | --- | --- |
| auto | select_victims (std::size_t requester_id, std::size_t count) -> std::vector< std::size_t > | Select victim workers based on the configured policy. |
| auto | select_victims_random (std::size_t requester_id, std::size_t count) -> std::vector< std::size_t > | Select victims using random policy. |
| auto | select_victims_round_robin (std::size_t requester_id, std::size_t count) -> std::vector< std::size_t > | Select victims using round-robin policy. |
| auto | select_victims_adaptive (std::size_t requester_id, std::size_t count) -> std::vector< std::size_t > | Select victims using adaptive (queue-size based) policy. |
| auto | select_victims_numa_aware (std::size_t requester_id, std::size_t count) -> std::vector< std::size_t > | Select victims using NUMA-aware policy. |
| auto | select_victims_locality_aware (std::size_t requester_id, std::size_t count) -> std::vector< std::size_t > | Select victims using locality-aware policy. |
| auto | select_victims_hierarchical (std::size_t requester_id, std::size_t count) -> std::vector< std::size_t > | Select victims using hierarchical policy. |
| auto | calculate_batch_size (std::size_t victim_queue_size) const -> std::size_t | Calculate batch size based on configuration and victim queue depth. |
| auto | get_worker_cpu (std::size_t worker_id) const -> int | Get the CPU ID for a worker. |
| auto | workers_on_same_node (std::size_t worker_a, std::size_t worker_b) const -> bool | Check if two workers are on the same NUMA node. |
| void | record_steal (std::size_t thief_id, std::size_t victim_id) | Record a successful steal for affinity tracking. |

Private Attributes

| Type | Name |
| --- | --- |
| std::size_t | worker_count_ |
| deque_accessor_fn | deque_accessor_ |
| cpu_accessor_fn | cpu_accessor_ |
| enhanced_work_stealing_config | config_ |
| numa_topology | topology_ |
| work_stealing_stats | stats_ |
| std::unique_ptr< work_affinity_tracker > | affinity_tracker_ |
| std::unique_ptr< backoff_calculator > | backoff_calculator_ |
| std::mt19937_64 | rng_ |
| std::atomic< std::size_t > | round_robin_index_ {0} |

Detailed Description

NUMA-aware work stealer with enhanced victim selection policies.

This class implements advanced work-stealing strategies with NUMA awareness, locality tracking, batch stealing, and comprehensive statistics collection. It coordinates stealing across multiple workers using configurable policies.

Design Goals

- Minimize cross-NUMA node memory access

- Maximize cache locality through affinity tracking

- Reduce contention through intelligent victim selection

- Provide detailed statistics for performance analysis

Thread Safety

All public methods are thread-safe and can be called concurrently from multiple worker threads. Statistics updates use atomic operations.

Memory Model

- Victim selection: sequential consistency for correctness

- Statistics: relaxed ordering for performance

- Topology access: read-only after construction
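The relaxed-ordering statistics and the non-atomic snapshot described above can be illustrated with a small stand-in. This is only a sketch: `demo_stats` and `demo_snapshot` are hypothetical names, not the library's `work_stealing_stats` or `work_stealing_stats_snapshot`, and the real counters may differ.

```cpp
#include <atomic>
#include <cassert>
#include <cstdint>

// Hypothetical plain-value copy of the counters (not the library's snapshot type).
struct demo_snapshot {
    std::uint64_t attempts;
    std::uint64_t successes;
};

// Hypothetical statistics block (not the library's work_stealing_stats).
struct demo_stats {
    std::atomic<std::uint64_t> attempts{0};
    std::atomic<std::uint64_t> successes{0};

    // Counters need atomicity but no ordering with other memory,
    // so relaxed operations are sufficient.
    void record_attempt(bool success) {
        attempts.fetch_add(1, std::memory_order_relaxed);
        if (success) {
            successes.fetch_add(1, std::memory_order_relaxed);
        }
    }

    // Each field is read atomically, but the pair is not captured as one
    // atomic unit; concurrent updates may land between the two loads.
    demo_snapshot snapshot() const {
        return {attempts.load(std::memory_order_relaxed),
                successes.load(std::memory_order_relaxed)};
    }

    void reset() {
        attempts.store(0, std::memory_order_relaxed);
        successes.store(0, std::memory_order_relaxed);
    }
};
```

A snapshot taken this way is safe to read and print without further synchronization, at the cost of being only approximately consistent across fields.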

Usage Example

Definition at line 98 of file numa_work_stealer.h.

Member Typedef Documentation

◆ cpu_accessor_fn

| using kcenon::thread::numa_work_stealer::cpu_accessor_fn = std::function<int(std::size_t)> |

Function type for getting worker's CPU affinity.

- Parameters

- worker_id: The worker ID

- Returns

- Preferred CPU for the worker, or -1 if no preference

Definition at line 113 of file numa_work_stealer.h.

◆ deque_accessor_fn

| using kcenon::thread::numa_work_stealer::deque_accessor_fn = std::function<lockfree::work_stealing_deque<job*>*(std::size_t)> |

Function type for accessing worker's local deque.

- Parameters

- worker_id: The worker ID

- Returns

- Pointer to the worker's local deque, or nullptr if not available

Definition at line 106 of file numa_work_stealer.h.

Constructor & Destructor Documentation

◆ numa_work_stealer() [1/3]

| kcenon::thread::numa_work_stealer::numa_work_stealer (std::size_t worker_count, deque_accessor_fn deque_accessor, cpu_accessor_fn cpu_accessor, enhanced_work_stealing_config config={}) |

Construct a NUMA-aware work stealer.

- Parameters

- worker_count: Number of workers in the pool

- deque_accessor: Function to access worker deques

- cpu_accessor: Function to get worker CPU affinity

- config: Configuration for work stealing

- Note

- The accessor functions must remain valid for the lifetime of this object.
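The lifetime note matters most when the accessors are lambdas that capture pool state. The following sketch shows one way such accessors might be built. It assumes stand-in types: `demo_deque` (a plain `std::deque`) replaces `lockfree::work_stealing_deque<job*>`, and `demo_pool` is a hypothetical owner of the per-worker state.

```cpp
#include <cassert>
#include <cstddef>
#include <deque>
#include <functional>
#include <vector>

struct job {};                        // stand-in for the library's job type
using demo_deque = std::deque<job*>;  // stand-in for lockfree::work_stealing_deque<job*>

// Same shapes as deque_accessor_fn and cpu_accessor_fn, over the stand-in deque.
using demo_deque_accessor = std::function<demo_deque*(std::size_t)>;
using demo_cpu_accessor = std::function<int(std::size_t)>;

// Hypothetical pool that owns the per-worker deques and CPU preferences.
struct demo_pool {
    std::vector<demo_deque> deques;
    std::vector<int> cpus;  // -1 means "no preference"

    // The lambdas capture `this`, so the pool must outlive every object
    // that stores these accessors.
    demo_deque_accessor make_deque_accessor() {
        return [this](std::size_t id) -> demo_deque* {
            return id < deques.size() ? &deques[id] : nullptr;
        };
    }

    demo_cpu_accessor make_cpu_accessor() const {
        return [this](std::size_t id) -> int {
            return id < cpus.size() ? cpus[id] : -1;
        };
    }
};
```

Returning `nullptr` or `-1` for unknown worker IDs mirrors the documented return contracts of the two accessor types.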

Definition at line 16 of file numa_work_stealer.cpp.

References backoff_calculator_, kcenon::thread::enhanced_work_stealing_config::backoff_multiplier, kcenon::thread::enhanced_work_stealing_config::backoff_strategy, config_, kcenon::thread::enhanced_work_stealing_config::initial_backoff, kcenon::thread::enhanced_work_stealing_config::max_backoff, kcenon::thread::steal_backoff_config::strategy, kcenon::thread::enhanced_work_stealing_config::track_locality, and worker_count_.

◆ ~numa_work_stealer()

| kcenon::thread::numa_work_stealer::~numa_work_stealer () | default |

Destructor.

◆ numa_work_stealer() [2/3]

| kcenon::thread::numa_work_stealer::numa_work_stealer (const numa_work_stealer &) | delete |

◆ numa_work_stealer() [3/3]

| kcenon::thread::numa_work_stealer::numa_work_stealer (numa_work_stealer &&) | delete |

Member Function Documentation

◆ calculate_batch_size()

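| auto kcenon::thread::numa_work_stealer::calculate_batch_size (std::size_t victim_queue_size) const -> std::size_t | nodiscard, private |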

Calculate batch size based on configuration and victim queue depth.

Definition at line 570 of file numa_work_stealer.cpp.
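The page does not state the formula used here. As an illustration only, one common heuristic is to take about half of the victim's queue and clamp it to configured bounds. In the sketch below, `demo_batch_size`, `min_batch`, and `max_batch` are hypothetical names, not confirmed fields of `enhanced_work_stealing_config`.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>

// Hypothetical heuristic: steal roughly half of the victim's queue,
// clamped to [min_batch, max_batch] and never more than is available.
std::size_t demo_batch_size(std::size_t victim_queue_size,
                            std::size_t min_batch,
                            std::size_t max_batch) {
    assert(min_batch <= max_batch);
    if (victim_queue_size == 0) {
        return 0;  // nothing to steal
    }
    // Round up so a queue holding a single job can still be stolen from.
    const std::size_t half = (victim_queue_size + 1) / 2;
    const std::size_t clamped = std::min(std::max(half, min_batch), max_batch);
    return std::min(victim_queue_size, clamped);
}
```

Taking half leaves the victim with work of its own, which tends to reduce immediate counter-stealing.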

◆ get_config()

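| auto kcenon::thread::numa_work_stealer::get_config () const -> const enhanced_work_stealing_config & | nodiscard |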

Get the current configuration.

- Returns

- Reference to the work-stealing configuration

Definition at line 253 of file numa_work_stealer.cpp.

References config_.

◆ get_stats()

| auto kcenon::thread::numa_work_stealer::get_stats () const -> const work_stealing_stats & | nodiscard |

Get the current statistics.

- Returns

- Reference to the work-stealing statistics

Definition at line 233 of file numa_work_stealer.cpp.

References stats_.

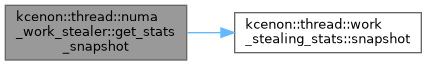

◆ get_stats_snapshot()

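| auto kcenon::thread::numa_work_stealer::get_stats_snapshot () const -> work_stealing_stats_snapshot | nodiscard |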

Get a snapshot of current statistics.

- Returns

- Non-atomic copy of statistics for safe reading

Definition at line 238 of file numa_work_stealer.cpp.

References kcenon::thread::work_stealing_stats::snapshot(), and stats_.

◆ get_topology()

| auto kcenon::thread::numa_work_stealer::get_topology () const -> const numa_topology & | nodiscard |

Get the NUMA topology information.

- Returns

- Reference to the detected NUMA topology

Definition at line 248 of file numa_work_stealer.cpp.

References topology_.

◆ get_worker_cpu()

| auto kcenon::thread::numa_work_stealer::get_worker_cpu (std::size_t worker_id) const -> int | nodiscard, private |

Get the CPU ID for a worker.

Definition at line 587 of file numa_work_stealer.cpp.

◆ operator=() [1/2]

| numa_work_stealer & kcenon::thread::numa_work_stealer::operator= (const numa_work_stealer &) | delete |

◆ operator=() [2/2]

| numa_work_stealer & kcenon::thread::numa_work_stealer::operator= (numa_work_stealer &&) | delete |

◆ record_steal()

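| void kcenon::thread::numa_work_stealer::record_steal (std::size_t thief_id, std::size_t victim_id) | private |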

Record a successful steal for affinity tracking.

Definition at line 610 of file numa_work_stealer.cpp.

References affinity_tracker_, config_, and kcenon::thread::enhanced_work_stealing_config::track_locality.

◆ reset_stats()

| void kcenon::thread::numa_work_stealer::reset_stats () |

Reset all statistics to zero.

Definition at line 243 of file numa_work_stealer.cpp.

References kcenon::thread::work_stealing_stats::reset(), and stats_.

◆ select_victims()

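| auto kcenon::thread::numa_work_stealer::select_victims (std::size_t requester_id, std::size_t count) -> std::vector< std::size_t > | nodiscard, private |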

Select victim workers based on the configured policy.

- Parameters

- requester_id: Worker requesting victims

- count: Maximum number of victims to select

- Returns

- Vector of worker IDs to attempt stealing from

Definition at line 282 of file numa_work_stealer.cpp.

References kcenon::thread::adaptive, kcenon::thread::hierarchical, kcenon::thread::locality_aware, kcenon::thread::numa_aware, kcenon::thread::random, and kcenon::thread::round_robin.

◆ select_victims_adaptive()

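| auto kcenon::thread::numa_work_stealer::select_victims_adaptive (std::size_t requester_id, std::size_t count) -> std::vector< std::size_t > | nodiscard, private |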

Select victims using adaptive (queue-size based) policy.

Definition at line 356 of file numa_work_stealer.cpp.
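A queue-size based policy can be sketched as "prefer the fullest queues". The function below is an illustration under assumptions: `pick_by_queue_size` is a hypothetical free function, and it takes a precomputed vector of queue sizes instead of querying deques through the accessor.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical sketch: rank other workers by queue depth, deepest first.
std::vector<std::size_t> pick_by_queue_size(const std::vector<std::size_t>& queue_sizes,
                                            std::size_t requester_id,
                                            std::size_t count) {
    std::vector<std::size_t> candidates;
    for (std::size_t id = 0; id < queue_sizes.size(); ++id) {
        // Skip the requester itself and workers with nothing to steal.
        if (id != requester_id && queue_sizes[id] > 0) {
            candidates.push_back(id);
        }
    }
    // Stable sort keeps lower worker IDs first among equally deep queues.
    std::stable_sort(candidates.begin(), candidates.end(),
                     [&](std::size_t a, std::size_t b) {
                         return queue_sizes[a] > queue_sizes[b];
                     });
    if (candidates.size() > count) {
        candidates.resize(count);
    }
    return candidates;
}
```

Queue sizes observed this way are only hints in a concurrent pool, since a victim may drain its queue before the steal is attempted.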

◆ select_victims_hierarchical()

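| auto kcenon::thread::numa_work_stealer::select_victims_hierarchical (std::size_t requester_id, std::size_t count) -> std::vector< std::size_t > | nodiscard, private |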

Select victims using hierarchical policy.

Definition at line 505 of file numa_work_stealer.cpp.

◆ select_victims_locality_aware()

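| auto kcenon::thread::numa_work_stealer::select_victims_locality_aware (std::size_t requester_id, std::size_t count) -> std::vector< std::size_t > | nodiscard, private |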

Select victims using locality-aware policy.

Definition at line 474 of file numa_work_stealer.cpp.

◆ select_victims_numa_aware()

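| auto kcenon::thread::numa_work_stealer::select_victims_numa_aware (std::size_t requester_id, std::size_t count) -> std::vector< std::size_t > | nodiscard, private |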

Select victims using NUMA-aware policy.

Definition at line 415 of file numa_work_stealer.cpp.
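To illustrate the goal of minimizing cross-node access, a NUMA-aware ordering can place same-node workers ahead of remote ones. This sketch assumes a precomputed `node_of_worker` table. Both that table and `pick_numa_first` are hypothetical, and the library's own policy may weigh candidates differently.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical sketch: order candidates so that workers on the requester's
// NUMA node come before workers on other nodes.
std::vector<std::size_t> pick_numa_first(const std::vector<int>& node_of_worker,
                                         std::size_t requester_id,
                                         std::size_t count) {
    assert(requester_id < node_of_worker.size());
    const int home = node_of_worker[requester_id];

    std::vector<std::size_t> candidates;
    for (std::size_t id = 0; id < node_of_worker.size(); ++id) {
        if (id != requester_id) {
            candidates.push_back(id);
        }
    }
    // Stable partition preserves worker-ID order inside each group.
    std::stable_partition(candidates.begin(), candidates.end(),
                          [&](std::size_t id) { return node_of_worker[id] == home; });
    if (candidates.size() > count) {
        candidates.resize(count);
    }
    return candidates;
}
```

Remote workers remain eligible as a fallback, so a thief on an idle node can still find work elsewhere.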

◆ select_victims_random()

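| auto kcenon::thread::numa_work_stealer::select_victims_random (std::size_t requester_id, std::size_t count) -> std::vector< std::size_t > | nodiscard, private |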

Select victims using random policy.

Definition at line 310 of file numa_work_stealer.cpp.
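A random policy can be sketched as a shuffle of all other workers, truncated to the requested count. `pick_random` is a hypothetical free function. It takes the generator by reference, and because `std::mt19937_64` is not safe for concurrent use, the sketch assumes the caller serializes access or uses one generator per thread.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <random>
#include <vector>

// Hypothetical sketch: choose up to `count` distinct victims uniformly at random.
std::vector<std::size_t> pick_random(std::mt19937_64& rng,
                                     std::size_t worker_count,
                                     std::size_t requester_id,
                                     std::size_t count) {
    std::vector<std::size_t> candidates;
    for (std::size_t id = 0; id < worker_count; ++id) {
        if (id != requester_id) {
            candidates.push_back(id);  // a worker never steals from itself
        }
    }
    std::shuffle(candidates.begin(), candidates.end(), rng);
    if (candidates.size() > count) {
        candidates.resize(count);
    }
    return candidates;
}
```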

◆ select_victims_round_robin()

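| auto kcenon::thread::numa_work_stealer::select_victims_round_robin (std::size_t requester_id, std::size_t count) -> std::vector< std::size_t > | nodiscard, private |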

Select victims using round-robin policy.

Definition at line 336 of file numa_work_stealer.cpp.
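A round-robin policy over a shared atomic cursor, similar in spirit to the `round_robin_index_` attribute, might look like the sketch below. `pick_round_robin` is a hypothetical free function, and the choice of a relaxed `fetch_add` is an assumption made for this illustration.

```cpp
#include <atomic>
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical sketch: walk the worker ring starting at a shared cursor.
std::vector<std::size_t> pick_round_robin(std::atomic<std::size_t>& cursor,
                                          std::size_t worker_count,
                                          std::size_t requester_id,
                                          std::size_t count) {
    assert(worker_count > 0);
    std::vector<std::size_t> victims;
    // One atomic increment reserves the starting position for this caller,
    // so concurrent thieves begin at different points in the ring.
    const std::size_t start = cursor.fetch_add(count, std::memory_order_relaxed);
    for (std::size_t i = 0; i < worker_count && victims.size() < count; ++i) {
        const std::size_t candidate = (start + i) % worker_count;
        if (candidate != requester_id) {
            victims.push_back(candidate);
        }
    }
    return victims;
}
```

Unsigned overflow of the cursor is well defined and only shifts the starting point, so no reset is needed.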

◆ set_config()

| void kcenon::thread::numa_work_stealer::set_config (const enhanced_work_stealing_config &config) |

Update the configuration.

- Parameters

- config: New configuration to use

- Note

- Changes take effect immediately. Be cautious when changing configuration while workers are actively stealing.

Definition at line 258 of file numa_work_stealer.cpp.

References affinity_tracker_, backoff_calculator_, kcenon::thread::enhanced_work_stealing_config::backoff_multiplier, kcenon::thread::enhanced_work_stealing_config::backoff_strategy, config_, kcenon::thread::enhanced_work_stealing_config::initial_backoff, kcenon::thread::steal_backoff_config::initial_backoff, kcenon::thread::enhanced_work_stealing_config::locality_history_size, kcenon::thread::enhanced_work_stealing_config::max_backoff, kcenon::thread::steal_backoff_config::max_backoff, kcenon::thread::steal_backoff_config::multiplier, kcenon::thread::steal_backoff_config::strategy, kcenon::thread::enhanced_work_stealing_config::track_locality, and worker_count_.

◆ steal_batch_for()

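| auto kcenon::thread::numa_work_stealer::steal_batch_for (std::size_t worker_id, std::size_t max_count) -> std::vector< job * > | nodiscard |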

Attempt to steal multiple jobs for a worker.

- Parameters

- worker_id: The worker requesting work

- max_count: Maximum number of jobs to steal

- Returns

- Vector of stolen job pointers (may be empty or smaller than max_count)

Batch stealing is more efficient when multiple jobs need to be transferred. The actual batch size is determined by configuration and victim queue depth.

Thread Safety:

- Safe to call concurrently from multiple workers

- Statistics are updated atomically

Definition at line 129 of file numa_work_stealer.cpp.

References kcenon::thread::delay.

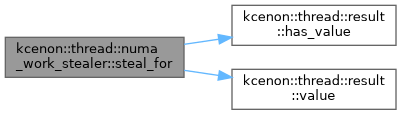

◆ steal_for()

| auto kcenon::thread::numa_work_stealer::steal_for (std::size_t worker_id) -> job * | nodiscard |

Attempt to steal work for a worker.

- Parameters

- worker_id: The worker requesting work

- Returns

- Stolen job pointer, or nullptr if no work available

This method selects victims based on the configured policy and attempts to steal a single job. NUMA awareness and affinity are considered when selecting victims.

Thread Safety:

- Safe to call concurrently from multiple workers

- Statistics are updated atomically

Definition at line 41 of file numa_work_stealer.cpp.

References kcenon::thread::delay, kcenon::thread::result< T >::has_value(), and kcenon::thread::result< T >::value().
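The overall shape of a single steal, trying each selected victim in order and returning the first job found, can be sketched as follows. This is a single-threaded illustration only: `demo_deque` (a plain `std::deque`) stands in for `lockfree::work_stealing_deque<job*>`, `try_steal_from` is a hypothetical helper, and backoff and statistics are omitted.

```cpp
#include <cassert>
#include <cstddef>
#include <deque>
#include <functional>
#include <vector>

struct job { int id; };               // stand-in for the library's job type
using demo_deque = std::deque<job*>;  // NOT lock-free; illustration only

// Hypothetical sketch: visit victims in priority order and take one job
// from the first victim that has any.
job* try_steal_from(const std::vector<std::size_t>& victims,
                    const std::function<demo_deque*(std::size_t)>& deque_of) {
    for (std::size_t victim : victims) {
        demo_deque* dq = deque_of(victim);
        if (dq == nullptr || dq->empty()) {
            continue;  // unavailable or empty: move on to the next victim
        }
        // Work-stealing deques conventionally let thieves take from the end
        // opposite to the one the owner pushes and pops.
        job* stolen = dq->front();
        dq->pop_front();
        return stolen;
    }
    return nullptr;  // no work available anywhere
}
```

Returning `nullptr` when every victim is empty matches the documented contract of `steal_for`.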

◆ workers_on_same_node()

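| auto kcenon::thread::numa_work_stealer::workers_on_same_node (std::size_t worker_a, std::size_t worker_b) const -> bool | nodiscard, private |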

Check if two workers are on the same NUMA node.

Definition at line 596 of file numa_work_stealer.cpp.

Member Data Documentation

◆ affinity_tracker_

| std::unique_ptr< work_affinity_tracker > kcenon::thread::numa_work_stealer::affinity_tracker_ | private |

Definition at line 282 of file numa_work_stealer.h.

Referenced by record_steal(), and set_config().

◆ backoff_calculator_

| std::unique_ptr< backoff_calculator > kcenon::thread::numa_work_stealer::backoff_calculator_ | private |

Definition at line 283 of file numa_work_stealer.h.

Referenced by numa_work_stealer(), and set_config().

◆ config_

| enhanced_work_stealing_config kcenon::thread::numa_work_stealer::config_ | private |

Definition at line 279 of file numa_work_stealer.h.

Referenced by get_config(), numa_work_stealer(), record_steal(), and set_config().

◆ cpu_accessor_

| cpu_accessor_fn kcenon::thread::numa_work_stealer::cpu_accessor_ | private |

Definition at line 278 of file numa_work_stealer.h.

◆ deque_accessor_

|

private |

Definition at line 277 of file numa_work_stealer.h.

◆ rng_

| std::mt19937_64 kcenon::thread::numa_work_stealer::rng_ | mutable, private |

Definition at line 286 of file numa_work_stealer.h.

◆ round_robin_index_

| std::atomic< std::size_t > kcenon::thread::numa_work_stealer::round_robin_index_ {0} | mutable, private |

Definition at line 289 of file numa_work_stealer.h.

◆ stats_

| work_stealing_stats kcenon::thread::numa_work_stealer::stats_ | private |

Definition at line 281 of file numa_work_stealer.h.

Referenced by get_stats(), get_stats_snapshot(), and reset_stats().

◆ topology_

| numa_topology kcenon::thread::numa_work_stealer::topology_ | private |

Definition at line 280 of file numa_work_stealer.h.

Referenced by get_topology().

◆ worker_count_

| std::size_t kcenon::thread::numa_work_stealer::worker_count_ | private |

Definition at line 276 of file numa_work_stealer.h.

Referenced by numa_work_stealer(), and set_config().

The documentation for this class was generated from the following files:

- include/kcenon/thread/stealing/numa_work_stealer.h

- src/stealing/numa_work_stealer.cpp