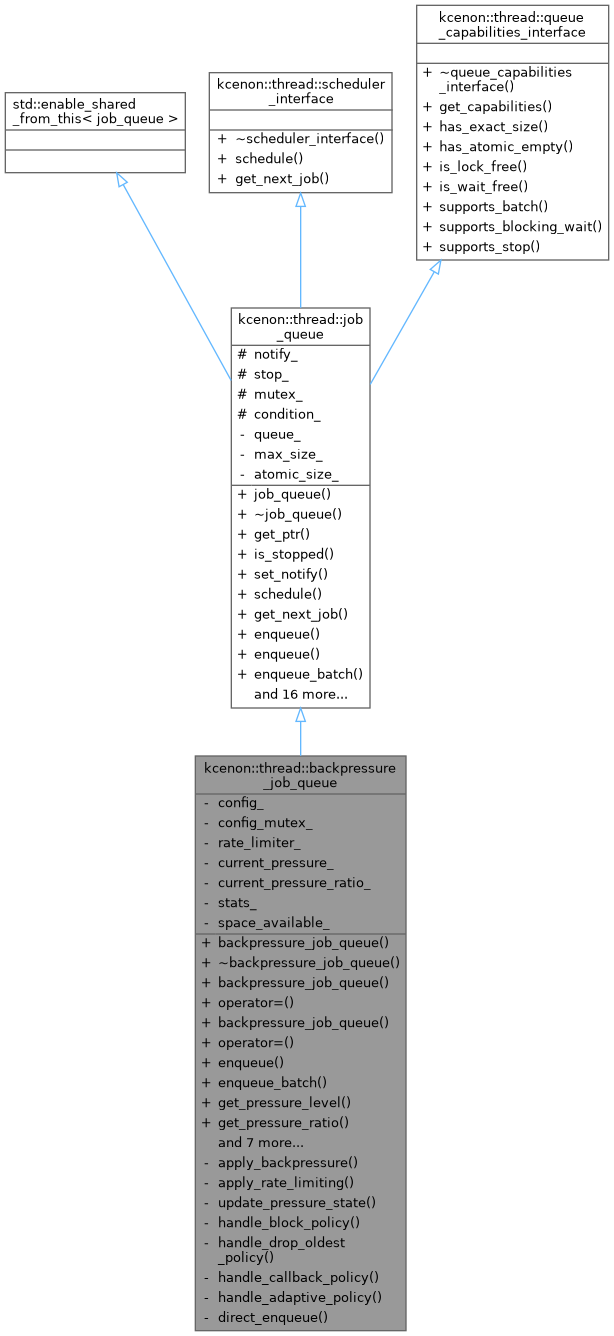

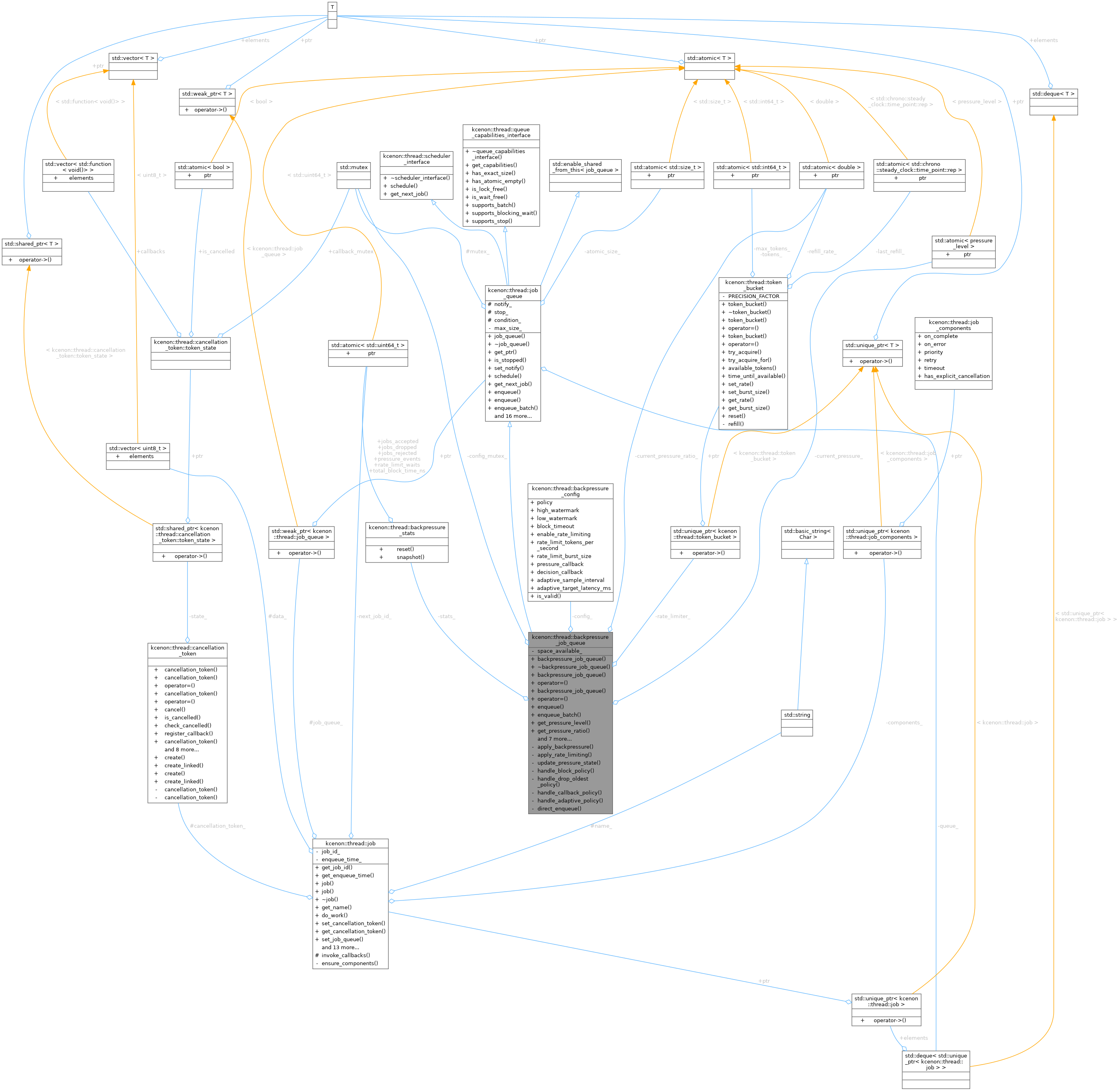

A job queue with comprehensive backpressure mechanisms.

#include <backpressure_job_queue.h>

Public Member Functions | |

| backpressure_job_queue (std::size_t max_size, backpressure_config config=backpressure_config{}) | |

| Constructs a backpressure-aware job queue. | |

| ~backpressure_job_queue () override | |

| Virtual destructor. | |

| backpressure_job_queue (const backpressure_job_queue &)=delete | |

| backpressure_job_queue & | operator= (const backpressure_job_queue &)=delete |

| backpressure_job_queue (backpressure_job_queue &&)=delete | |

| backpressure_job_queue & | operator= (backpressure_job_queue &&)=delete |

| auto | enqueue (std::unique_ptr< job > &&value) -> common::VoidResult override |

| Enqueues a job with backpressure handling. | |

| auto | enqueue_batch (std::vector< std::unique_ptr< job > > &&jobs) -> common::VoidResult override |

| Enqueues a batch of jobs with backpressure handling. | |

| auto | get_pressure_level () const -> pressure_level |

| Returns the current pressure level. | |

| auto | get_pressure_ratio () const -> double |

| Returns the current pressure as a ratio. | |

| auto | set_backpressure_config (backpressure_config config) -> void |

| Sets the backpressure configuration. | |

| auto | get_backpressure_config () const -> const backpressure_config & |

| Returns the current backpressure configuration. | |

| auto | is_rate_limited () const -> bool |

| Checks if rate limiting is causing delays. | |

| auto | get_available_tokens () const -> std::size_t |

| Returns available rate limit tokens. | |

| auto | get_backpressure_stats () const -> backpressure_stats_snapshot |

| Returns backpressure statistics snapshot. | |

| auto | reset_stats () -> void |

| Resets backpressure statistics. | |

| auto | to_string () const -> std::string override |

| Returns string representation including backpressure state. | |

Public Member Functions inherited from kcenon::thread::job_queue | |

| job_queue (std::optional< std::size_t > max_size=std::nullopt) | |

Constructs a new, empty job_queue. | |

| virtual | ~job_queue (void) |

Virtual destructor. Cleans up resources used by the job_queue. | |

| auto | get_ptr (void) -> std::shared_ptr< job_queue > |

Obtains a std::shared_ptr that points to this queue instance. | |

| auto | is_stopped () const -> bool |

| Checks if the queue is in a "stopped" state. | |

| auto | set_notify (bool notify) -> void |

| Sets the 'notify' flag for this queue. | |

| auto | schedule (std::unique_ptr< job > &&value) -> common::VoidResult override |

| scheduler_interface implementation: schedule a job | |

| auto | get_next_job () -> common::Result< std::unique_ptr< job > > override |

| scheduler_interface implementation: get next job | |

| template<typename JobType , typename = std::enable_if_t<std::is_base_of_v<job, JobType>>> | |

| auto | enqueue (std::unique_ptr< JobType > &&value) -> common::VoidResult |

| Type-safe enqueue for job subclasses. | |

| virtual auto | dequeue (void) -> common::Result< std::unique_ptr< job > > |

| Dequeues a job from the queue in FIFO order (blocking operation). | |

| virtual auto | try_dequeue (void) -> common::Result< std::unique_ptr< job > > |

| Attempts to dequeue a job from the queue without blocking. | |

| virtual auto | dequeue_batch (void) -> std::deque< std::unique_ptr< job > > |

| Dequeues all remaining jobs from the queue without processing them. | |

| virtual auto | dequeue_batch_limited (std::size_t max_count) -> std::deque< std::unique_ptr< job > > |

| Dequeues up to N jobs in a single operation (micro-batching). | |

| virtual auto | clear (void) -> void |

| Removes all jobs currently in the queue without processing them. | |

| auto | empty (void) const -> bool |

| Checks if the queue is currently empty. | |

| auto | size (void) const -> std::size_t |

| Returns the current number of jobs in the queue. | |

| auto | get_memory_stats () const -> memory_stats |

| Get memory footprint statistics for debugging and monitoring. | |

| auto | stop (void) -> void |

| Signals the queue to stop waiting for new jobs (e.g., during shutdown). | |

| auto | get_capabilities () const -> queue_capabilities override |

| Returns capabilities of this job_queue implementation. | |

| auto | is_bounded () const -> bool |

| Check if queue has a size limit. | |

| auto | get_max_size () const -> std::optional< std::size_t > |

| Get the maximum queue size. | |

| auto | set_max_size (std::optional< std::size_t > max_size) -> void |

| Set maximum queue size. | |

| auto | is_full () const -> bool |

| Check if queue is at capacity. | |

| virtual auto | inspect_pending_jobs (std::size_t limit=100) const -> std::vector< diagnostics::job_info > |

| Inspects pending jobs in the queue without removing them. | |

Public Member Functions inherited from kcenon::thread::scheduler_interface | |

| virtual | ~scheduler_interface ()=default |

Public Member Functions inherited from kcenon::thread::queue_capabilities_interface | |

| virtual | ~queue_capabilities_interface ()=default |

| auto | has_exact_size () const -> bool |

| Check if size() returns exact values. | |

| auto | has_atomic_empty () const -> bool |

| Check if empty() check is atomic. | |

| auto | is_lock_free () const -> bool |

| Check if this is a lock-free implementation. | |

| auto | is_wait_free () const -> bool |

| Check if this is a wait-free implementation. | |

| auto | supports_batch () const -> bool |

| Check if batch operations are supported. | |

| auto | supports_blocking_wait () const -> bool |

| Check if blocking wait is supported. | |

| auto | supports_stop () const -> bool |

| Check if stop signaling is supported. | |

Private Member Functions | |

| auto | apply_backpressure (std::unique_ptr< job > &&value) -> common::VoidResult |

| Applies backpressure logic for a single job. | |

| auto | apply_rate_limiting () -> bool |

| Applies rate limiting check. | |

| auto | update_pressure_state () -> void |

| Updates pressure level and triggers callbacks if changed. | |

| auto | handle_block_policy (std::unique_ptr< job > &&value) -> common::VoidResult |

| Handles blocking policy with timeout. | |

| auto | handle_drop_oldest_policy (std::unique_ptr< job > &&value) -> common::VoidResult |

| Handles drop_oldest policy. | |

| auto | handle_callback_policy (std::unique_ptr< job > &&value) -> common::VoidResult |

| Handles callback policy. | |

| auto | handle_adaptive_policy (std::unique_ptr< job > &&value) -> common::VoidResult |

| Handles adaptive policy. | |

| auto | direct_enqueue (std::unique_ptr< job > &&value) -> common::VoidResult |

| Directly enqueues without backpressure (internal use). | |

Private Attributes | |

| backpressure_config | config_ |

| Backpressure configuration. | |

| std::mutex | config_mutex_ |

| Mutex for configuration access. | |

| std::unique_ptr< token_bucket > | rate_limiter_ |

| Token bucket rate limiter (nullptr if disabled). | |

| std::atomic< pressure_level > | current_pressure_ {pressure_level::none} |

| Current pressure level (atomic for lock-free reads). | |

| std::atomic< double > | current_pressure_ratio_ {0.0} |

| Current pressure ratio (atomic for lock-free reads). | |

| backpressure_stats | stats_ |

| Backpressure statistics. | |

| std::condition_variable | space_available_ |

| Condition variable for blocking policy wait. | |

Additional Inherited Members | |

Protected Attributes inherited from kcenon::thread::job_queue | |

| std::atomic_bool | notify_ |

If true, threads waiting for new jobs are notified when a new job is enqueued. If false, enqueuing does not automatically trigger a notification. | |

| std::atomic_bool | stop_ |

| Indicates whether the queue has been signaled to stop. | |

| std::mutex | mutex_ |

Mutex to protect access to the underlying queue_ container and related state. | |

| std::condition_variable | condition_ |

| Condition variable used to signal worker threads. | |

Detailed Description

A job queue with comprehensive backpressure mechanisms.

Extends job_queue with backpressure handling to prevent resource exhaustion and provide graceful degradation under high load.

Features

- Multiple Policies: Block, drop_oldest, drop_newest, callback, adaptive

- Watermark-Based Pressure: Graduated response based on queue depth

- Rate Limiting: Token bucket algorithm for sustained throughput control

- Adaptive Control: Auto-adjusts based on latency targets

- Statistics: Comprehensive metrics for monitoring

Pressure Response

Thread Safety

All methods are thread-safe. The queue inherits mutex-based synchronization from job_queue.

Usage Example

- See also

- job_queue Base queue class

- backpressure_config Configuration options

- token_bucket Rate limiting implementation

Definition at line 87 of file backpressure_job_queue.h.

Constructor & Destructor Documentation

◆ backpressure_job_queue() [1/3]

|

explicit |

Constructs a backpressure-aware job queue.

- Parameters

-

max_size Maximum queue capacity.

config Backpressure configuration.

The queue is immediately ready for use after construction. If rate limiting is enabled, the token bucket is initialized with the specified parameters.

Implementation details:

- Calls base job_queue constructor with max_size

- Stores configuration

- Creates token bucket if rate limiting is enabled

- Initializes pressure state to none

Definition at line 34 of file backpressure_job_queue.cpp.

References config_, and kcenon::thread::backpressure_config::enable_rate_limiting.

◆ ~backpressure_job_queue()

|

override default |

Virtual destructor.

Destructor.

◆ backpressure_job_queue() [2/3]

|

delete |

◆ backpressure_job_queue() [3/3]

|

delete |

Member Function Documentation

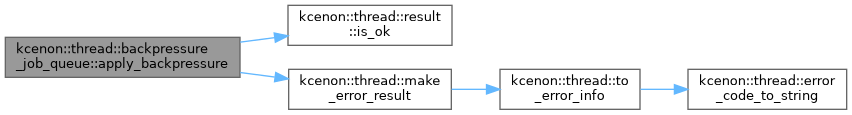

◆ apply_backpressure()

|

nodiscard private |

Applies backpressure logic for a single job.

Core backpressure implementation for single job.

- Parameters

-

value The job to potentially enqueue.

- Returns

- VoidResult indicating success or error.

This is the core backpressure implementation that handles all policy logic, rate limiting, and statistics tracking.

Implementation details:

- Applies rate limiting first

- Checks capacity before attempting enqueue

- Routes to policy handler if queue is full

- Updates statistics

Definition at line 252 of file backpressure_job_queue.cpp.

References kcenon::thread::adaptive, kcenon::thread::block, kcenon::thread::callback, kcenon::thread::drop_newest, kcenon::thread::drop_oldest, kcenon::thread::result< T >::is_ok(), kcenon::thread::make_error_result(), kcenon::thread::operation_timeout, and kcenon::thread::queue_full.

◆ apply_rate_limiting()

|

nodiscard private |

Applies rate limiting check.

Applies rate limiting using token bucket.

- Returns

- true if the rate limit allows the operation to proceed; false if the caller is rate limited and should wait.
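The token bucket check described above can be illustrated with a minimal, single-threaded sketch. The class name `simple_token_bucket`, its constructor parameters, and the explicit `refill(elapsed_seconds)` call are hypothetical and chosen for illustration only; the library's own `token_bucket` API is not shown on this page and may differ.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>

// Hypothetical, single-threaded token bucket (not the library's token_bucket).
class simple_token_bucket {
public:
    simple_token_bucket(double tokens_per_second, std::size_t burst_size)
        : rate_(tokens_per_second),
          capacity_(static_cast<double>(burst_size)),
          tokens_(static_cast<double>(burst_size)) {
        assert(tokens_per_second > 0.0 && burst_size > 0);
    }

    // Adds tokens for the elapsed time, capped at the burst size.
    void refill(double elapsed_seconds) {
        tokens_ = std::min(capacity_, tokens_ + rate_ * elapsed_seconds);
    }

    // Consumes one token and returns true if one is available;
    // returns false if the caller is rate limited.
    bool try_acquire() {
        if (tokens_ < 1.0) {
            return false;
        }
        tokens_ -= 1.0;
        return true;
    }

    // Whole tokens currently available.
    std::size_t available() const { return static_cast<std::size_t>(tokens_); }

private:
    double rate_;      // tokens added per second
    double capacity_;  // maximum stored tokens (burst size)
    double tokens_;    // current token count
};
```

Passing elapsed time explicitly keeps the sketch deterministic; a production limiter would typically read a monotonic clock and guard its state for concurrent callers.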

Definition at line 315 of file backpressure_job_queue.cpp.

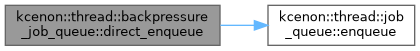

◆ direct_enqueue()

|

nodiscard private |

Directly enqueues without backpressure (internal use).

Directly enqueues to base class without backpressure.

- Parameters

-

value The job to enqueue.

- Returns

- VoidResult from base class enqueue.

Definition at line 598 of file backpressure_job_queue.cpp.

References kcenon::thread::job_queue::enqueue().

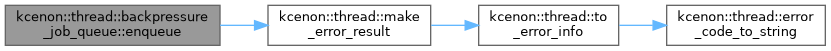

◆ enqueue()

|

nodiscard override virtual |

Enqueues a job with backpressure handling.

- Parameters

-

value The job to enqueue.

- Returns

- VoidResult indicating success or error.

Depending on the configured policy:

- block: Waits up to block_timeout for space

- drop_oldest: Removes oldest job if full

- drop_newest: Rejects new job if full

- callback: Calls decision_callback for custom handling

- adaptive: Adjusts behavior based on current conditions

Rate limiting (if enabled) is applied before policy-based handling.

Error Codes:

- queue_full: Queue at capacity and job rejected

- queue_stopped: Queue has been stopped

- operation_timeout: Block timeout expired

- invalid_argument: Null job provided

Implementation details:

- Early validation (stopped, null check)

- Apply rate limiting if enabled

- Route to policy-specific handler based on config

- Update pressure state and statistics
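The ordering of these checks can be sketched as a small decision function. Everything in this block is a hypothetical model: `enqueue_status`, `queue_state`, and `precheck_enqueue` are illustrative names, and the `rate_limited` and `needs_policy` outcomes are assumptions, since this page does not state which error the real implementation returns when a token is unavailable.

```cpp
#include <cassert>
#include <cstddef>

// Hypothetical outcome codes; only invalid_argument and queue_stopped
// correspond to error names listed on this page.
enum class enqueue_status {
    ok,
    invalid_argument,
    queue_stopped,
    rate_limited,  // assumption: outcome when no token is available
    needs_policy   // assumption: queue is full, defer to the policy handler
};

// Hypothetical snapshot of the state that the checks look at.
struct queue_state {
    bool stopped;
    bool rate_limiting_enabled;
    bool token_available;
    std::size_t depth;
    std::size_t max_size;
};

// Applies the checks in the order listed above: early validation,
// then rate limiting, then the capacity check that selects a policy handler.
inline enqueue_status precheck_enqueue(const void* job, const queue_state& s) {
    if (s.stopped) {
        return enqueue_status::queue_stopped;
    }
    if (job == nullptr) {
        return enqueue_status::invalid_argument;
    }
    if (s.rate_limiting_enabled && !s.token_available) {
        return enqueue_status::rate_limited;
    }
    if (s.depth >= s.max_size) {
        return enqueue_status::needs_policy;
    }
    return enqueue_status::ok;
}
```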

Reimplemented from kcenon::thread::job_queue.

Definition at line 68 of file backpressure_job_queue.cpp.

References kcenon::thread::invalid_argument, kcenon::thread::make_error_result(), and kcenon::thread::queue_stopped.

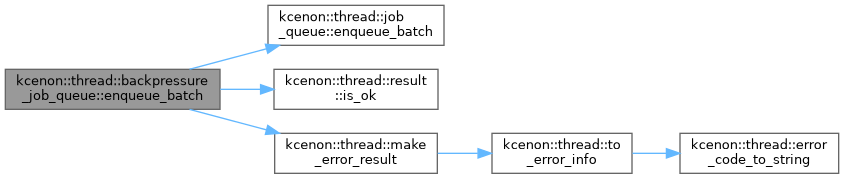

◆ enqueue_batch()

|

nodiscard override virtual |

Enqueues a batch of jobs with backpressure handling.

- Parameters

-

jobs Vector of jobs to enqueue.

- Returns

- VoidResult indicating success or error.

Batch enqueue respects backpressure settings. If the batch would exceed capacity, behavior depends on policy:

- block/drop_newest: Entire batch rejected

- drop_oldest: Oldest jobs dropped to make room

- callback: Called once for the batch decision

- adaptive: May partially accept (future enhancement)

Implementation details:

- Validates batch (stopped, empty, null jobs)

- Checks if batch fits within capacity constraints

- For drop_oldest: drops enough jobs to fit batch

- For other policies: rejects the entire batch if it does not fit
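A minimal sketch of the capacity check for batches, assuming jobs are modelled as plain `int` values. The names `batch_policy` and `enqueue_batch_sketch` are hypothetical, and treating an empty batch or a batch larger than `max_size` as a rejection is an assumption rather than documented behavior.

```cpp
#include <cassert>
#include <cstddef>
#include <deque>
#include <vector>

// Hypothetical two-way policy: either reject a batch that does not fit,
// or drop the oldest queued jobs to make room for it.
enum class batch_policy { reject_if_full, drop_oldest };

// Returns true if the whole batch was enqueued, false if it was rejected.
inline bool enqueue_batch_sketch(std::deque<int>& queue, std::size_t max_size,
                                 const std::vector<int>& batch, batch_policy policy) {
    assert(queue.size() <= max_size);
    // Assumption: empty batches and batches larger than the queue are rejected.
    if (batch.empty() || batch.size() > max_size) {
        return false;
    }
    const std::size_t free_slots = max_size - queue.size();
    if (batch.size() > free_slots) {
        if (policy != batch_policy::drop_oldest) {
            return false;  // entire batch rejected
        }
        // Drop just enough of the oldest jobs for the batch to fit.
        const std::size_t to_drop = batch.size() - free_slots;
        for (std::size_t i = 0; i < to_drop; ++i) {
            queue.pop_front();
        }
    }
    queue.insert(queue.end(), batch.begin(), batch.end());
    return true;
}
```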

Reimplemented from kcenon::thread::job_queue.

Definition at line 97 of file backpressure_job_queue.cpp.

References kcenon::thread::block, kcenon::thread::drop_oldest, kcenon::thread::job_queue::enqueue_batch(), kcenon::thread::invalid_argument, kcenon::thread::result< T >::is_ok(), kcenon::thread::make_error_result(), kcenon::thread::operation_timeout, kcenon::thread::queue_full, and kcenon::thread::queue_stopped.

◆ get_available_tokens()

|

nodiscard |

Returns available rate limit tokens.

- Returns

- Available tokens, or max if rate limiting is disabled.

Definition at line 684 of file backpressure_job_queue.cpp.

References config_, kcenon::thread::backpressure_config::enable_rate_limiting, and rate_limiter_.

◆ get_backpressure_config()

|

nodiscard |

Returns the current backpressure configuration.

Returns current configuration.

- Returns

- Reference to current configuration.

Definition at line 662 of file backpressure_job_queue.cpp.

References config_.

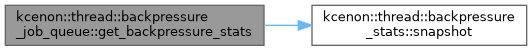

◆ get_backpressure_stats()

|

nodiscard |

Returns backpressure statistics snapshot.

- Returns

- Snapshot of current statistics.

Returns a snapshot of the current statistics. For ongoing monitoring, call this method periodically.

Definition at line 696 of file backpressure_job_queue.cpp.

References kcenon::thread::backpressure_stats::snapshot(), and stats_.

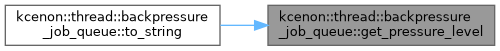

◆ get_pressure_level()

|

nodiscard |

Returns the current pressure level.

Returns current pressure level.

- Returns

- Current pressure_level enum value.

Calculated based on current queue depth relative to watermarks:

- none: depth < low_watermark * max_size

- low: depth < high_watermark * max_size

- high: depth < max_size

- critical: depth >= max_size
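The thresholds above can be written out as a small classification function. This is an illustrative sketch: the enum below is a stand-in for the library's `pressure_level`, `classify_pressure` is a hypothetical helper, and the watermarks are assumed to be fractions of `max_size` with `low_watermark <= high_watermark`.

```cpp
#include <cassert>
#include <cstddef>

// Stand-in for the library's pressure_level enum.
enum class pressure_level { none, low, high, critical };

// Maps a queue depth to a pressure level using fractional watermarks,
// following the thresholds listed above.
inline pressure_level classify_pressure(std::size_t depth, std::size_t max_size,
                                        double low_watermark, double high_watermark) {
    assert(max_size > 0);
    assert(low_watermark <= high_watermark);
    if (depth >= max_size) {
        return pressure_level::critical;
    }
    const double d = static_cast<double>(depth);
    const double capacity = static_cast<double>(max_size);
    if (d < low_watermark * capacity) {
        return pressure_level::none;
    }
    if (d < high_watermark * capacity) {
        return pressure_level::low;
    }
    return pressure_level::high;
}
```

For example, with `max_size = 100`, `low_watermark = 0.5`, and `high_watermark = 0.8`, a depth of 49 maps to `none`, 79 to `low`, 90 to `high`, and 100 to `critical`.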

Definition at line 607 of file backpressure_job_queue.cpp.

References current_pressure_.

Referenced by to_string().

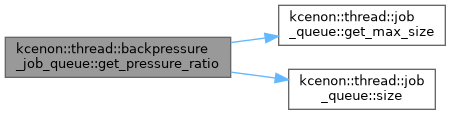

◆ get_pressure_ratio()

|

nodiscard |

Returns the current pressure as a ratio.

Returns current pressure ratio.

- Returns

- Ratio of current depth to max_size (0.0 to 1.0+).

The ratio can exceed 1.0 if the queue depth ever exceeds max_size. This should not happen when backpressure is working correctly, but the case is handled for robustness.

Definition at line 615 of file backpressure_job_queue.cpp.

References kcenon::thread::job_queue::get_max_size(), and kcenon::thread::job_queue::size().

Referenced by to_string().

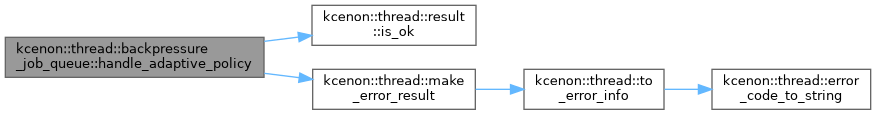

◆ handle_adaptive_policy()

|

nodiscard private |

Handles adaptive policy.

- Parameters

-

value The job to potentially enqueue.

- Returns

- VoidResult based on adaptive decision.

Implementation details:

- Below high_watermark: accept normally

- Above high_watermark: probabilistic acceptance based on pressure

- At capacity: block briefly then reject
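One way to picture the probabilistic acceptance step is a linear ramp between the high watermark and full capacity. The ramp shape is an assumption, since this page does not state the actual formula, and both function names are hypothetical. The sketch also omits the brief blocking wait at capacity and simply reports a zero acceptance probability there.

```cpp
#include <cassert>
#include <cstddef>

// Acceptance probability for a new job at the given depth.
// Assumption: 1.0 below the high watermark, falling linearly to 0.0 at capacity.
inline double adaptive_accept_probability(std::size_t depth, std::size_t max_size,
                                          double high_watermark) {
    assert(max_size > 0);
    assert(high_watermark >= 0.0 && high_watermark < 1.0);
    const double ratio = static_cast<double>(depth) / static_cast<double>(max_size);
    if (ratio < high_watermark) {
        return 1.0;  // accept normally
    }
    if (ratio >= 1.0) {
        return 0.0;  // at capacity
    }
    return (1.0 - ratio) / (1.0 - high_watermark);
}

// random_unit is a uniform sample in [0, 1), passed in by the caller so that
// the decision stays deterministic and easy to test.
inline bool adaptive_should_accept(std::size_t depth, std::size_t max_size,
                                   double high_watermark, double random_unit) {
    return random_unit < adaptive_accept_probability(depth, max_size, high_watermark);
}
```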

Definition at line 525 of file backpressure_job_queue.cpp.

References kcenon::thread::result< T >::is_ok(), kcenon::thread::make_error_result(), and kcenon::thread::queue_full.

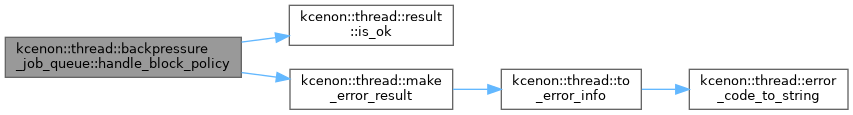

◆ handle_block_policy()

|

nodiscard private |

Handles blocking policy with timeout.

- Parameters

-

value The job to enqueue.

- Returns

- VoidResult indicating success or timeout.
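A blocking enqueue with a timeout is commonly built on `std::condition_variable::wait_for`. The sketch below is a self-contained model with `int` jobs; `blocking_bounded_queue` and its methods are hypothetical names, and returning `false` on timeout stands in for the `operation_timeout` error.

```cpp
#include <cassert>
#include <chrono>
#include <condition_variable>
#include <cstddef>
#include <deque>
#include <mutex>

// Hypothetical bounded queue illustrating the block policy.
class blocking_bounded_queue {
public:
    explicit blocking_bounded_queue(std::size_t max_size) : max_size_(max_size) {
        assert(max_size > 0);
    }

    // Waits up to `timeout` for free space; returns false if the wait timed out.
    bool enqueue(int job, std::chrono::milliseconds timeout) {
        std::unique_lock<std::mutex> lock(mutex_);
        const bool has_space = space_available_.wait_for(
            lock, timeout, [this] { return queue_.size() < max_size_; });
        if (!has_space) {
            return false;
        }
        queue_.push_back(job);
        return true;
    }

    // Removes the oldest job, if any, and wakes one blocked producer.
    bool try_dequeue(int& out) {
        {
            std::lock_guard<std::mutex> lock(mutex_);
            if (queue_.empty()) {
                return false;
            }
            out = queue_.front();
            queue_.pop_front();
        }
        space_available_.notify_one();
        return true;
    }

private:
    std::size_t max_size_;
    std::mutex mutex_;
    std::condition_variable space_available_;
    std::deque<int> queue_;
};
```

The predicate overload of `wait_for` rechecks the condition after every wakeup, so spurious wakeups do not cause an early enqueue.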

Definition at line 405 of file backpressure_job_queue.cpp.

References kcenon::thread::result< T >::is_ok(), kcenon::thread::make_error_result(), and kcenon::thread::operation_timeout.

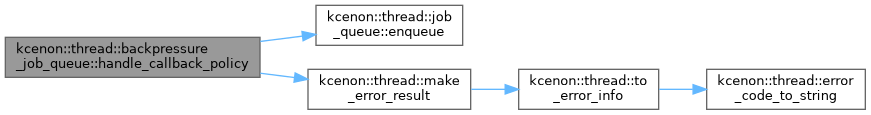

◆ handle_callback_policy()

|

nodiscard private |

Handles callback policy.

- Parameters

-

value The job to potentially enqueue.

- Returns

- VoidResult based on callback decision.

Definition at line 477 of file backpressure_job_queue.cpp.

References kcenon::thread::accept, kcenon::thread::delay, kcenon::thread::drop_and_accept, kcenon::thread::job_queue::enqueue(), kcenon::thread::make_error_result(), kcenon::thread::queue_busy, kcenon::thread::queue_full, and kcenon::thread::reject.

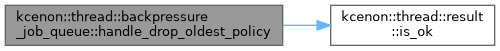

◆ handle_drop_oldest_policy()

|

nodiscard private |

Handles drop_oldest policy.

- Parameters

-

value The job to enqueue after dropping oldest.

- Returns

- VoidResult (always succeeds unless stopped).
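The drop_oldest behavior can be sketched on a plain `std::deque`, with jobs modelled as `int` values. `enqueue_drop_oldest` is a hypothetical helper; the stopped-queue check and statistics updates mentioned elsewhere on this page are left out.

```cpp
#include <cassert>
#include <cstddef>
#include <deque>

// Enqueues `job`, evicting the oldest entry first if the queue is full.
// Returns true if an older job had to be dropped to make room.
inline bool enqueue_drop_oldest(std::deque<int>& queue, std::size_t max_size, int job) {
    assert(max_size > 0);
    bool dropped = false;
    if (queue.size() >= max_size) {
        queue.pop_front();  // the oldest job sits at the front (FIFO order)
        dropped = true;
    }
    queue.push_back(job);
    return dropped;
}
```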

Definition at line 457 of file backpressure_job_queue.cpp.

References kcenon::thread::result< T >::is_ok().

◆ is_rate_limited()

|

nodiscard |

Checks if rate limiting is causing delays.

Checks if rate limiting is active.

- Returns

- true if rate limiter is constraining throughput.

Returns false if rate limiting is disabled.

Definition at line 670 of file backpressure_job_queue.cpp.

References config_, kcenon::thread::backpressure_config::enable_rate_limiting, kcenon::thread::backpressure_config::rate_limit_burst_size, and rate_limiter_.

◆ operator=() [1/2]

|

delete |

◆ operator=() [2/2]

|

delete |

◆ reset_stats()

| auto kcenon::thread::backpressure_job_queue::reset_stats | ( | ) | -> void |

Resets backpressure statistics.

Resets statistics.

Definition at line 704 of file backpressure_job_queue.cpp.

◆ set_backpressure_config()

| auto kcenon::thread::backpressure_job_queue::set_backpressure_config | ( | backpressure_config | config | ) | -> void |

Sets the backpressure configuration.

Sets backpressure configuration.

- Parameters

-

config New configuration to apply.

Updates take effect immediately. If rate limiting is being enabled or its parameters change, the token bucket is recreated or updated.

Definition at line 629 of file backpressure_job_queue.cpp.

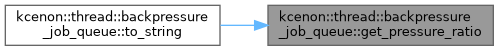

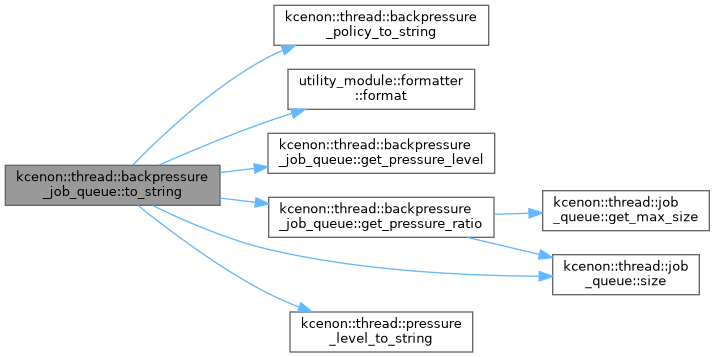

◆ to_string()

|

nodiscard override virtual |

Returns string representation including backpressure state.

Returns string representation.

- Returns

- Formatted string with queue and backpressure information.

Reimplemented from kcenon::thread::job_queue.

Definition at line 712 of file backpressure_job_queue.cpp.

References kcenon::thread::backpressure_policy_to_string(), config_, utility_module::formatter::format(), get_pressure_level(), get_pressure_ratio(), kcenon::thread::backpressure_config::policy, kcenon::thread::pressure_level_to_string(), and kcenon::thread::job_queue::size().

◆ update_pressure_state()

|

private |

Updates pressure level and triggers callbacks if changed.

Updates pressure state and triggers callbacks.

Definition at line 345 of file backpressure_job_queue.cpp.

References kcenon::thread::critical, kcenon::thread::high, kcenon::thread::low, and kcenon::thread::none.

Member Data Documentation

◆ config_

|

private |

Backpressure configuration.

Definition at line 302 of file backpressure_job_queue.h.

Referenced by backpressure_job_queue(), get_available_tokens(), get_backpressure_config(), is_rate_limited(), and to_string().

◆ config_mutex_

|

mutable private |

Mutex for configuration access.

Definition at line 307 of file backpressure_job_queue.h.

◆ current_pressure_

|

private |

Current pressure level (atomic for lock-free reads).

Definition at line 317 of file backpressure_job_queue.h.

Referenced by get_pressure_level().

◆ current_pressure_ratio_

|

private |

Current pressure ratio (atomic for lock-free reads).

Definition at line 322 of file backpressure_job_queue.h.

◆ rate_limiter_

|

private |

Token bucket rate limiter (nullptr if disabled).

Definition at line 312 of file backpressure_job_queue.h.

Referenced by get_available_tokens(), and is_rate_limited().

◆ space_available_

|

private |

Condition variable for blocking policy wait.

Definition at line 332 of file backpressure_job_queue.h.

◆ stats_

|

private |

Backpressure statistics.

Definition at line 327 of file backpressure_job_queue.h.

Referenced by get_backpressure_stats().

The documentation for this class was generated from the following files:

- include/kcenon/thread/core/backpressure_job_queue.h

- src/core/backpressure_job_queue.cpp