Public Member Functions | |

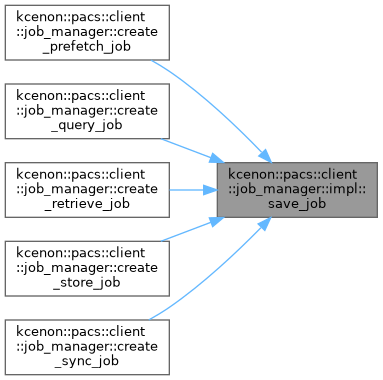

| void | save_job (const job_record &job) |

| std::optional< job_record > | get_job_from_cache (std::string_view job_id) const |

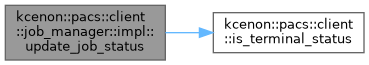

| void | update_job_status (const std::string &job_id, job_status status, const std::string &error_msg="", const std::string &error_details="") |

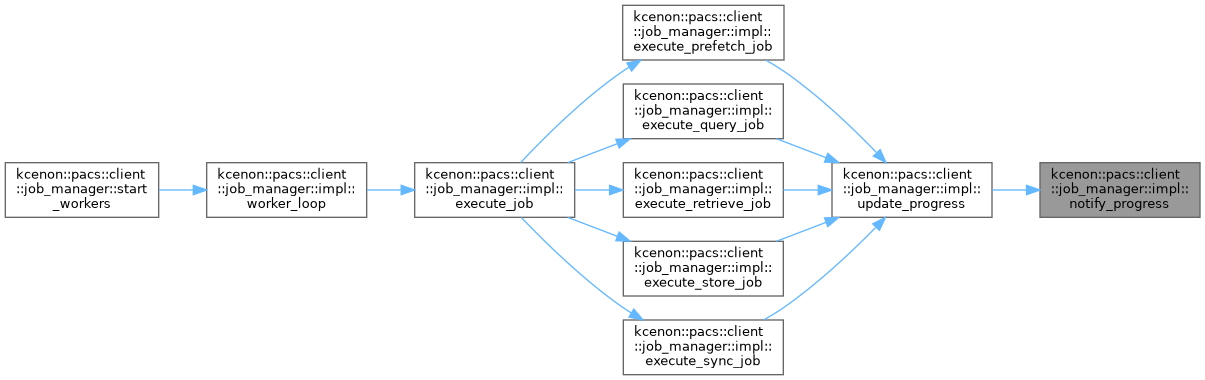

| void | notify_progress (const std::string &job_id, const job_progress &progress) |

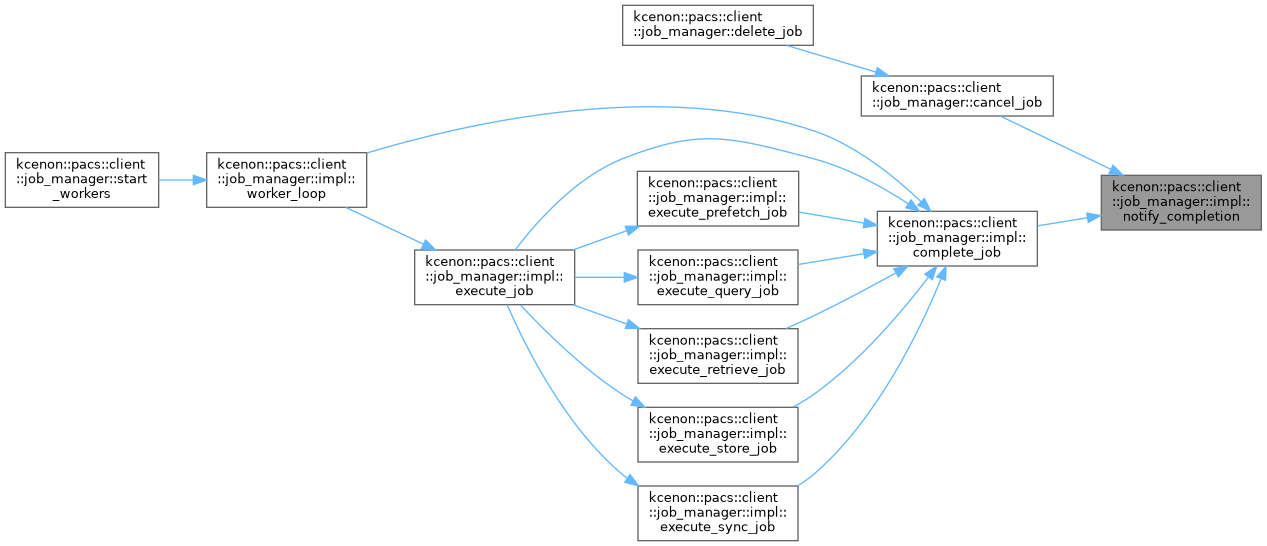

| void | notify_completion (const std::string &job_id, const job_record &record) |

| bool | is_job_cancelled (const std::string &job_id) |

| bool | is_job_paused (const std::string &job_id) |

| void | mark_job_active (const std::string &job_id) |

| void | mark_job_inactive (const std::string &job_id) |

| void | enqueue_job (const std::string &job_id, job_priority priority) |

| void | worker_loop () |

| void | execute_job (job_record &job) |

| void | execute_retrieve_job (job_record &job) |

| void | execute_store_job (job_record &job) |

| void | execute_query_job (job_record &job) |

| void | execute_sync_job (job_record &job) |

| void | execute_prefetch_job (job_record &job) |

| void | update_progress (const std::string &job_id, const job_progress &progress) |

| void | complete_job (const std::string &job_id, job_status status, const std::string &error_msg="") |

| void | load_pending_jobs_from_repo () |

Public Attributes

| Type | Attribute |
| --- | --- |
| job_manager_config | config |
| std::shared_ptr< storage::job_repository > | repo |
| std::shared_ptr< remote_node_manager > | node_manager |
| std::shared_ptr< di::ILogger > | logger |
| std::unordered_map< std::string, job_record > | job_cache |
| std::shared_mutex | cache_mutex |
| std::priority_queue< queue_entry > | job_queue |
| std::mutex | queue_mutex |
| std::condition_variable | queue_cv |
| std::unordered_set< std::string > | active_job_ids |
| std::mutex | active_mutex |
| std::unordered_set< std::string > | paused_job_ids |
| std::mutex | paused_mutex |
| std::unordered_set< std::string > | cancelled_job_ids |
| std::mutex | cancelled_mutex |
| std::vector< std::thread > | workers |
| std::atomic< bool > | running {false} |
| job_progress_callback | progress_callback |
| job_completion_callback | completion_callback |
| std::shared_mutex | callbacks_mutex |
| std::unordered_map< std::string, std::shared_ptr< std::promise< job_record > > > | completion_promises |
| std::mutex | promises_mutex |

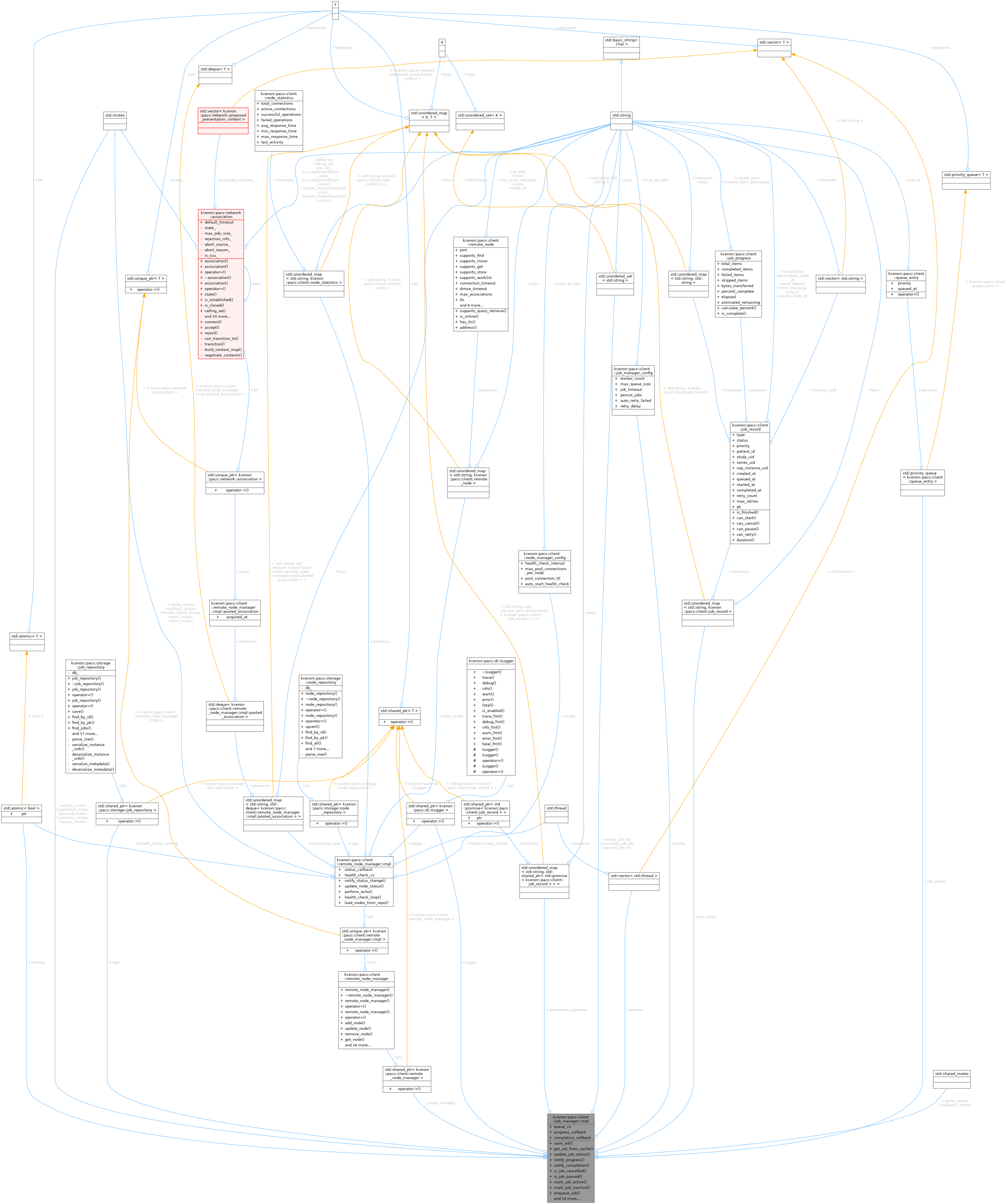

Detailed Description

Definition at line 102 of file job_manager.cpp.

Member Function Documentation

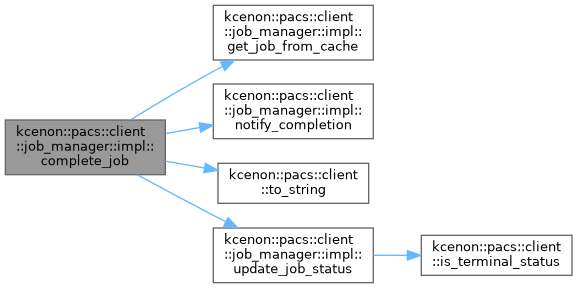

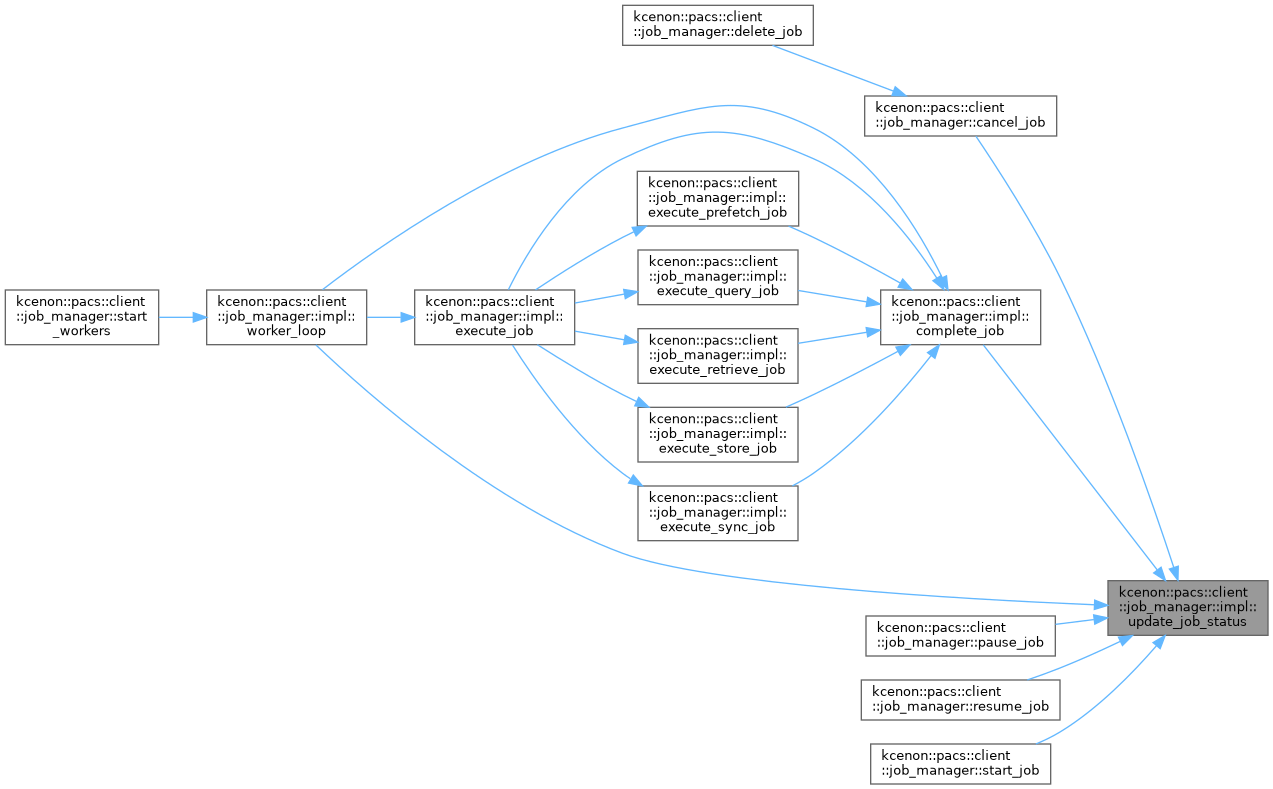

◆ complete_job()

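| void kcenon::pacs::client::job_manager::impl::complete_job (const std::string &job_id, job_status status, const std::string &error_msg="") | inline |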

Definition at line 1080 of file job_manager.cpp.

References kcenon::pacs::client::cancelled, cancelled_job_ids, cancelled_mutex, get_job_from_cache(), logger, notify_completion(), kcenon::pacs::client::to_string(), and update_job_status().

Referenced by execute_job(), execute_prefetch_job(), execute_query_job(), execute_retrieve_job(), execute_store_job(), execute_sync_job(), and worker_loop().

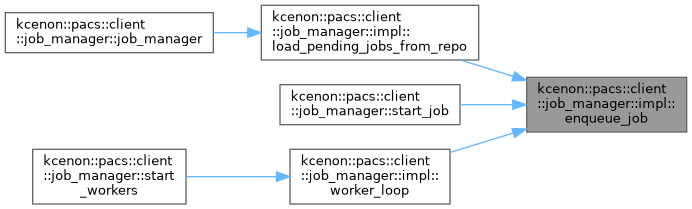

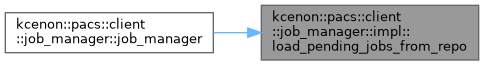

◆ enqueue_job()

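| void kcenon::pacs::client::job_manager::impl::enqueue_job (const std::string &job_id, job_priority priority) | inline |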

Definition at line 257 of file job_manager.cpp.

References job_queue, queue_cv, and queue_mutex.

Referenced by load_pending_jobs_from_repo(), kcenon::pacs::client::job_manager::start_job(), and worker_loop().
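The three referenced members (`job_queue`, `queue_mutex`, `queue_cv`) suggest the usual "push under lock, then notify" pattern. The following is a minimal sketch of that pattern, not the code in job_manager.cpp: the `job_priority` enumerators, the fields of `queue_entry`, its ordering operator, and the `queue_sketch` wrapper are all assumptions made for illustration.

```cpp
#include <condition_variable>
#include <mutex>
#include <queue>
#include <string>

// Hypothetical stand-ins: the real job_priority and queue_entry live elsewhere.
enum class job_priority { low = 0, normal = 1, high = 2 };

struct queue_entry {
    std::string job_id;
    job_priority priority;

    // std::priority_queue is a max-heap, so "less" must mean "lower priority".
    bool operator<(const queue_entry& other) const {
        return static_cast<int>(priority) < static_cast<int>(other.priority);
    }
};

struct queue_sketch {
    std::priority_queue<queue_entry> job_queue;
    std::mutex queue_mutex;
    std::condition_variable queue_cv;

    void enqueue_job(const std::string& job_id, job_priority priority) {
        {
            // Hold the lock only while mutating the queue.
            std::lock_guard<std::mutex> lock(queue_mutex);
            job_queue.push(queue_entry{job_id, priority});
        }
        // Wake one waiting worker after releasing the lock.
        queue_cv.notify_one();
    }
};
```

With this ordering, the entry at `job_queue.top()` is always the highest-priority job that has been enqueued so far.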

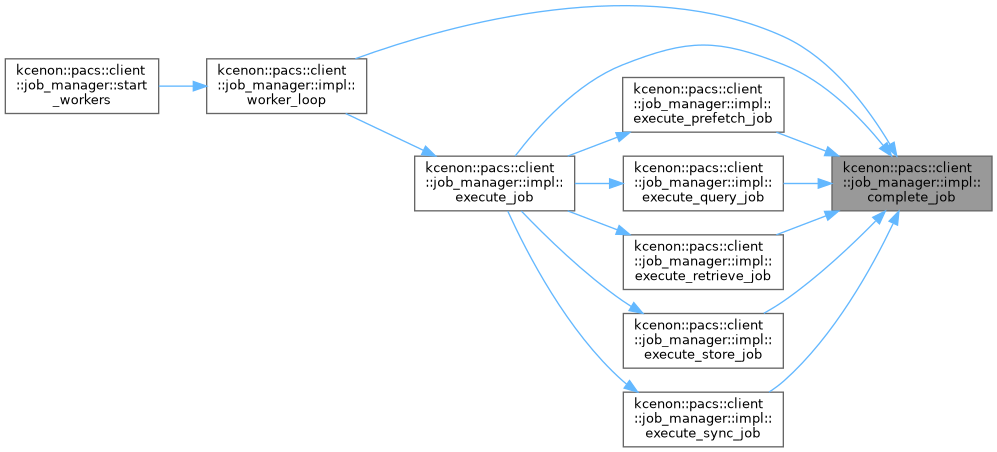

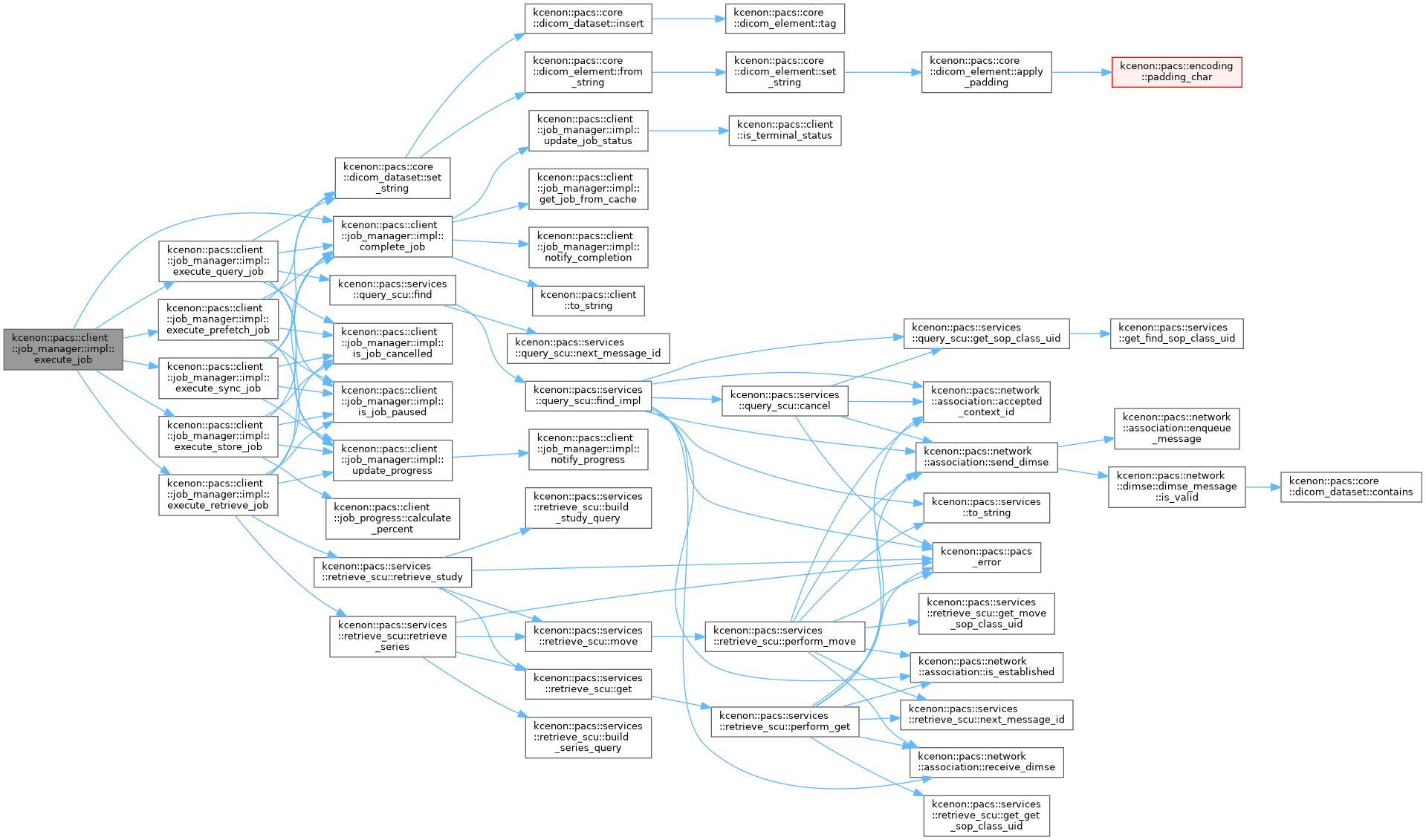

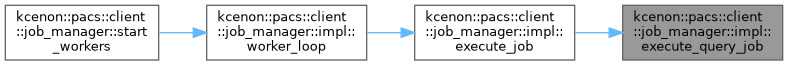

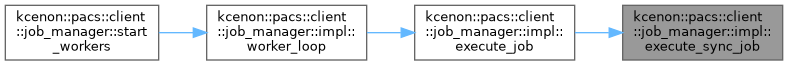

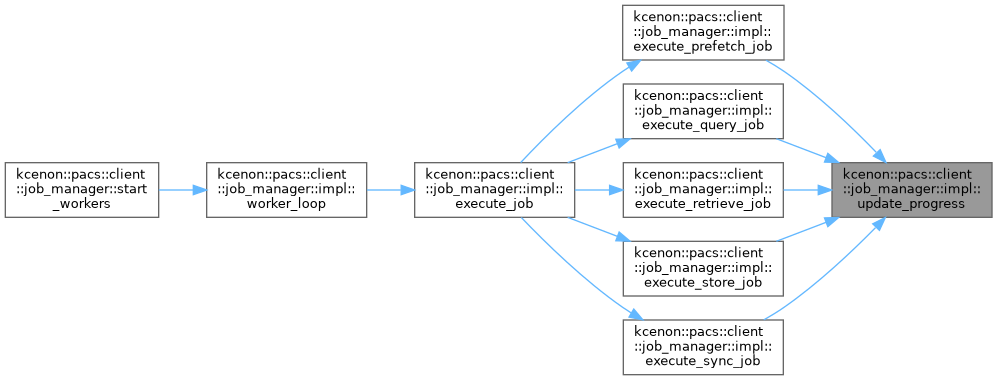

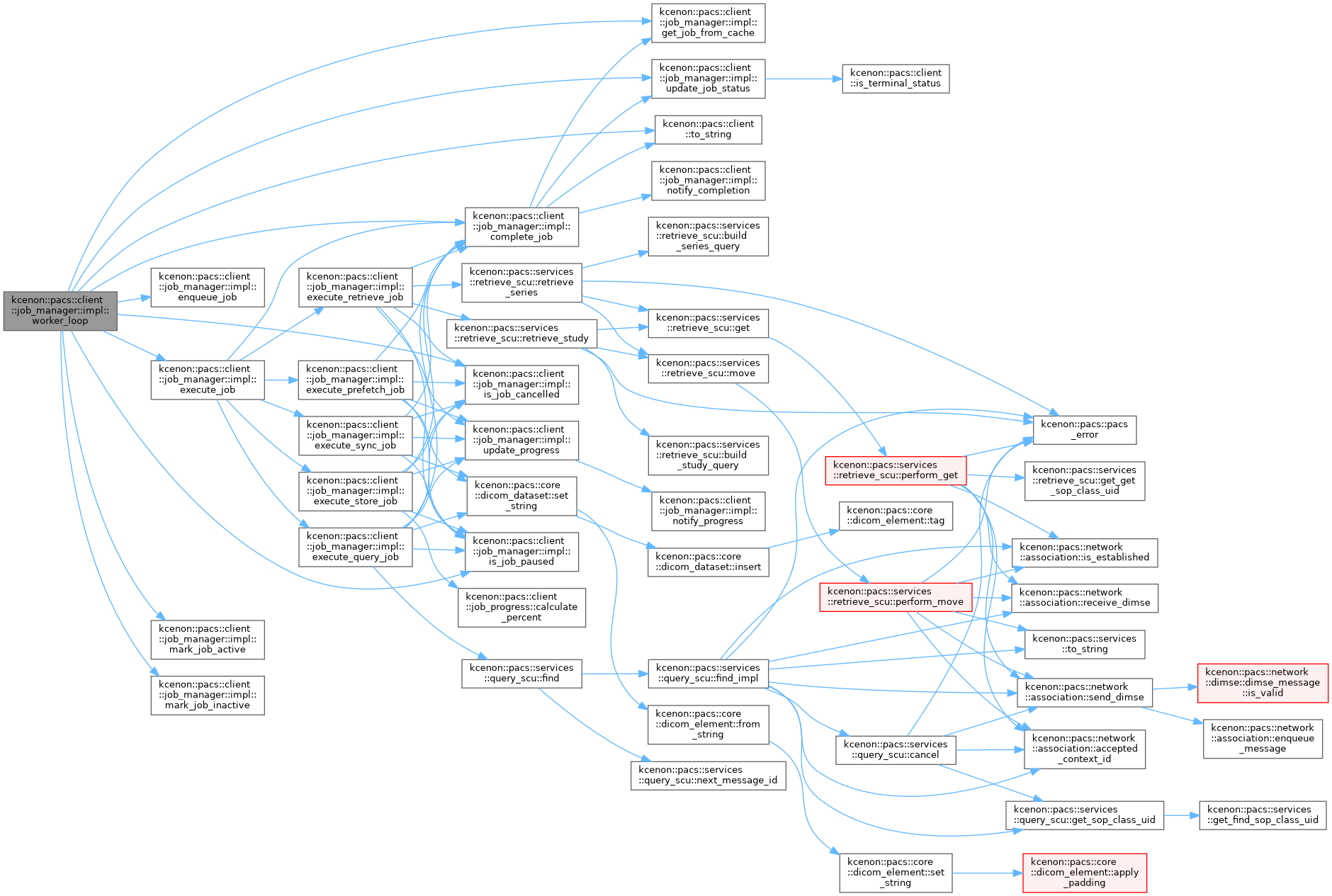

◆ execute_job()

| void kcenon::pacs::client::job_manager::impl::execute_job (job_record &job) | inline |

Definition at line 335 of file job_manager.cpp.

References complete_job(), execute_prefetch_job(), execute_query_job(), execute_retrieve_job(), execute_store_job(), execute_sync_job(), kcenon::pacs::client::failed, kcenon::pacs::client::prefetch, kcenon::pacs::client::query, kcenon::pacs::client::retrieve, kcenon::pacs::client::store, and kcenon::pacs::client::sync.

Referenced by worker_loop().
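The reference list (five `execute_*_job()` handlers, the five job-type enumerators, `failed`, and `complete_job()`) is consistent with a dispatch on the job type that fails the job when the type is not recognised. A hedged sketch of such a dispatch follows. The `job_type` enum name, the `job_record` fields, the stub handlers, and the simplified `complete_job()` that takes the record directly are assumptions for illustration, not the actual implementation.

```cpp
#include <string>

// Hypothetical minimal types; the real definitions are not shown on this page.
enum class job_type { retrieve, store, query, sync, prefetch };
enum class job_status { pending, running, completed, failed };

struct job_record {
    std::string job_id;
    job_type type = job_type::query;
    job_status status = job_status::pending;
    std::string error_message;
};

struct dispatch_sketch {
    std::string last_handler;  // records which stub ran, for demonstration only

    void execute_retrieve_job(job_record&) { last_handler = "retrieve"; }
    void execute_store_job(job_record&)    { last_handler = "store"; }
    void execute_query_job(job_record&)    { last_handler = "query"; }
    void execute_sync_job(job_record&)     { last_handler = "sync"; }
    void execute_prefetch_job(job_record&) { last_handler = "prefetch"; }

    // Simplified: the documented complete_job() takes a job_id, not a record.
    void complete_job(job_record& job, job_status status,
                      const std::string& error_msg = "") {
        job.status = status;
        job.error_message = error_msg;
    }

    void execute_job(job_record& job) {
        switch (job.type) {
            case job_type::retrieve: execute_retrieve_job(job); break;
            case job_type::store:    execute_store_job(job);    break;
            case job_type::query:    execute_query_job(job);    break;
            case job_type::sync:     execute_sync_job(job);     break;
            case job_type::prefetch: execute_prefetch_job(job); break;
            default:
                // Unrecognised type: mark the job as failed instead of running it.
                complete_job(job, job_status::failed, "unknown job type");
                break;
        }
    }
};
```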

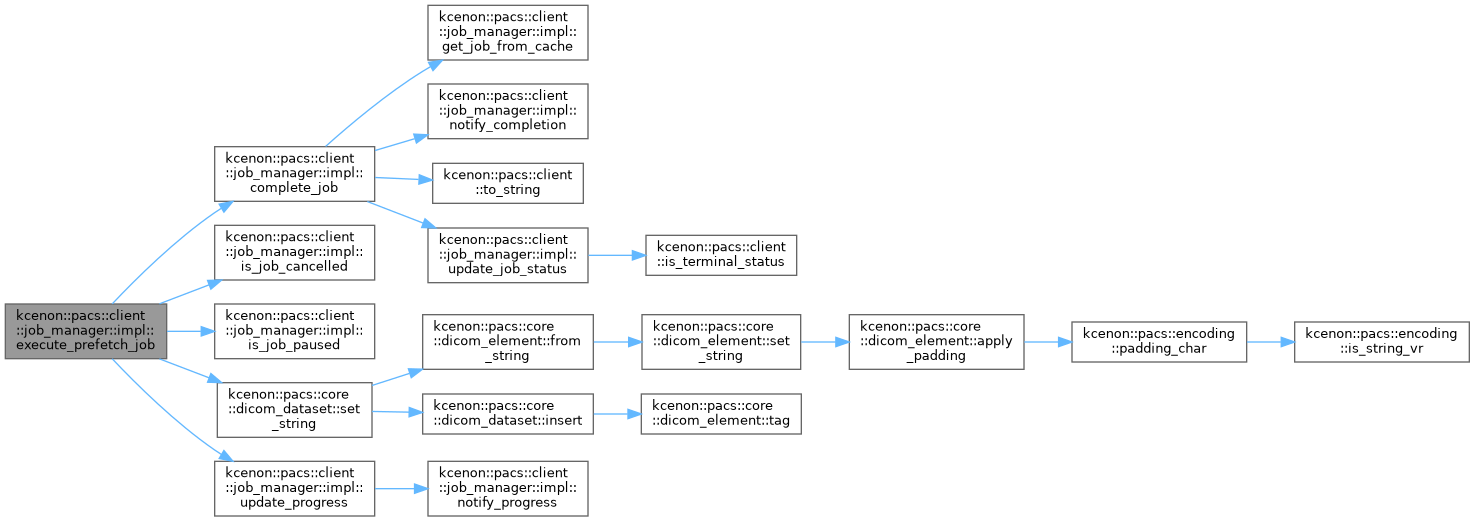

◆ execute_prefetch_job()

| void kcenon::pacs::client::job_manager::impl::execute_prefetch_job (job_record &job) | inline |

Definition at line 931 of file job_manager.cpp.

References cache_mutex, kcenon::pacs::client::cancelled, complete_job(), kcenon::pacs::client::completed, kcenon::pacs::client::job_progress::completed_items, kcenon::pacs::encoding::CS, kcenon::pacs::client::job_progress::current_item_description, kcenon::pacs::encoding::DA, kcenon::pacs::client::failed, is_job_cancelled(), is_job_paused(), job_cache, kcenon::pacs::services::query_scu_config::level, kcenon::pacs::encoding::LO, logger, kcenon::pacs::core::tags::modality, kcenon::pacs::services::query_scu_config::model, node_manager, kcenon::pacs::core::tags::patient_id, kcenon::pacs::client::job_progress::percent_complete, kcenon::pacs::core::tags::query_retrieve_level, kcenon::pacs::core::dicom_dataset::set_string(), kcenon::pacs::services::study, kcenon::pacs::core::tags::study_date, kcenon::pacs::core::tags::study_instance_uid, kcenon::pacs::services::study_root, kcenon::pacs::services::study_root_find_sop_class_uid, kcenon::pacs::client::job_progress::total_items, kcenon::pacs::encoding::UI, and update_progress().

Referenced by execute_job().

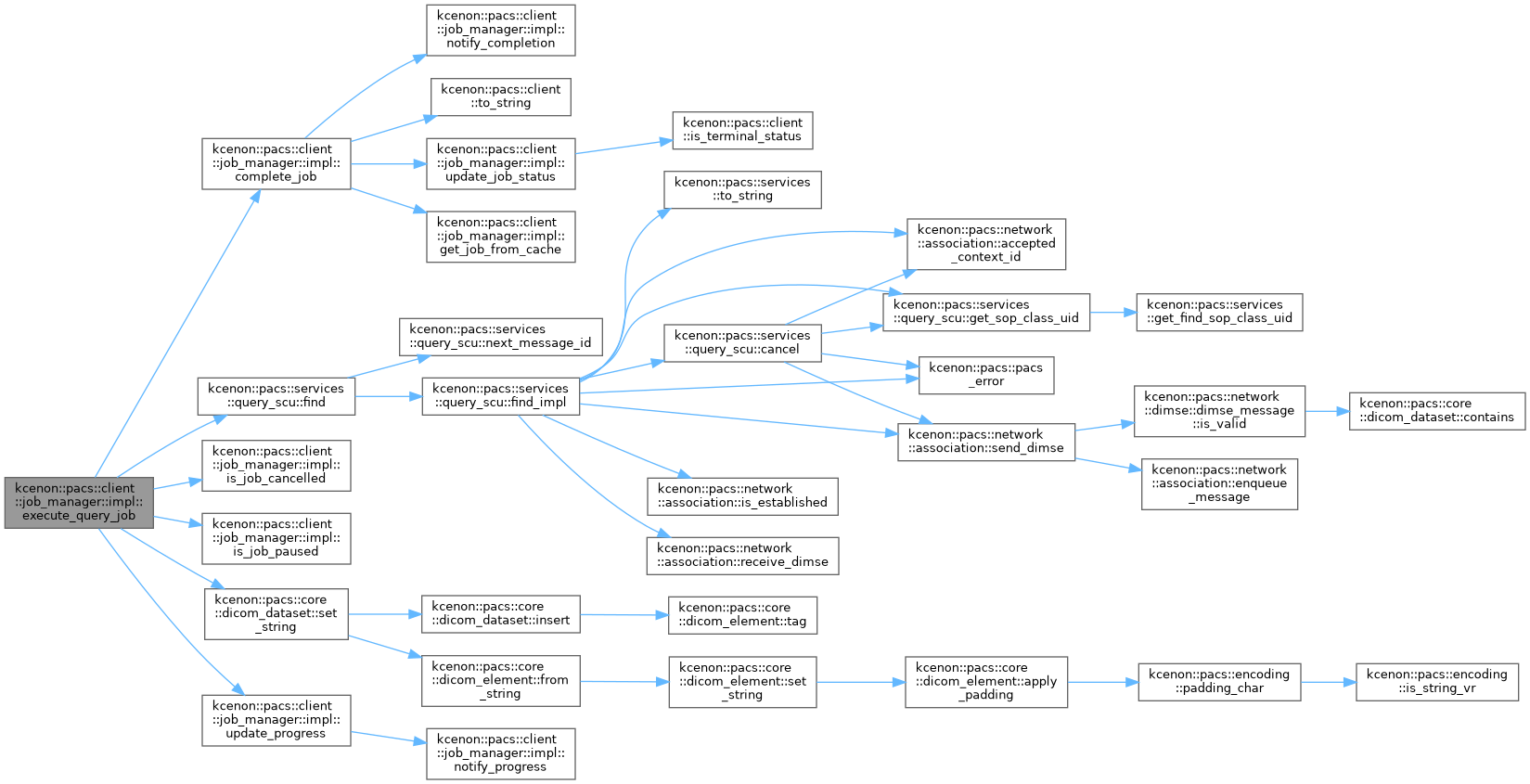

◆ execute_query_job()

| void kcenon::pacs::client::job_manager::impl::execute_query_job (job_record &job) | inline |

Definition at line 647 of file job_manager.cpp.

References kcenon::pacs::core::tags::accession_number, cache_mutex, kcenon::pacs::client::cancelled, complete_job(), kcenon::pacs::client::completed, kcenon::pacs::client::job_progress::completed_items, kcenon::pacs::encoding::CS, kcenon::pacs::client::job_progress::current_item_description, kcenon::pacs::encoding::DA, kcenon::pacs::client::failed, kcenon::pacs::services::query_scu::find(), kcenon::pacs::services::image, is_job_cancelled(), is_job_paused(), job_cache, kcenon::pacs::services::query_scu_config::level, kcenon::pacs::encoding::LO, logger, kcenon::pacs::core::tags::modality, kcenon::pacs::services::query_scu_config::model, node_manager, kcenon::pacs::services::patient, kcenon::pacs::core::tags::patient_id, kcenon::pacs::core::tags::patient_name, kcenon::pacs::client::job_progress::percent_complete, kcenon::pacs::encoding::PN, kcenon::pacs::core::tags::query_retrieve_level, kcenon::pacs::services::series, kcenon::pacs::core::tags::series_instance_uid, kcenon::pacs::core::dicom_dataset::set_string(), kcenon::pacs::encoding::SH, kcenon::pacs::services::study, kcenon::pacs::core::tags::study_date, kcenon::pacs::core::tags::study_instance_uid, kcenon::pacs::services::study_root, kcenon::pacs::services::study_root_find_sop_class_uid, kcenon::pacs::client::job_progress::total_items, kcenon::pacs::encoding::UI, and update_progress().

Referenced by execute_job().

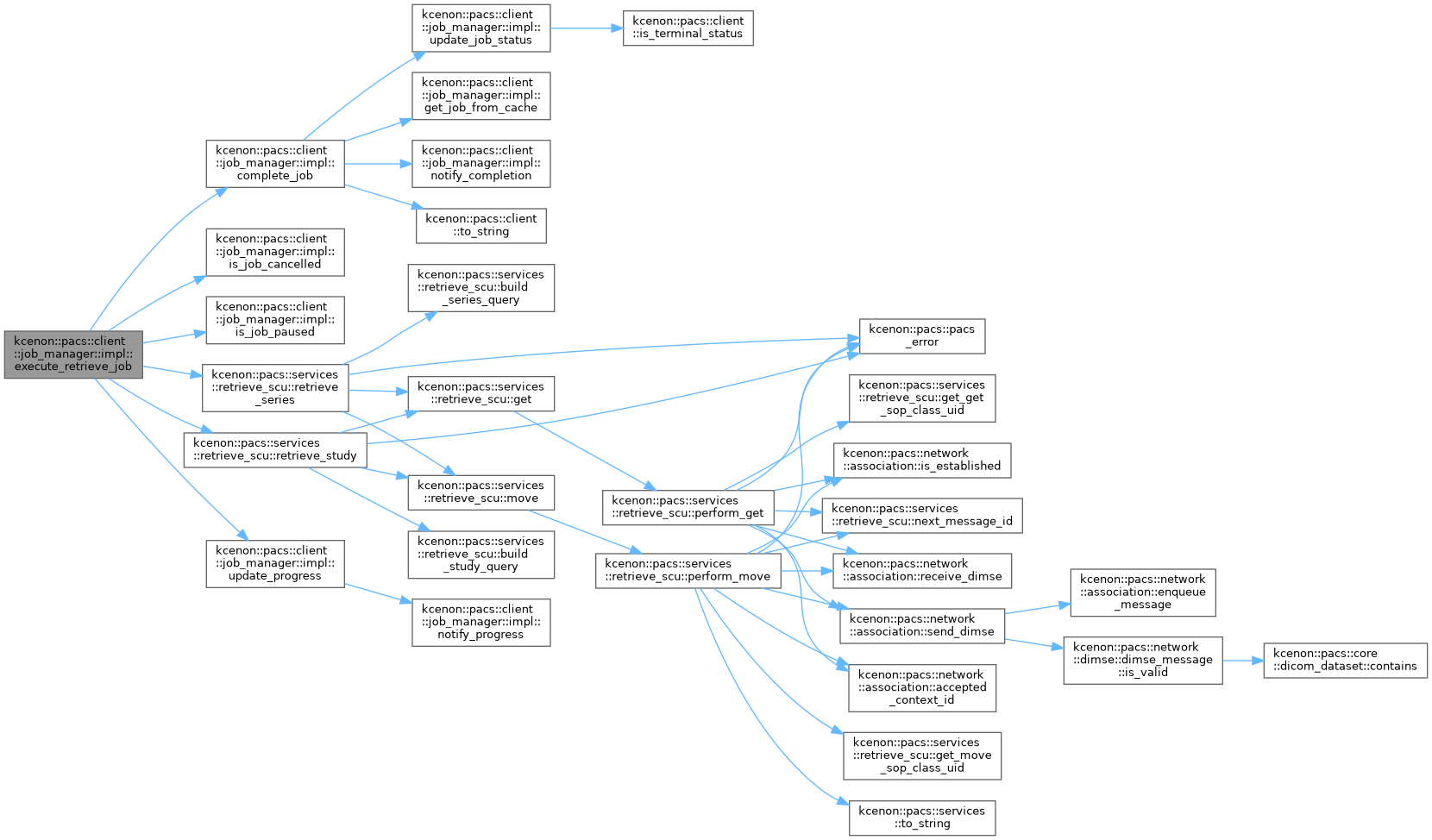

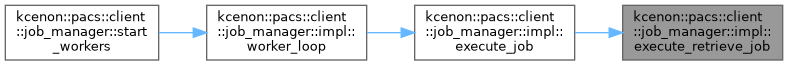

◆ execute_retrieve_job()

| void kcenon::pacs::client::job_manager::impl::execute_retrieve_job (job_record &job) | inline |

Definition at line 358 of file job_manager.cpp.

References kcenon::pacs::services::c_get, kcenon::pacs::services::c_move, kcenon::pacs::client::cancelled, complete_job(), kcenon::pacs::client::completed, kcenon::pacs::client::job_progress::completed_items, config, kcenon::pacs::client::job_progress::elapsed, kcenon::pacs::client::failed, kcenon::pacs::client::job_progress::failed_items, kcenon::pacs::services::image, is_job_cancelled(), is_job_paused(), kcenon::pacs::services::retrieve_scu_config::level, kcenon::pacs::client::job_manager_config::local_ae_title, logger, kcenon::pacs::services::retrieve_scu_config::mode, kcenon::pacs::services::retrieve_scu_config::model, kcenon::pacs::services::retrieve_scu_config::move_destination, node_manager, kcenon::pacs::client::job_progress::percent_complete, progress_callback, kcenon::pacs::services::retrieve_scu::retrieve_series(), kcenon::pacs::services::retrieve_scu::retrieve_study(), kcenon::pacs::services::series, kcenon::pacs::client::job_progress::skipped_items, kcenon::pacs::services::study, kcenon::pacs::services::study_root, kcenon::pacs::services::study_root_get_sop_class_uid, kcenon::pacs::services::study_root_move_sop_class_uid, kcenon::pacs::client::job_progress::total_items, and update_progress().

Referenced by execute_job().

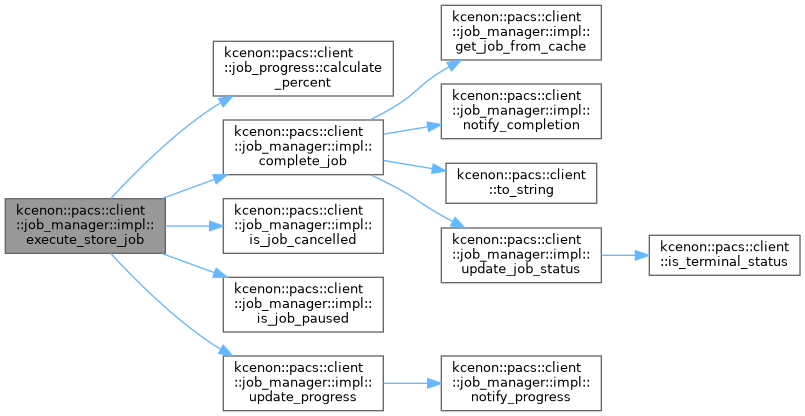

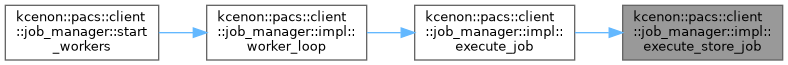

◆ execute_store_job()

| void kcenon::pacs::client::job_manager::impl::execute_store_job (job_record &job) | inline |

Definition at line 511 of file job_manager.cpp.

References kcenon::pacs::client::job_progress::calculate_percent(), kcenon::pacs::client::cancelled, complete_job(), kcenon::pacs::client::completed, kcenon::pacs::client::job_progress::completed_items, kcenon::pacs::services::storage_scu_config::continue_on_error, kcenon::pacs::client::job_progress::current_item, kcenon::pacs::client::job_progress::current_item_description, kcenon::pacs::client::failed, kcenon::pacs::client::job_progress::failed_items, is_job_cancelled(), is_job_paused(), logger, node_manager, kcenon::pacs::client::job_progress::percent_complete, kcenon::pacs::client::job_progress::total_items, and update_progress().

Referenced by execute_job().

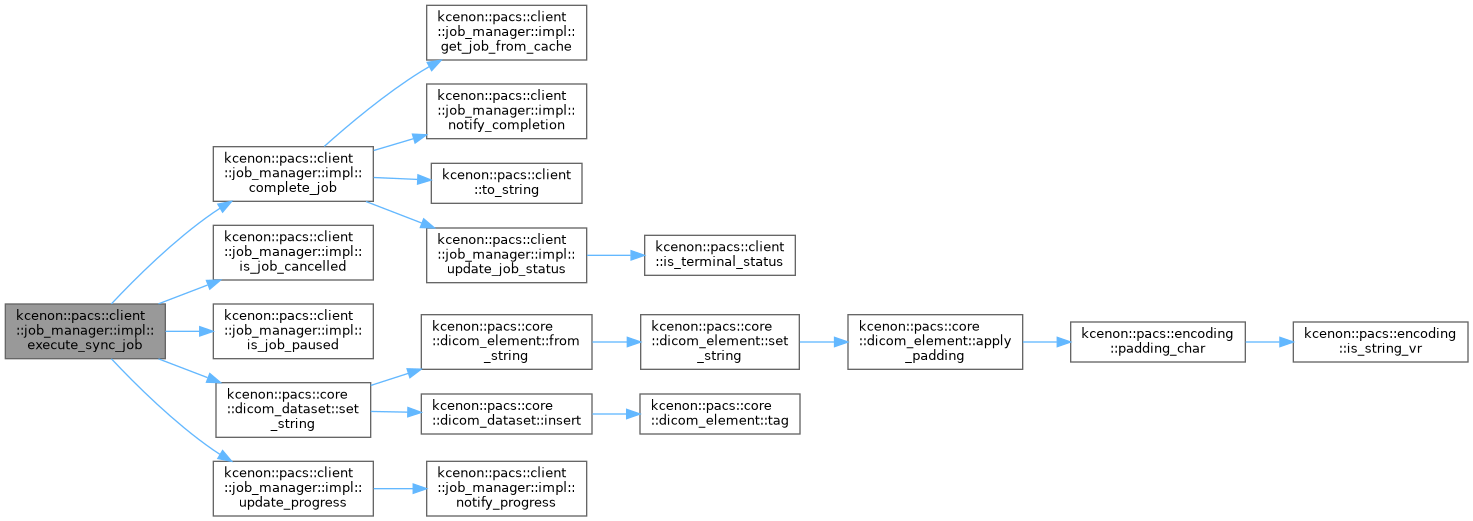

◆ execute_sync_job()

| void kcenon::pacs::client::job_manager::impl::execute_sync_job (job_record &job) | inline |

Definition at line 802 of file job_manager.cpp.

References cache_mutex, kcenon::pacs::client::cancelled, complete_job(), kcenon::pacs::client::completed, kcenon::pacs::client::job_progress::completed_items, kcenon::pacs::encoding::CS, kcenon::pacs::client::job_progress::current_item_description, kcenon::pacs::encoding::DA, kcenon::pacs::client::failed, is_job_cancelled(), is_job_paused(), job_cache, kcenon::pacs::services::query_scu_config::level, kcenon::pacs::encoding::LO, logger, kcenon::pacs::services::query_scu_config::model, node_manager, kcenon::pacs::core::tags::patient_id, kcenon::pacs::core::tags::patient_name, kcenon::pacs::client::job_progress::percent_complete, kcenon::pacs::encoding::PN, kcenon::pacs::core::tags::query_retrieve_level, kcenon::pacs::core::dicom_dataset::set_string(), kcenon::pacs::services::study, kcenon::pacs::core::tags::study_date, kcenon::pacs::core::tags::study_instance_uid, kcenon::pacs::services::study_root, kcenon::pacs::services::study_root_find_sop_class_uid, kcenon::pacs::client::job_progress::total_items, kcenon::pacs::encoding::UI, and update_progress().

Referenced by execute_job().

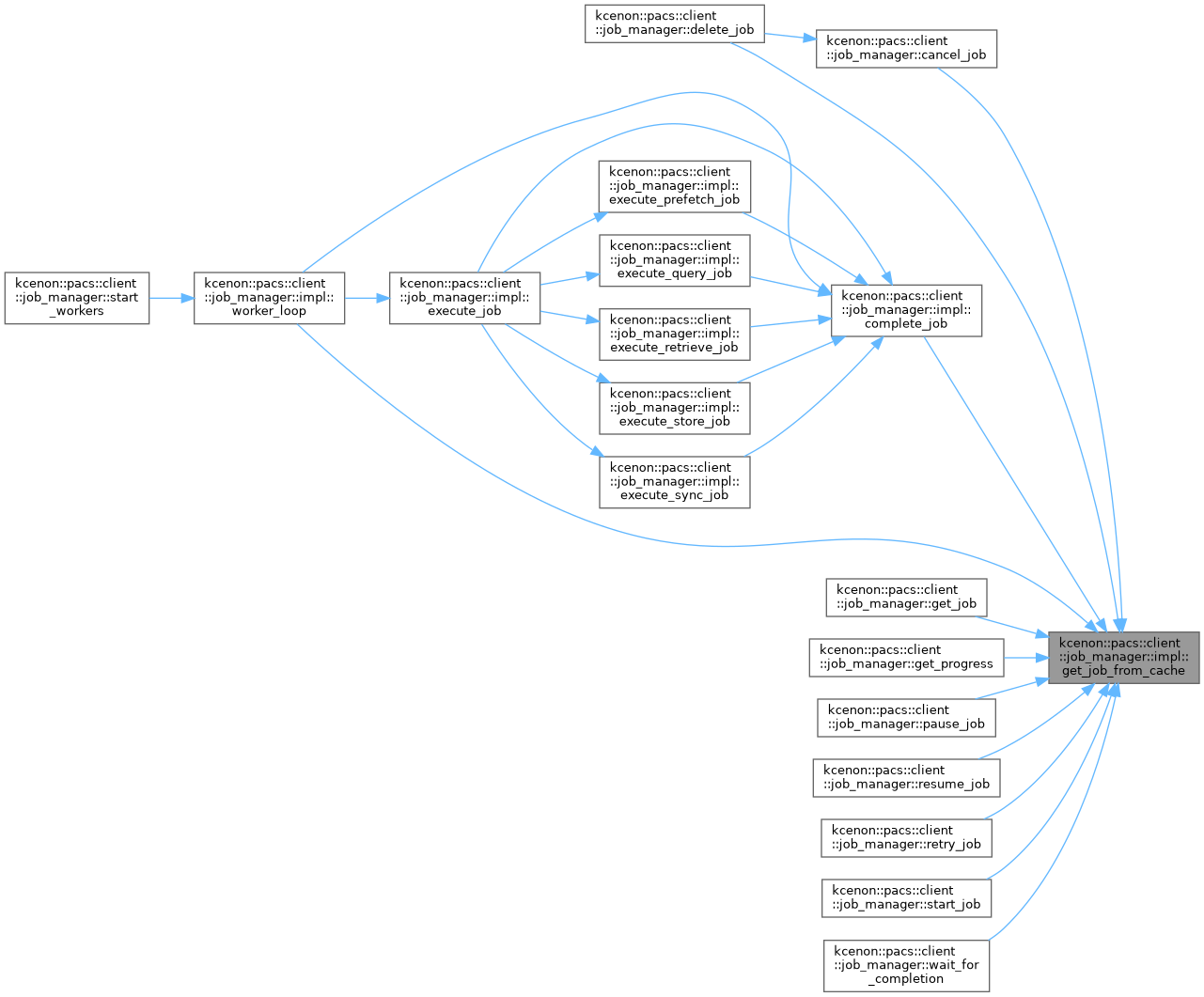

◆ get_job_from_cache()

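| std::optional< job_record > kcenon::pacs::client::job_manager::impl::get_job_from_cache (std::string_view job_id) const | inline |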

Definition at line 162 of file job_manager.cpp.

References cache_mutex, and job_cache.

Referenced by kcenon::pacs::client::job_manager::cancel_job(), complete_job(), kcenon::pacs::client::job_manager::delete_job(), kcenon::pacs::client::job_manager::get_job(), kcenon::pacs::client::job_manager::get_progress(), kcenon::pacs::client::job_manager::pause_job(), kcenon::pacs::client::job_manager::resume_job(), kcenon::pacs::client::job_manager::retry_job(), kcenon::pacs::client::job_manager::start_job(), kcenon::pacs::client::job_manager::wait_for_completion(), and worker_loop().
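Because `cache_mutex` is a `std::shared_mutex` and this accessor is `const`, a reader/writer locking scheme is the natural fit: lookups take a shared lock and return a copy, while writers take an exclusive lock. The sketch below illustrates that scheme under stated assumptions. The `job_record` fields and the `cache_sketch` wrapper are hypothetical, and the repository persistence that `save_job()` also references is omitted.

```cpp
#include <mutex>
#include <optional>
#include <shared_mutex>
#include <string>
#include <string_view>
#include <unordered_map>

// Hypothetical minimal record; the real job_record has more fields.
struct job_record {
    std::string job_id;
    int status = 0;
};

struct cache_sketch {
    std::unordered_map<std::string, job_record> job_cache;
    mutable std::shared_mutex cache_mutex;  // mutable so const readers can lock

    // Writer: exclusive lock (persistence to the repository is left out here).
    void save_job(const job_record& job) {
        std::unique_lock<std::shared_mutex> lock(cache_mutex);
        job_cache[job.job_id] = job;
    }

    // Reader: shared lock, returns a copy so the caller never holds a
    // reference into the map after the lock is released.
    std::optional<job_record> get_job_from_cache(std::string_view job_id) const {
        std::shared_lock<std::shared_mutex> lock(cache_mutex);
        auto it = job_cache.find(std::string(job_id));
        if (it == job_cache.end()) {
            return std::nullopt;
        }
        return it->second;
    }
};
```

Returning `std::optional<job_record>` by value costs a copy per lookup, but it lets many callers read concurrently without holding references into the map.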

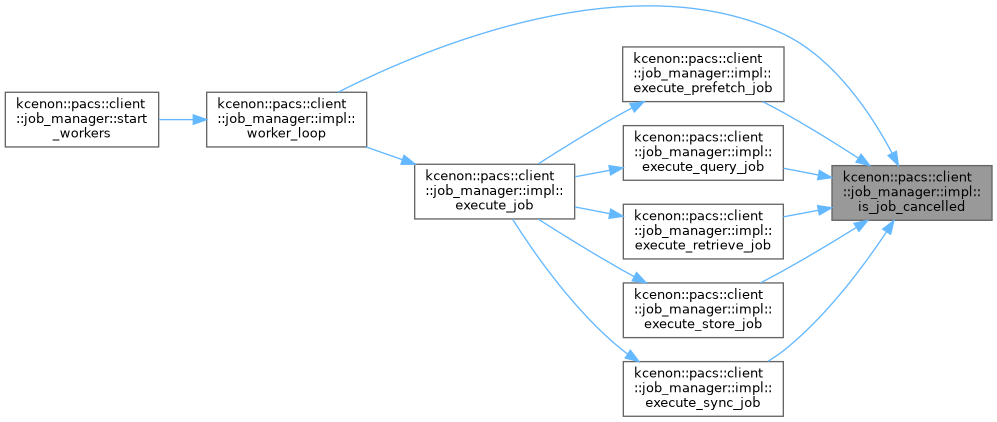

◆ is_job_cancelled()

| bool kcenon::pacs::client::job_manager::impl::is_job_cancelled (const std::string &job_id) | inline |

Definition at line 237 of file job_manager.cpp.

References cancelled_job_ids, and cancelled_mutex.

Referenced by execute_prefetch_job(), execute_query_job(), execute_retrieve_job(), execute_store_job(), execute_sync_job(), and worker_loop().

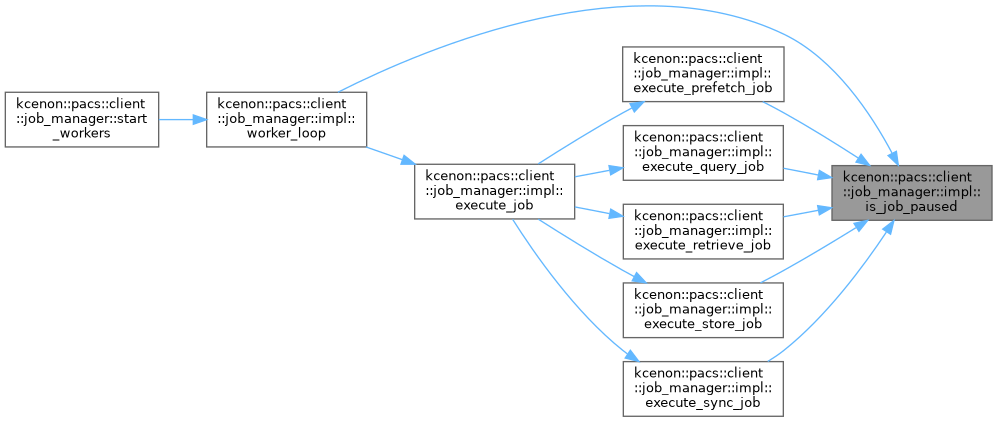

◆ is_job_paused()

| bool kcenon::pacs::client::job_manager::impl::is_job_paused (const std::string &job_id) | inline |

Definition at line 242 of file job_manager.cpp.

References paused_job_ids, and paused_mutex.

Referenced by execute_prefetch_job(), execute_query_job(), execute_retrieve_job(), execute_store_job(), execute_sync_job(), and worker_loop().
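`is_job_cancelled()` and `is_job_paused()` each reference only an ID set and its mutex, and both are called from every `execute_*_job()` handler, which points to cooperative stop checks: a job polls the flags between work items. The sketch below shows one way that could look. The `flags_sketch` wrapper, the `request_cancel()` and `request_pause()` helpers, and `run_cooperatively()` are hypothetical names introduced for illustration only.

```cpp
#include <mutex>
#include <string>
#include <unordered_set>

struct flags_sketch {
    std::unordered_set<std::string> paused_job_ids;
    std::mutex paused_mutex;
    std::unordered_set<std::string> cancelled_job_ids;
    std::mutex cancelled_mutex;

    bool is_job_cancelled(const std::string& job_id) {
        std::lock_guard<std::mutex> lock(cancelled_mutex);
        return cancelled_job_ids.count(job_id) > 0;
    }

    bool is_job_paused(const std::string& job_id) {
        std::lock_guard<std::mutex> lock(paused_mutex);
        return paused_job_ids.count(job_id) > 0;
    }

    // Hypothetical helpers; per the reference lists, the real sets are
    // touched by job_manager::cancel_job(), pause_job(), and resume_job().
    void request_cancel(const std::string& job_id) {
        std::lock_guard<std::mutex> lock(cancelled_mutex);
        cancelled_job_ids.insert(job_id);
    }

    void request_pause(const std::string& job_id) {
        std::lock_guard<std::mutex> lock(paused_mutex);
        paused_job_ids.insert(job_id);
    }
};

// Runs step(i) for each item and stops before the next item once a flag is set.
// Returns the number of items that were processed.
template <typename Step>
int run_cooperatively(flags_sketch& flags, const std::string& job_id,
                      int total_items, Step step) {
    int done = 0;
    for (int i = 0; i < total_items; ++i) {
        if (flags.is_job_cancelled(job_id) || flags.is_job_paused(job_id)) {
            break;
        }
        step(i);
        ++done;
    }
    return done;
}
```

Checking between items means a stop request takes effect only at the next item boundary; the item in flight when the flag is set still completes.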

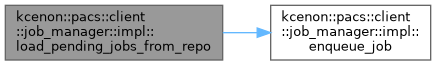

◆ load_pending_jobs_from_repo()

| void kcenon::pacs::client::job_manager::impl::load_pending_jobs_from_repo () | inline |

Definition at line 1099 of file job_manager.cpp.

References cache_mutex, config, enqueue_job(), job_cache, logger, kcenon::pacs::client::job_manager_config::max_queue_size, kcenon::pacs::client::pending, and repo.

Referenced by kcenon::pacs::client::job_manager::job_manager().

◆ mark_job_active()

| void kcenon::pacs::client::job_manager::impl::mark_job_active (const std::string &job_id) | inline |

Definition at line 247 of file job_manager.cpp.

References active_job_ids, and active_mutex.

Referenced by worker_loop().

◆ mark_job_inactive()

| void kcenon::pacs::client::job_manager::impl::mark_job_inactive (const std::string &job_id) | inline |

Definition at line 252 of file job_manager.cpp.

References active_job_ids, and active_mutex.

Referenced by worker_loop().

◆ notify_completion()

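| void kcenon::pacs::client::job_manager::impl::notify_completion (const std::string &job_id, const job_record &record) | inline |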

Definition at line 217 of file job_manager.cpp.

References callbacks_mutex, completion_callback, completion_promises, and promises_mutex.

Referenced by kcenon::pacs::client::job_manager::cancel_job(), and complete_job().

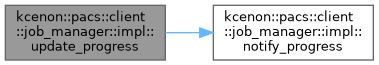

◆ notify_progress()

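| void kcenon::pacs::client::job_manager::impl::notify_progress (const std::string &job_id, const job_progress &progress) | inline |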

Definition at line 210 of file job_manager.cpp.

References callbacks_mutex, and progress_callback.

Referenced by update_progress().

◆ save_job()

| void kcenon::pacs::client::job_manager::impl::save_job (const job_record &job) | inline |

Definition at line 149 of file job_manager.cpp.

References cache_mutex, job_cache, and repo.

Referenced by kcenon::pacs::client::job_manager::create_prefetch_job(), kcenon::pacs::client::job_manager::create_query_job(), kcenon::pacs::client::job_manager::create_retrieve_job(), kcenon::pacs::client::job_manager::create_store_job(), and kcenon::pacs::client::job_manager::create_sync_job().

◆ update_job_status()

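| void kcenon::pacs::client::job_manager::impl::update_job_status (const std::string &job_id, job_status status, const std::string &error_msg="", const std::string &error_details="") | inline |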

Definition at line 171 of file job_manager.cpp.

References cache_mutex, kcenon::pacs::client::completed, kcenon::pacs::client::failed, kcenon::pacs::client::is_terminal_status(), job_cache, repo, and kcenon::pacs::client::running.

Referenced by kcenon::pacs::client::job_manager::cancel_job(), complete_job(), kcenon::pacs::client::job_manager::pause_job(), kcenon::pacs::client::job_manager::resume_job(), kcenon::pacs::client::job_manager::start_job(), and worker_loop().

◆ update_progress()

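| void kcenon::pacs::client::job_manager::impl::update_progress (const std::string &job_id, const job_progress &progress) | inline |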

Definition at line 1061 of file job_manager.cpp.

References cache_mutex, job_cache, notify_progress(), and repo.

Referenced by execute_prefetch_job(), execute_query_job(), execute_retrieve_job(), execute_store_job(), and execute_sync_job().

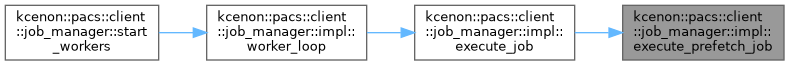

◆ worker_loop()

| void kcenon::pacs::client::job_manager::impl::worker_loop () | inline |

Definition at line 269 of file job_manager.cpp.

References complete_job(), enqueue_job(), execute_job(), kcenon::pacs::client::failed, get_job_from_cache(), is_job_cancelled(), is_job_paused(), job_queue, logger, mark_job_active(), mark_job_inactive(), queue_cv, queue_mutex, running, kcenon::pacs::client::running, kcenon::pacs::client::to_string(), and update_job_status().

Referenced by kcenon::pacs::client::job_manager::start_workers().

Member Data Documentation

◆ active_job_ids

| std::unordered_set<std::string> kcenon::pacs::client::job_manager::impl::active_job_ids |

Definition at line 121 of file job_manager.cpp.

Referenced by kcenon::pacs::client::job_manager::active_jobs(), kcenon::pacs::client::job_manager::cancel_job(), mark_job_active(), and mark_job_inactive().

◆ active_mutex

| std::mutex kcenon::pacs::client::job_manager::impl::active_mutex | mutable |

Definition at line 122 of file job_manager.cpp.

Referenced by kcenon::pacs::client::job_manager::active_jobs(), kcenon::pacs::client::job_manager::cancel_job(), mark_job_active(), and mark_job_inactive().

◆ cache_mutex

| std::shared_mutex kcenon::pacs::client::job_manager::impl::cache_mutex | mutable |

Definition at line 113 of file job_manager.cpp.

Referenced by kcenon::pacs::client::job_manager::delete_job(), execute_prefetch_job(), execute_query_job(), execute_sync_job(), get_job_from_cache(), kcenon::pacs::client::job_manager::list_jobs(), kcenon::pacs::client::job_manager::list_jobs_by_node(), load_pending_jobs_from_repo(), kcenon::pacs::client::job_manager::retry_job(), save_job(), kcenon::pacs::client::job_manager::start_job(), update_job_status(), and update_progress().

◆ callbacks_mutex

| std::shared_mutex kcenon::pacs::client::job_manager::impl::callbacks_mutex | mutable |

Definition at line 139 of file job_manager.cpp.

Referenced by notify_completion(), notify_progress(), kcenon::pacs::client::job_manager::set_completion_callback(), and kcenon::pacs::client::job_manager::set_progress_callback().

◆ cancelled_job_ids

| std::unordered_set<std::string> kcenon::pacs::client::job_manager::impl::cancelled_job_ids |

Definition at line 129 of file job_manager.cpp.

Referenced by kcenon::pacs::client::job_manager::cancel_job(), complete_job(), and is_job_cancelled().

◆ cancelled_mutex

| std::mutex kcenon::pacs::client::job_manager::impl::cancelled_mutex | mutable |

Definition at line 130 of file job_manager.cpp.

Referenced by kcenon::pacs::client::job_manager::cancel_job(), complete_job(), and is_job_cancelled().

◆ completion_callback

| job_completion_callback kcenon::pacs::client::job_manager::impl::completion_callback |

Definition at line 138 of file job_manager.cpp.

Referenced by notify_completion(), and kcenon::pacs::client::job_manager::set_completion_callback().

◆ completion_promises

| std::unordered_map<std::string, std::shared_ptr<std::promise<job_record> > > kcenon::pacs::client::job_manager::impl::completion_promises |

Definition at line 142 of file job_manager.cpp.

Referenced by notify_completion(), and kcenon::pacs::client::job_manager::wait_for_completion().

◆ config

| job_manager_config kcenon::pacs::client::job_manager::impl::config |

Definition at line 104 of file job_manager.cpp.

Referenced by kcenon::pacs::client::job_manager::config(), execute_retrieve_job(), kcenon::pacs::client::job_manager::job_manager(), load_pending_jobs_from_repo(), and kcenon::pacs::client::job_manager::start_workers().

◆ job_cache

| std::unordered_map<std::string, job_record> kcenon::pacs::client::job_manager::impl::job_cache |

Definition at line 112 of file job_manager.cpp.

Referenced by kcenon::pacs::client::job_manager::delete_job(), execute_prefetch_job(), execute_query_job(), execute_sync_job(), get_job_from_cache(), kcenon::pacs::client::job_manager::list_jobs(), kcenon::pacs::client::job_manager::list_jobs_by_node(), load_pending_jobs_from_repo(), kcenon::pacs::client::job_manager::retry_job(), save_job(), kcenon::pacs::client::job_manager::start_job(), update_job_status(), and update_progress().

◆ job_queue

| std::priority_queue<queue_entry> kcenon::pacs::client::job_manager::impl::job_queue |

Definition at line 116 of file job_manager.cpp.

Referenced by enqueue_job(), kcenon::pacs::client::job_manager::pending_jobs(), and worker_loop().

◆ logger

| std::shared_ptr<di::ILogger> kcenon::pacs::client::job_manager::impl::logger |

Definition at line 109 of file job_manager.cpp.

Referenced by kcenon::pacs::client::job_manager::cancel_job(), complete_job(), kcenon::pacs::client::job_manager::create_prefetch_job(), kcenon::pacs::client::job_manager::create_query_job(), kcenon::pacs::client::job_manager::create_retrieve_job(), kcenon::pacs::client::job_manager::create_store_job(), kcenon::pacs::client::job_manager::create_sync_job(), kcenon::pacs::client::job_manager::delete_job(), execute_prefetch_job(), execute_query_job(), execute_retrieve_job(), execute_store_job(), execute_sync_job(), kcenon::pacs::client::job_manager::job_manager(), load_pending_jobs_from_repo(), kcenon::pacs::client::job_manager::pause_job(), kcenon::pacs::client::job_manager::resume_job(), kcenon::pacs::client::job_manager::retry_job(), kcenon::pacs::client::job_manager::start_job(), kcenon::pacs::client::job_manager::start_workers(), kcenon::pacs::client::job_manager::stop_workers(), and worker_loop().

◆ node_manager

| std::shared_ptr<remote_node_manager> kcenon::pacs::client::job_manager::impl::node_manager |

Definition at line 108 of file job_manager.cpp.

Referenced by execute_prefetch_job(), execute_query_job(), execute_retrieve_job(), execute_store_job(), execute_sync_job(), and kcenon::pacs::client::job_manager::job_manager().

◆ paused_job_ids

| std::unordered_set<std::string> kcenon::pacs::client::job_manager::impl::paused_job_ids |

Definition at line 125 of file job_manager.cpp.

Referenced by is_job_paused(), kcenon::pacs::client::job_manager::pause_job(), and kcenon::pacs::client::job_manager::resume_job().

◆ paused_mutex

| std::mutex kcenon::pacs::client::job_manager::impl::paused_mutex | mutable |

Definition at line 126 of file job_manager.cpp.

Referenced by is_job_paused(), kcenon::pacs::client::job_manager::pause_job(), and kcenon::pacs::client::job_manager::resume_job().

◆ progress_callback

| job_progress_callback kcenon::pacs::client::job_manager::impl::progress_callback |

Definition at line 137 of file job_manager.cpp.

Referenced by execute_retrieve_job(), notify_progress(), and kcenon::pacs::client::job_manager::set_progress_callback().

◆ promises_mutex

| std::mutex kcenon::pacs::client::job_manager::impl::promises_mutex | mutable |

Definition at line 143 of file job_manager.cpp.

Referenced by notify_completion(), and kcenon::pacs::client::job_manager::wait_for_completion().

◆ queue_cv

| std::condition_variable kcenon::pacs::client::job_manager::impl::queue_cv |

Definition at line 118 of file job_manager.cpp.

Referenced by enqueue_job(), kcenon::pacs::client::job_manager::stop_workers(), and worker_loop().

◆ queue_mutex

| std::mutex kcenon::pacs::client::job_manager::impl::queue_mutex | mutable |

Definition at line 117 of file job_manager.cpp.

Referenced by enqueue_job(), kcenon::pacs::client::job_manager::pending_jobs(), and worker_loop().

◆ repo

| std::shared_ptr<storage::job_repository> kcenon::pacs::client::job_manager::impl::repo |

Definition at line 107 of file job_manager.cpp.

Referenced by kcenon::pacs::client::job_manager::completed_jobs_today(), kcenon::pacs::client::job_manager::delete_job(), kcenon::pacs::client::job_manager::failed_jobs_today(), kcenon::pacs::client::job_manager::job_manager(), kcenon::pacs::client::job_manager::list_jobs(), kcenon::pacs::client::job_manager::list_jobs_by_node(), load_pending_jobs_from_repo(), kcenon::pacs::client::job_manager::retry_job(), save_job(), update_job_status(), and update_progress().

◆ running

| std::atomic<bool> kcenon::pacs::client::job_manager::impl::running {false} |

Definition at line 134 of file job_manager.cpp.

Referenced by kcenon::pacs::client::job_manager::is_running(), kcenon::pacs::client::job_manager::start_workers(), kcenon::pacs::client::job_manager::stop_workers(), and worker_loop().

◆ workers

| std::vector<std::thread> kcenon::pacs::client::job_manager::impl::workers |

Definition at line 133 of file job_manager.cpp.

Referenced by kcenon::pacs::client::job_manager::start_workers(), and kcenon::pacs::client::job_manager::stop_workers().

The documentation for this struct was generated from the following file:

- src/client/job_manager.cpp