Lock-free typed job queue with MPMC patterns and performance benchmarks.

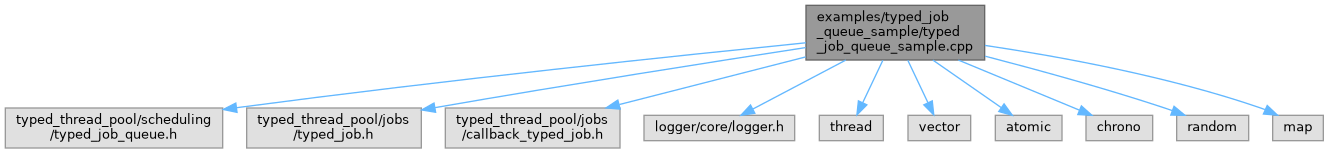

- `#include "typed_thread_pool/scheduling/typed_job_queue.h"`
- `#include "typed_thread_pool/jobs/typed_job.h"`
- `#include "typed_thread_pool/jobs/callback_typed_job.h"`
- `#include "logger/core/logger.h"`
- `#include <thread>`
- `#include <vector>`
- `#include <atomic>`
- `#include <chrono>`
- `#include <random>`
- `#include <map>`


Functions

| Return type | Name |
| --- | --- |
| void | basic_typed_queue_example() |
| void | mpmc_typed_queue_example() |
| void | performance_comparison_example() |
| void | task_scheduling_example() |
| void | stress_test_example() |
| int | main() |

Detailed Description

Lock-free typed job queue with MPMC patterns and performance benchmarks.

Definition in file typed_job_queue_sample.cpp.
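To make the idea of a typed job queue concrete, here is a minimal sketch that uses only the standard library. It is not the library's `typed_job_queue`: the names `job_type`, `sketch_typed_queue`, and `run_typed_queue_sketch` are hypothetical, and a mutex stands in for the lock-free machinery the sample is described as using. It assumes that "typed" means each job carries a category and that the most urgent non-empty category is served first.

```cpp
#include <deque>
#include <functional>
#include <map>
#include <mutex>
#include <optional>
#include <utility>
#include <vector>

// Hypothetical job categories; a lower value is served first.
enum class job_type { realtime = 0, batch = 1, background = 2 };

// Mutex-based stand-in for a typed job queue.
// Assumption: this is NOT lock-free and NOT the library's class.
class sketch_typed_queue {
public:
    using job = std::function<void()>;

    void enqueue(job_type type, job work) {
        std::lock_guard<std::mutex> lock(mutex_);
        buckets_[type].push_back(std::move(work));
    }

    // Returns the oldest job of the most urgent non-empty type,
    // or std::nullopt when every bucket is empty.
    std::optional<job> dequeue() {
        std::lock_guard<std::mutex> lock(mutex_);
        for (auto& [type, bucket] : buckets_) {
            if (!bucket.empty()) {
                job next = std::move(bucket.front());
                bucket.pop_front();
                return next;
            }
        }
        return std::nullopt;
    }

private:
    std::mutex mutex_;
    // std::map iterates keys in ascending order, so realtime comes first.
    std::map<job_type, std::deque<job>> buckets_;
};

// Enqueues three jobs out of order and records the order in which they run.
std::vector<int> run_typed_queue_sketch() {
    sketch_typed_queue queue;
    std::vector<int> order;
    queue.enqueue(job_type::background, [&order] { order.push_back(2); });
    queue.enqueue(job_type::realtime, [&order] { order.push_back(0); });
    queue.enqueue(job_type::batch, [&order] { order.push_back(1); });
    while (auto next = queue.dequeue()) {
        (*next)();
    }
    return order;
}
```

Calling `run_typed_queue_sketch()` drains the queue on a single thread, so the recorded order depends only on the job types and not on the order of insertion.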

Function Documentation

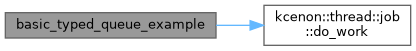

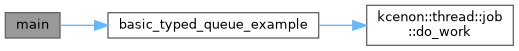

◆ basic_typed_queue_example()

`void basic_typed_queue_example()`

Examples: typed_job_queue_sample.cpp.

Definition at line 35 of file typed_job_queue_sample.cpp.

References kcenon::thread::job::do_work().

Referenced by main().

◆ main()

`int main()`

Definition at line 507 of file typed_job_queue_sample.cpp.

References basic_typed_queue_example().

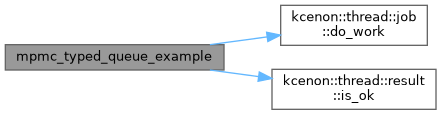

◆ mpmc_typed_queue_example()

`void mpmc_typed_queue_example()`

Examples: typed_job_queue_sample.cpp.

Definition at line 116 of file typed_job_queue_sample.cpp.

References kcenon::thread::job::do_work(), and kcenon::thread::result< T >::is_ok().

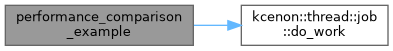
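The multi-producer, multi-consumer (MPMC) pattern named by this function can be sketched with standard threads as follows. This is an illustration under stated assumptions, not the body of `mpmc_typed_queue_example()`: `sketch_shared_queue` and `run_mpmc_sketch` are hypothetical names, and a mutex-guarded `std::queue` replaces the lock-free queue.

```cpp
#include <atomic>
#include <cstddef>
#include <mutex>
#include <optional>
#include <queue>
#include <thread>
#include <vector>

// Mutex-guarded queue used as a stand-in (assumption: the real queue is lock-free).
class sketch_shared_queue {
public:
    void push(int value) {
        std::lock_guard<std::mutex> lock(mutex_);
        items_.push(value);
    }

    // Non-blocking pop: returns std::nullopt when the queue is empty.
    std::optional<int> try_pop() {
        std::lock_guard<std::mutex> lock(mutex_);
        if (items_.empty()) return std::nullopt;
        int value = items_.front();
        items_.pop();
        return value;
    }

private:
    std::mutex mutex_;
    std::queue<int> items_;
};

// Starts `producers` producer threads and `consumers` consumer threads,
// then returns how many items the consumers processed in total.
std::size_t run_mpmc_sketch(std::size_t producers, std::size_t consumers,
                            std::size_t items_per_producer) {
    sketch_shared_queue queue;
    std::atomic<std::size_t> consumed{0};
    const std::size_t total = producers * items_per_producer;

    std::vector<std::thread> threads;
    for (std::size_t p = 0; p < producers; ++p) {
        threads.emplace_back([&] {
            for (std::size_t i = 0; i < items_per_producer; ++i) {
                queue.push(static_cast<int>(i));
            }
        });
    }
    for (std::size_t c = 0; c < consumers; ++c) {
        threads.emplace_back([&] {
            // Keep polling until every produced item has been consumed.
            while (consumed.load(std::memory_order_acquire) < total) {
                if (queue.try_pop()) {
                    consumed.fetch_add(1, std::memory_order_release);
                } else {
                    std::this_thread::yield();
                }
            }
        });
    }
    for (auto& t : threads) t.join();
    return consumed.load();
}
```

The shared `consumed` counter doubles as the termination condition, which avoids a separate shutdown flag; a production queue would more likely block or back off instead of spinning with `yield()`.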

◆ performance_comparison_example()

`void performance_comparison_example()`

Examples: typed_job_queue_sample.cpp.

Definition at line 241 of file typed_job_queue_sample.cpp.

References kcenon::thread::completed, and kcenon::thread::job::do_work().

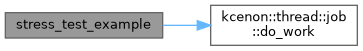
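A throughput comparison of this kind usually times a fixed number of operations with a monotonic clock. The sketch below shows one way to do that with `std::chrono::steady_clock`. The names `sketch_benchmark_result`, `measure`, and `run_comparison_sketch` are hypothetical, and the two `std::queue` variants are stand-ins chosen for illustration; this page does not say which queues the sample actually compares.

```cpp
#include <chrono>
#include <cstddef>
#include <mutex>
#include <queue>

struct sketch_benchmark_result {
    std::size_t operations;
    double seconds;

    double ops_per_second() const {
        return seconds > 0.0 ? static_cast<double>(operations) / seconds : 0.0;
    }
};

// Times `iterations` calls of `operation` using a monotonic clock.
template <typename Operation>
sketch_benchmark_result measure(std::size_t iterations, Operation operation) {
    const auto start = std::chrono::steady_clock::now();
    for (std::size_t i = 0; i < iterations; ++i) {
        operation(i);
    }
    const std::chrono::duration<double> elapsed =
        std::chrono::steady_clock::now() - start;
    return {iterations, elapsed.count()};
}

struct sketch_comparison {
    sketch_benchmark_result unlocked;
    sketch_benchmark_result locked;
};

// Compares push+pop round trips on a std::queue with and without a mutex.
// Both variants are illustrative stand-ins, not the queues from the sample.
sketch_comparison run_comparison_sketch(std::size_t iterations) {
    std::queue<std::size_t> plain;
    std::queue<std::size_t> guarded;
    std::mutex mutex;

    auto unlocked = measure(iterations, [&](std::size_t i) {
        plain.push(i);
        plain.pop();
    });
    auto locked = measure(iterations, [&](std::size_t i) {
        std::lock_guard<std::mutex> lock(mutex);
        guarded.push(i);
        guarded.pop();
    });
    return {unlocked, locked};
}
```

Single-threaded timings like these only show the uncontended cost of locking; a meaningful comparison against a lock-free queue would also need to run under contention from several threads.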

◆ stress_test_example()

`void stress_test_example()`

Examples: typed_job_queue_sample.cpp.

Definition at line 436 of file typed_job_queue_sample.cpp.

References kcenon::thread::job::do_work().

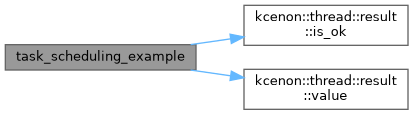

◆ task_scheduling_example()

`void task_scheduling_example()`

Examples: typed_job_queue_sample.cpp.

Definition at line 309 of file typed_job_queue_sample.cpp.

References kcenon::thread::completed, kcenon::thread::created, kcenon::thread::failed, kcenon::thread::result< T >::is_ok(), kcenon::thread::latency, system_running, and kcenon::thread::result< T >::value().