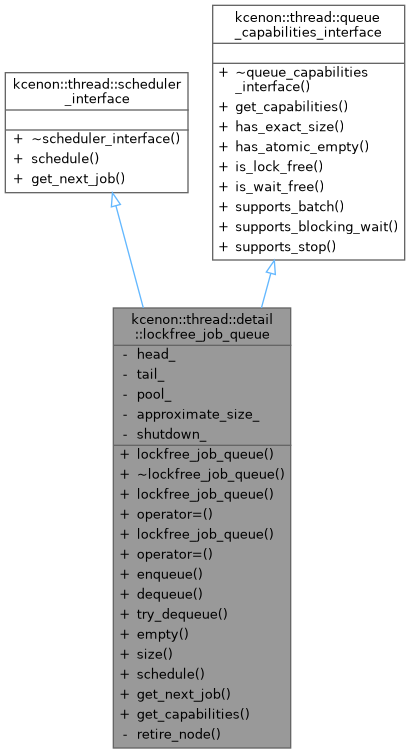

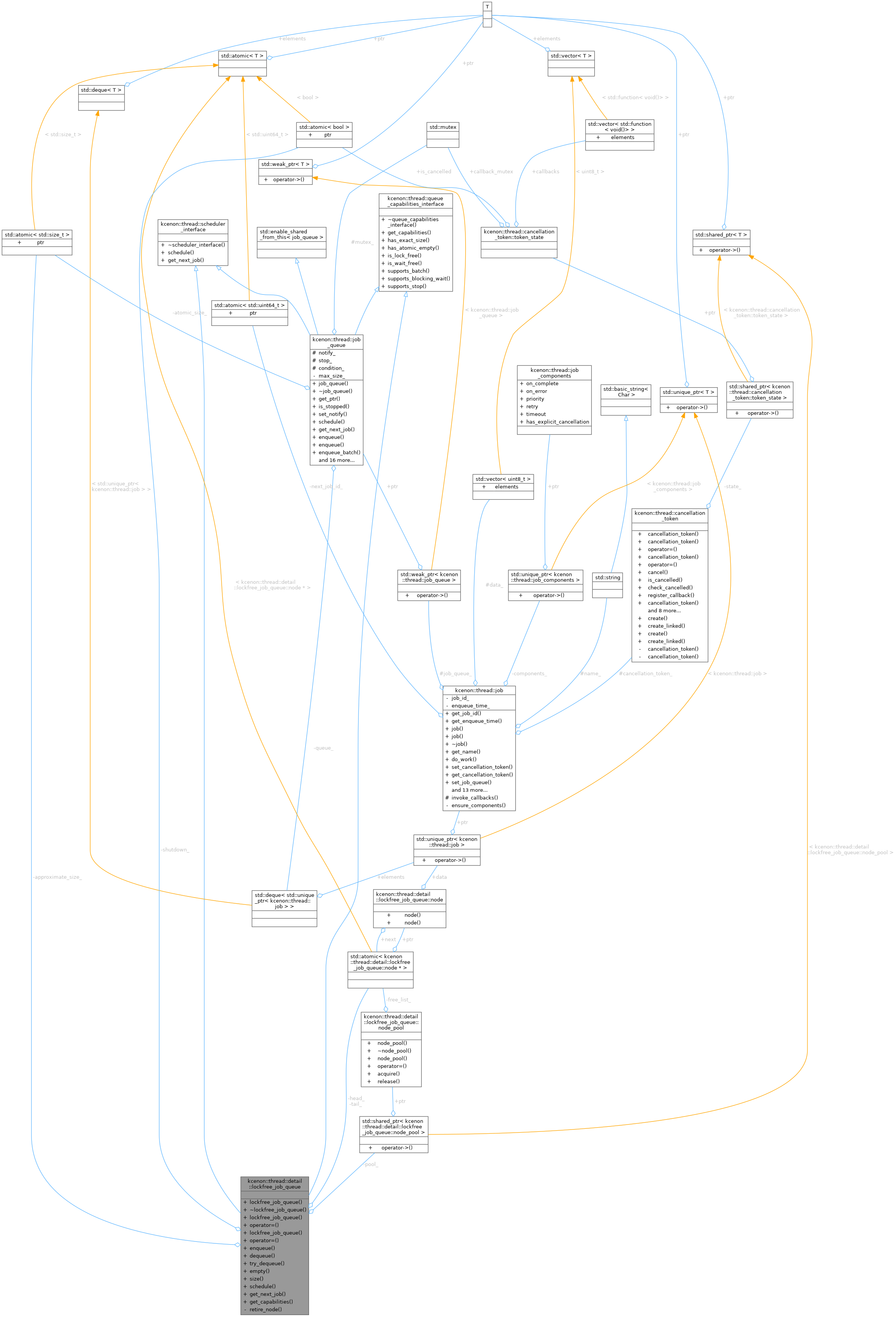

Lock-free Multi-Producer Multi-Consumer (MPMC) job queue (internal implementation).

#include <lockfree_job_queue.h>

Classes | |

| struct | node |

| Internal queue node structure. | |

| class | node_pool |

| Lock-free node freelist (Treiber stack) for node recycling. | |

Public Member Functions | |

| lockfree_job_queue () | |

| Constructs an empty lock-free job queue. | |

| ~lockfree_job_queue () | |

| Destructor. | |

| lockfree_job_queue (const lockfree_job_queue &)=delete | |

| lockfree_job_queue & | operator= (const lockfree_job_queue &)=delete |

| lockfree_job_queue (lockfree_job_queue &&)=delete | |

| lockfree_job_queue & | operator= (lockfree_job_queue &&)=delete |

| auto | enqueue (std::unique_ptr< job > &&job) -> common::VoidResult |

| Enqueues a job into the queue (thread-safe) | |

| auto | dequeue () -> common::Result< std::unique_ptr< job > > |

| Dequeues a job from the queue (thread-safe) | |

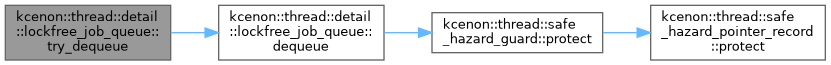

| auto | try_dequeue () -> common::Result< std::unique_ptr< job > > |

| Tries to dequeue a job without blocking. | |

| auto | empty () const -> bool |

| Checks if the queue is empty. | |

| auto | size () const -> std::size_t |

| Gets approximate queue size. | |

| auto | schedule (std::unique_ptr< job > &&work) -> common::VoidResult override |

| Schedule a job (delegates to enqueue) | |

| auto | get_next_job () -> common::Result< std::unique_ptr< job > > override |

| Get next job (delegates to dequeue) | |

| auto | get_capabilities () const -> queue_capabilities override |

| Returns capabilities of lockfree_job_queue. | |

Public Member Functions inherited from kcenon::thread::scheduler_interface | |

| virtual | ~scheduler_interface ()=default |

Public Member Functions inherited from kcenon::thread::queue_capabilities_interface | |

| virtual | ~queue_capabilities_interface ()=default |

| auto | has_exact_size () const -> bool |

| Check if size() returns exact values. | |

| auto | has_atomic_empty () const -> bool |

| Check if empty() check is atomic. | |

| auto | is_lock_free () const -> bool |

| Check if this is a lock-free implementation. | |

| auto | is_wait_free () const -> bool |

| Check if this is a wait-free implementation. | |

| auto | supports_batch () const -> bool |

| Check if batch operations are supported. | |

| auto | supports_blocking_wait () const -> bool |

| Check if blocking wait is supported. | |

| auto | supports_stop () const -> bool |

| Check if stop signaling is supported. | |

Private Types | |

| using | node_hp_domain = typed_safe_hazard_domain<node> |

Private Member Functions | |

| void | retire_node (node *n) |

| Retire a node through hazard pointers, recycling via pool on reclamation. | |

Private Attributes | |

| std::atomic< node * > | head_ |

| std::atomic< node * > | tail_ |

| std::shared_ptr< node_pool > | pool_ |

| std::atomic< std::size_t > | approximate_size_ {0} |

| std::atomic< bool > | shutdown_ {false} |

Detailed Description

Lock-free Multi-Producer Multi-Consumer (MPMC) job queue (Internal implementation)

This class implements a lock-free MPMC queue using the Michael-Scott algorithm, with Safe Hazard Pointers for memory reclamation. It uses explicit memory ordering to remain correct on architectures with weak memory models (such as ARM).

Algorithm: Michael-Scott Queue (1996)

Memory Reclamation: Safe Hazard Pointers with explicit memory ordering

Key Features:

- True lock-free operation (no mutexes, no locks)

- Safe concurrent access from multiple producers and consumers

- Automatic memory reclamation using Safe Hazard Pointers

- Correct memory ordering for weak memory model architectures (ARM)

- No TLS node pool (eliminates destructor ordering issues)

- ABA problem prevention through HP-based protection

Performance Characteristics:

- Enqueue: O(1) amortized, wait-free

- Dequeue: O(1) amortized, lock-free

- Memory overhead: ~256 bytes per thread (hazard pointers)

Thread Safety:

- All methods are thread-safe

- Can be called concurrently from any number of threads

- Uses atomic operations with acquire/release semantics
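The acquire/release pairing mentioned above can be illustrated in isolation. The following is a minimal sketch, not code from this library: `payload` and `publish_and_read` are hypothetical names. It shows the general pattern a lock-free queue relies on: a producer fills in plain data, then publishes a pointer with a release store, and a consumer that observes the pointer with an acquire load is guaranteed to see the data written before the publish.

```cpp
#include <atomic>
#include <cassert>
#include <thread>

// Hypothetical payload type, standing in for a queue node's contents.
struct payload {
    int value = 0;
};

// Publishes `data` from one thread and reads it from another.
// Returns the value the consumer observed.
inline int publish_and_read() {
    payload data;
    std::atomic<payload*> slot{nullptr};
    int seen = -1;

    std::thread producer([&] {
        data.value = 42;                               // plain, non-atomic write
        slot.store(&data, std::memory_order_release);  // publish the pointer
    });

    std::thread consumer([&] {
        payload* p = nullptr;
        // The acquire load pairs with the release store above.
        while ((p = slot.load(std::memory_order_acquire)) == nullptr) {
            std::this_thread::yield();
        }
        seen = p->value;  // the write to data.value happens-before this read
    });

    producer.join();
    consumer.join();
    assert(seen == 42);
    return seen;
}
```

With `std::memory_order_relaxed` on both sides the consumer could still see the pointer, but nothing would order the read of `value` after the producer's write, which is the kind of bug that tends to surface only on weakly ordered hardware.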

- Note

- This implementation is production-safe and resolves TICKET-001 (TLS bug) and TICKET-002 (weak memory model safety).

- See also

- lockfree_job_queue_test.cpp for usage examples

- Examples

- queue_capabilities_sample.cpp.

Definition at line 63 of file lockfree_job_queue.h.

Member Typedef Documentation

◆ node_hp_domain

| using node_hp_domain = typed_safe_hazard_domain<node> | private |

Definition at line 312 of file lockfree_job_queue.h.

Constructor & Destructor Documentation

◆ lockfree_job_queue() [1/3]

| kcenon::thread::detail::lockfree_job_queue::lockfree_job_queue | ( | ) |

Constructs an empty lock-free job queue.

Initializes the queue with a dummy node to simplify the algorithm. The dummy node is never removed, allowing concurrent enqueue/dequeue.

Definition at line 41 of file lockfree_job_queue.cpp.

References approximate_size_, head_, and tail_.
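To make the role of the dummy node concrete, here is a simplified sketch of a Michael-Scott queue. It is an illustration under stated assumptions, not this class's implementation: `ms_queue_sketch` and `fifo_demo` are hypothetical names, the payload is a plain `int` rather than `std::unique_ptr<job>`, all atomics use the default sequentially consistent ordering, and unlinked nodes are parked on a retired list and freed only in the destructor instead of being reclaimed through hazard pointers.

```cpp
#include <atomic>
#include <cassert>
#include <optional>

// Illustrative Michael-Scott queue of ints with a dummy node (see assumptions
// in the text above). Nodes are never freed while the queue is in use.
class ms_queue_sketch {
    struct node {
        explicit node(int v = 0) : value(v) {}
        int value;
        std::atomic<node*> next{nullptr};
        node* retired_next{nullptr};  // link used only by the retired list
    };

    std::atomic<node*> head_;
    std::atomic<node*> tail_;
    std::atomic<node*> retired_{nullptr};

    // Push-only list (no concurrent pops), drained in the destructor.
    void retire(node* n) {
        n->retired_next = retired_.load();
        while (!retired_.compare_exchange_weak(n->retired_next, n)) {}
    }

public:
    ms_queue_sketch() {
        node* dummy = new node();  // queue is empty while head_->next is null
        head_.store(dummy);
        tail_.store(dummy);
    }
    ms_queue_sketch(const ms_queue_sketch&) = delete;
    ms_queue_sketch& operator=(const ms_queue_sketch&) = delete;

    // Must run only after all other threads have stopped using the queue.
    ~ms_queue_sketch() {
        for (node* n = head_.load(); n != nullptr;) {
            node* next = n->next.load();
            delete n;
            n = next;
        }
        for (node* n = retired_.load(); n != nullptr;) {
            node* next = n->retired_next;
            delete n;
            n = next;
        }
    }

    void enqueue(int v) {
        node* fresh = new node(v);
        for (;;) {
            node* tail = tail_.load();
            node* next = tail->next.load();
            if (next != nullptr) {  // tail_ lags: help move it forward
                tail_.compare_exchange_weak(tail, next);
                continue;
            }
            node* expected = nullptr;
            if (tail->next.compare_exchange_weak(expected, fresh)) {
                tail_.compare_exchange_strong(tail, fresh);  // may fail if helped
                return;
            }
        }
    }

    std::optional<int> dequeue() {
        for (;;) {
            node* head = head_.load();
            node* tail = tail_.load();
            node* next = head->next.load();
            if (next == nullptr) return std::nullopt;  // only the dummy remains
            if (head == tail) {  // tail_ lags behind an already linked node
                tail_.compare_exchange_weak(tail, next);
                continue;
            }
            int v = next->value;  // read before swinging head_
            if (head_.compare_exchange_weak(head, next)) {
                retire(head);  // old dummy; `next` now serves as the dummy
                return v;
            }
        }
    }
};

// Single-threaded walk-through of FIFO behaviour.
inline int fifo_demo() {
    ms_queue_sketch q;
    assert(!q.dequeue().has_value());
    q.enqueue(1);
    q.enqueue(2);
    q.enqueue(3);
    int first = *q.dequeue();
    int second = *q.dequeue();
    return first * 10 + second;
}
```

Because `head_` always points at a dummy, enqueuers only ever touch `tail_` and dequeuers mostly touch `head_`, so neither side has to special-case an empty list. Deferring all frees to the destructor sidesteps use-after-free and ABA in the sketch at the cost of unbounded memory growth, which is the problem that hazard-pointer reclamation addresses in the real class.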

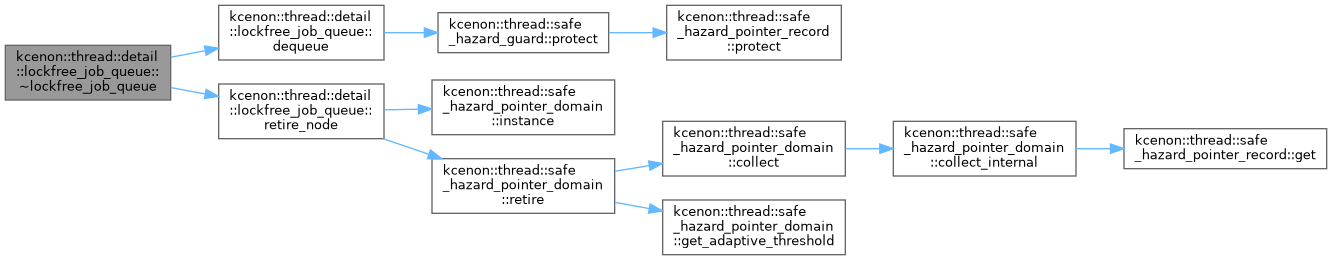

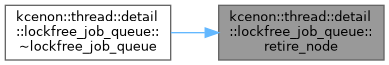

◆ ~lockfree_job_queue()

| kcenon::thread::detail::lockfree_job_queue::~lockfree_job_queue | ( | ) |

Destructor.

Drains the queue and reclaims all nodes. Thread-safe even if other threads are still accessing the queue (they will get errors).

Definition at line 53 of file lockfree_job_queue.cpp.

References dequeue(), head_, retire_node(), and shutdown_.

◆ lockfree_job_queue() [2/3]

| lockfree_job_queue (const lockfree_job_queue &) | delete |

◆ lockfree_job_queue() [3/3]

| lockfree_job_queue (lockfree_job_queue &&) | delete |

Member Function Documentation

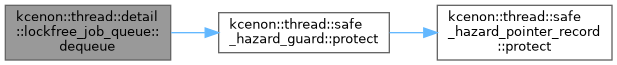

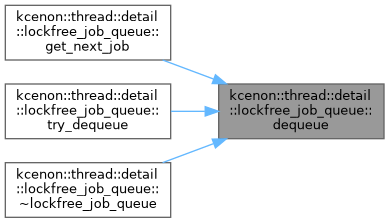

◆ dequeue()

| auto dequeue () -> common::Result< std::unique_ptr< job > > | nodiscard |

Dequeues a job from the queue (thread-safe)

- Returns

- common::Result<std::unique_ptr<job>> The dequeued job or error

- Note

- Lock-free operation (system-wide progress guaranteed)

- Returns empty result if queue is empty (not an error)

- Uses Hazard Pointers to protect nodes from premature deletion

- Retired nodes are eventually reclaimed by the HP domain

Time Complexity: O(1) amortized

Memory Ordering: acquire/release semantics

Definition at line 144 of file lockfree_job_queue.cpp.

References kcenon::thread::detail::lockfree_job_queue::node::data, kcenon::thread::detail::lockfree_job_queue::node::next, kcenon::thread::safe_hazard_guard::protect(), and kcenon::thread::queue_empty.

Referenced by get_next_job(), try_dequeue(), and ~lockfree_job_queue().

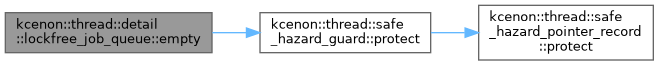

◆ empty()

| auto empty () const -> bool | nodiscard |

Checks if the queue is empty.

- Returns

- true if queue appears empty, false otherwise

- Note

- This is a snapshot view; queue may change immediately after

- Use for hints only, not for synchronization

Definition at line 248 of file lockfree_job_queue.cpp.

References head_, kcenon::thread::detail::lockfree_job_queue::node::next, kcenon::thread::safe_hazard_guard::protect(), and kcenon::thread::retry.

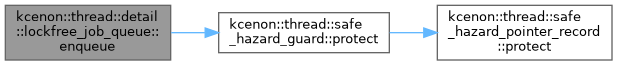

◆ enqueue()

| auto enqueue (std::unique_ptr< job > &&job) -> common::VoidResult | nodiscard |

Enqueues a job into the queue (thread-safe)

- Parameters

- job: Unique pointer to the job to enqueue

- Returns

- common::VoidResult Success or error

- Note

- Wait-free operation (bounded number of steps)

- Takes ownership of the job pointer

- Never blocks, always makes progress

Time Complexity: O(1) amortized

Memory Ordering: release semantics for visibility

Definition at line 76 of file lockfree_job_queue.cpp.

References kcenon::thread::invalid_argument, kcenon::thread::detail::lockfree_job_queue::node::next, kcenon::thread::safe_hazard_guard::protect(), kcenon::thread::queue_busy, and kcenon::thread::retry.

Referenced by schedule().

◆ get_capabilities()

| auto get_capabilities () const -> queue_capabilities | inline, nodiscard, override, virtual |

Returns capabilities of lockfree_job_queue.

- Returns

- queue_capabilities with lock-free characteristics

Capabilities:

- exact_size: false (approximate only due to concurrent modifications)

- atomic_empty_check: false (snapshot view, may change immediately)

- lock_free: true (uses lock-free Michael-Scott algorithm)

- wait_free: false (enqueue is wait-free, dequeue is lock-free)

- supports_batch: false (no batch operations available)

- supports_blocking_wait: false (spin-wait only via try_dequeue)

- supports_stop: false (no stop() method available)

Reimplemented from kcenon::thread::queue_capabilities_interface.

Definition at line 197 of file lockfree_job_queue.h.
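Callers that work with several queue types can branch on these flags instead of hard-coding behaviour. The sketch below is an assumption-laden illustration: `capabilities_sketch` is a hypothetical stand-in whose field names are taken from the list above and whose defaults mirror the values documented for this class, and `wait_strategy`, `choose_wait_strategy`, and `capabilities_demo` are invented for the example. The real `queue_capabilities` type may be laid out differently.

```cpp
#include <cassert>

// Hypothetical stand-in for queue_capabilities; field names follow the list
// above, defaults mirror the values documented for lockfree_job_queue.
struct capabilities_sketch {
    bool exact_size = false;
    bool atomic_empty_check = false;
    bool lock_free = true;
    bool wait_free = false;
    bool supports_batch = false;
    bool supports_blocking_wait = false;
    bool supports_stop = false;
};

// Hypothetical consumer-side policy.
enum class wait_strategy { block, poll };

// Block only when the queue advertises a blocking wait; otherwise poll.
constexpr wait_strategy choose_wait_strategy(const capabilities_sketch& caps) {
    return caps.supports_blocking_wait ? wait_strategy::block
                                       : wait_strategy::poll;
}

inline void capabilities_demo() {
    capabilities_sketch lockfree_caps;  // defaults as documented above
    assert(choose_wait_strategy(lockfree_caps) == wait_strategy::poll);

    capabilities_sketch blocking_caps;
    blocking_caps.supports_blocking_wait = true;  // e.g. a mutex-based queue
    assert(choose_wait_strategy(blocking_caps) == wait_strategy::block);
}
```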

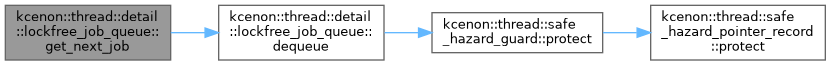

◆ get_next_job()

| auto get_next_job () -> common::Result< std::unique_ptr< job > > | inline, override, virtual |

Get next job (delegates to dequeue)

- Returns

- common::Result<std::unique_ptr<job>> The dequeued job or error

- Note

- Part of scheduler_interface

Implements kcenon::thread::scheduler_interface.

Definition at line 173 of file lockfree_job_queue.h.

References dequeue().

◆ operator=() [1/2]

| lockfree_job_queue & operator= (const lockfree_job_queue &) | delete |

◆ operator=() [2/2]

| lockfree_job_queue & operator= (lockfree_job_queue &&) | delete |

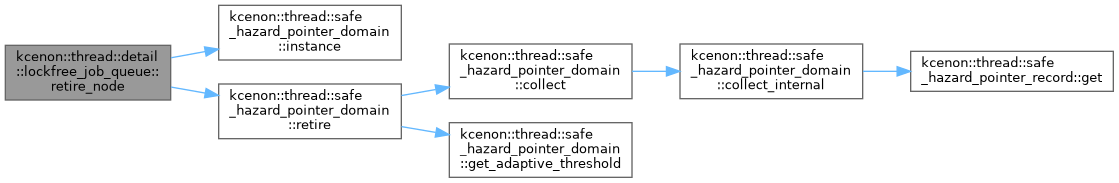

◆ retire_node()

| void retire_node (node *n) | private |

Retire a node through hazard pointers, recycling via pool on reclamation.

Definition at line 286 of file lockfree_job_queue.cpp.

References kcenon::thread::safe_hazard_pointer_domain::instance(), pool_, and kcenon::thread::safe_hazard_pointer_domain::retire().

Referenced by ~lockfree_job_queue().

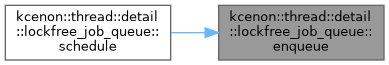

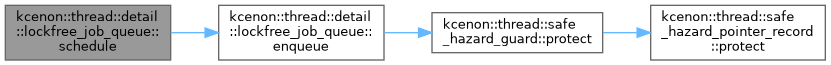

◆ schedule()

| auto schedule (std::unique_ptr< job > &&work) -> common::VoidResult | inline, override, virtual |

Schedule a job (delegates to enqueue)

- Parameters

- work: Job to schedule

- Returns

- common::VoidResult Success or error

- Note

- Part of scheduler_interface

Implements kcenon::thread::scheduler_interface.

Definition at line 162 of file lockfree_job_queue.h.

References enqueue().

◆ size()

| auto size () const -> std::size_t | nodiscard |

Gets approximate queue size.

- Returns

- Approximate number of jobs in queue

- Note

- This is a best-effort estimate due to concurrent modifications

- Use for monitoring/debugging, not for correctness

Definition at line 280 of file lockfree_job_queue.cpp.

References approximate_size_.

◆ try_dequeue()

| auto try_dequeue () -> common::Result< std::unique_ptr< job > > | inline, nodiscard |

Tries to dequeue a job without blocking.

- Returns

- common::Result<std::unique_ptr<job>> The dequeued job or empty

- Note

- Alias for dequeue() (lock-free queues never block)

- Provided for API compatibility with mutex-based queue

Definition at line 126 of file lockfree_job_queue.h.

References dequeue().
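Since neither `dequeue()` nor `try_dequeue()` blocks, a consumer that wants to wait for work has to poll. The following sketch shows one way to do that with a bounded yield-then-sleep backoff. It is not part of this library: `poll_with_backoff` and `backoff_demo` are hypothetical, and the `try_pop` callable returning `std::optional<int>` stands in for a call to `try_dequeue()`, whose `common::Result` type is not reproduced here.

```cpp
#include <algorithm>
#include <cassert>
#include <chrono>
#include <functional>
#include <optional>
#include <thread>

// Polls `try_pop` until it yields a value or `max_attempts` polls have failed.
// The first few misses only yield; later misses sleep with a doubling delay.
inline std::optional<int> poll_with_backoff(
    const std::function<std::optional<int>()>& try_pop, int max_attempts) {
    auto delay = std::chrono::microseconds(1);
    const auto max_delay = std::chrono::microseconds(256);

    for (int attempt = 0; attempt < max_attempts; ++attempt) {
        if (auto item = try_pop()) {
            return item;  // got work, stop polling
        }
        if (attempt < 8) {
            std::this_thread::yield();  // cheap: work may arrive very soon
        } else {
            std::this_thread::sleep_for(delay);
            delay = std::min(delay * 2, max_delay);  // capped exponential backoff
        }
    }
    return std::nullopt;  // gave up; the caller decides what to do next
}

// A source that is empty for the first three polls, then produces 7.
inline int backoff_demo() {
    int calls = 0;
    auto flaky = [&calls]() -> std::optional<int> {
        if (++calls < 4) return std::nullopt;
        return 7;
    };
    auto got = poll_with_backoff(flaky, 10);
    assert(got.has_value());
    return *got;
}
```

The attempt limit and delay cap are arbitrary illustration values. A pure spin loop has lower latency but burns a core while the queue is empty, so some form of backoff is usually worth the small delay.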

Member Data Documentation

◆ approximate_size_

| std::atomic< std::size_t > approximate_size_ {0} | mutable, private |

Definition at line 315 of file lockfree_job_queue.h.

Referenced by lockfree_job_queue(), and size().

◆ head_

| std::atomic< node * > head_ | private |

Definition at line 304 of file lockfree_job_queue.h.

Referenced by empty(), lockfree_job_queue(), and ~lockfree_job_queue().

◆ pool_

| std::shared_ptr< node_pool > pool_ | private |

Definition at line 308 of file lockfree_job_queue.h.

Referenced by retire_node().

◆ shutdown_

| std::atomic< bool > shutdown_ {false} | private |

Definition at line 318 of file lockfree_job_queue.h.

Referenced by ~lockfree_job_queue().

◆ tail_

| std::atomic< node * > tail_ | private |

Definition at line 305 of file lockfree_job_queue.h.

Referenced by lockfree_job_queue().

The documentation for this class was generated from the following files:

- include/kcenon/thread/lockfree/lockfree_job_queue.h

- src/lockfree/lockfree_job_queue.cpp