Manages automatic scaling of thread pool workers based on load metrics.

#include <autoscaler.h>

Public Member Functions

| Member | Description |
| --- | --- |
| autoscaler (thread_pool &pool, autoscaling_policy policy={}) | Constructs an autoscaler for the given thread pool. |
| ~autoscaler () | Destructor. Stops the monitor thread if running. |
| autoscaler (const autoscaler &)=delete | |
| autoscaler & operator= (const autoscaler &)=delete | |
| autoscaler (autoscaler &&)=delete | |
| autoscaler & operator= (autoscaler &&)=delete | |
| auto start () -> void | Starts the autoscaling monitor thread. |
| auto stop () -> void | Stops the autoscaling monitor thread. |
| auto is_active () const -> bool | Checks if the autoscaler is currently active. |
| auto evaluate_now () -> scaling_decision | Manually triggers a scaling evaluation. |
| auto scale_to (std::size_t target_workers) -> common::VoidResult | Manually scales to a specific worker count. |
| auto scale_up () -> common::VoidResult | Manually scales up by the configured increment. |
| auto scale_down () -> common::VoidResult | Manually scales down by the configured increment. |
| auto set_policy (autoscaling_policy policy) -> void | Updates the autoscaling policy. |
| auto get_policy () const -> const autoscaling_policy & | Gets the current autoscaling policy. |
| auto get_current_metrics () const -> scaling_metrics_sample | Collects current metrics from the thread pool. |
| auto get_metrics_history (std::size_t count=60) const -> std::vector< scaling_metrics_sample > | Gets historical metrics samples. |
| auto get_stats () const -> autoscaling_stats | Gets autoscaling statistics. |
| auto reset_stats () -> void | Resets autoscaling statistics. |

Private Member Functions

| Member | Description |
| --- | --- |
| auto monitor_loop () -> void | Main monitoring loop running in the background thread. |
| auto collect_metrics () const -> scaling_metrics_sample | Collects current metrics from the pool. |
| auto make_decision (const std::vector< scaling_metrics_sample > &samples) const -> scaling_decision | Makes a scaling decision based on recent samples. |
| auto execute_scaling (const scaling_decision &decision) -> void | Executes a scaling decision. |
| auto can_scale_up () const -> bool | Checks if scale-up cooldown has elapsed. |
| auto can_scale_down () const -> bool | Checks if scale-down cooldown has elapsed. |
| auto add_workers (std::size_t count) -> common::VoidResult | Adds workers to the pool. |
| auto remove_workers (std::size_t count) -> common::VoidResult | Removes workers from the pool. |

Private Attributes

| Type | Name |
| --- | --- |
| thread_pool & | pool_ |
| autoscaling_policy | policy_ |
| std::atomic< bool > | running_ {false} |
| std::unique_ptr< std::thread > | monitor_thread_ |
| std::mutex | mutex_ |
| std::condition_variable | cv_ |
| std::deque< scaling_metrics_sample > | metrics_history_ |
| std::mutex | history_mutex_ |
| std::chrono::steady_clock::time_point | last_scale_up_time_ |
| std::chrono::steady_clock::time_point | last_scale_down_time_ |
| autoscaling_stats | stats_ |
| std::mutex | stats_mutex_ |
| std::uint64_t | last_jobs_completed_ {0} |
| std::uint64_t | last_jobs_submitted_ {0} |
| std::chrono::steady_clock::time_point | last_sample_time_ |

Detailed Description

Manages automatic scaling of thread pool workers based on load metrics.

The autoscaler monitors thread pool metrics and automatically adjusts the number of workers to match workload demands. It uses a background monitor thread to periodically collect metrics and make scaling decisions.

Design Principles

- Non-intrusive: Scaling decisions are made asynchronously

- Configurable: All thresholds and behaviors are customizable

- Graceful: Scale-down removes workers only when safe

- Observable: Provides statistics and callbacks for monitoring

State Machine

Thread Safety

All public methods are thread-safe and can be called from any thread.

Usage Example

Definition at line 94 of file autoscaler.h.

Constructor & Destructor Documentation

◆ autoscaler() [1/3]

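| kcenon::thread::autoscaler::autoscaler | ( | thread_pool & | pool, | autoscaling_policy | policy = {} | ) | explicit |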

Constructs an autoscaler for the given thread pool.

- Parameters

- pool: Reference to the thread pool to manage.

- policy: Autoscaling policy configuration.

Definition at line 16 of file autoscaler.cpp.

References kcenon::thread::thread_pool::get_active_worker_count(), kcenon::thread::autoscaling_stats::min_workers, kcenon::thread::autoscaling_stats::peak_workers, pool_, stats_, and stats_mutex_.

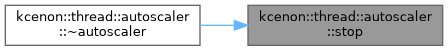

◆ ~autoscaler()

| kcenon::thread::autoscaler::~autoscaler | ( | ) |

Destructor. Stops the monitor thread if running.

Definition at line 27 of file autoscaler.cpp.

References stop().

◆ autoscaler() [2/3]

| kcenon::thread::autoscaler::autoscaler | ( | const autoscaler & | ) | delete |

◆ autoscaler() [3/3]

| kcenon::thread::autoscaler::autoscaler | ( | autoscaler && | ) | delete |

Member Function Documentation

◆ add_workers()

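| auto kcenon::thread::autoscaler::add_workers | ( | std::size_t | count | ) | -> common::VoidResult | private |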

Adds workers to the pool.

- Parameters

- count: Number of workers to add.

- Returns

- Error if operation fails.

Definition at line 542 of file autoscaler.cpp.

◆ can_scale_down()

| auto kcenon::thread::autoscaler::can_scale_down | ( | ) | const -> bool | nodiscard private |

Checks if scale-down cooldown has elapsed.

- Returns

- true if scale-down is allowed.

Definition at line 528 of file autoscaler.cpp.

References kcenon::thread::thread_pool::get_active_worker_count(), last_scale_down_time_, kcenon::thread::autoscaling_policy::min_workers, policy_, pool_, and kcenon::thread::autoscaling_policy::scale_down_cooldown.

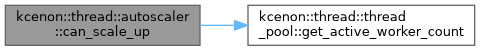

◆ can_scale_up()

| auto kcenon::thread::autoscaler::can_scale_up | ( | ) | const -> bool | nodiscard private |

Checks if scale-up cooldown has elapsed.

- Returns

- true if scale-up is allowed.

Definition at line 514 of file autoscaler.cpp.

References kcenon::thread::thread_pool::get_active_worker_count(), last_scale_up_time_, kcenon::thread::autoscaling_policy::max_workers, policy_, pool_, and kcenon::thread::autoscaling_policy::scale_up_cooldown.

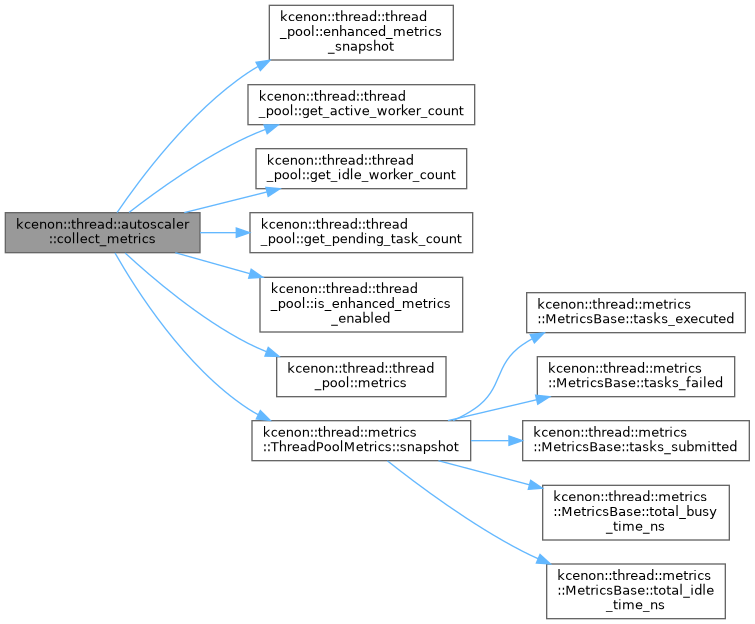

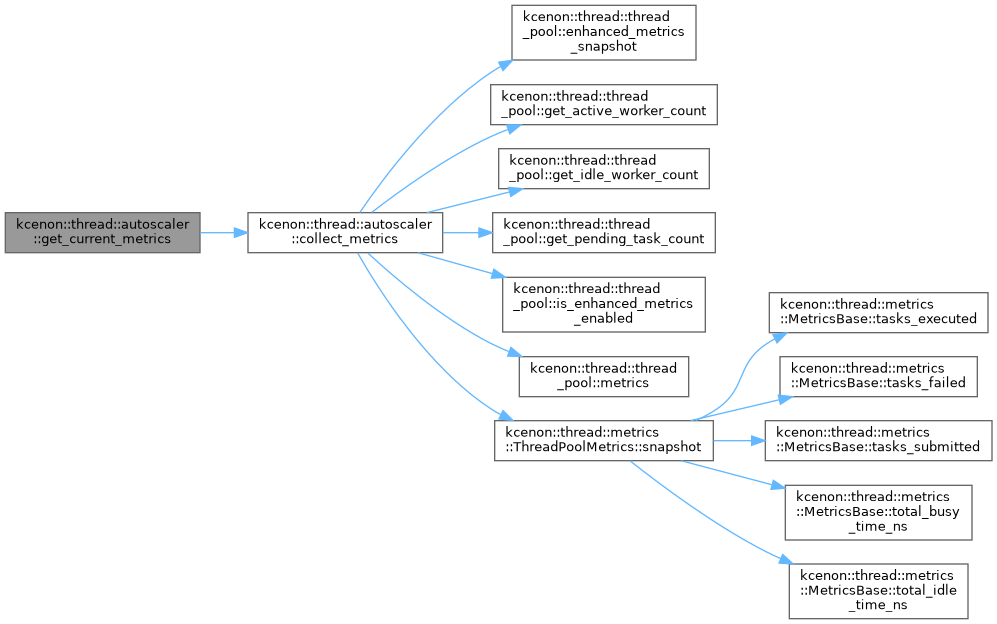
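As a rough illustration of the checks in can_scale_up() and can_scale_down(), the sketch below combines a worker-limit test with an elapsed-cooldown test, which is what the reference lists above suggest (worker count, min_workers/max_workers, cooldown, and last scale time). It is not the library's implementation: the helper names cooldown_elapsed, can_scale_up_sketch, and can_scale_down_sketch are hypothetical, the cooldown is assumed to be expressed in std::chrono::seconds, and the current time is passed in explicitly so the logic can be exercised deterministically.

```cpp
#include <chrono>
#include <cstddef>

using steady_time = std::chrono::steady_clock::time_point;

// Hypothetical helper: true once at least `cooldown` has passed since the last scaling action.
inline bool cooldown_elapsed(steady_time last_scale_time,
                             std::chrono::seconds cooldown,
                             steady_time now) {
    return now - last_scale_time >= cooldown;
}

// Assumed rule: scale-up is allowed below max_workers once the scale-up cooldown has elapsed.
inline bool can_scale_up_sketch(std::size_t current_workers,
                                std::size_t max_workers,
                                steady_time last_scale_up_time,
                                std::chrono::seconds scale_up_cooldown,
                                steady_time now) {
    return current_workers < max_workers &&
           cooldown_elapsed(last_scale_up_time, scale_up_cooldown, now);
}

// Assumed rule: scale-down is allowed above min_workers once the scale-down cooldown has elapsed.
inline bool can_scale_down_sketch(std::size_t current_workers,
                                  std::size_t min_workers,
                                  steady_time last_scale_down_time,
                                  std::chrono::seconds scale_down_cooldown,
                                  steady_time now) {
    return current_workers > min_workers &&
           cooldown_elapsed(last_scale_down_time, scale_down_cooldown, now);
}
```

Keeping separate timestamps for the two directions, as the last_scale_up_time_ and last_scale_down_time_ members do, lets a policy react quickly to load spikes while shrinking the pool more cautiously.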

◆ collect_metrics()

| auto kcenon::thread::autoscaler::collect_metrics | ( | ) | const -> scaling_metrics_sample | nodiscard private |

Collects current metrics from the pool.

- Returns

- Collected metrics sample.

Definition at line 289 of file autoscaler.cpp.

References kcenon::thread::scaling_metrics_sample::active_workers, kcenon::thread::thread_pool::enhanced_metrics_snapshot(), kcenon::thread::thread_pool::get_active_worker_count(), kcenon::thread::thread_pool::get_idle_worker_count(), kcenon::thread::thread_pool::get_pending_task_count(), kcenon::thread::thread_pool::is_enhanced_metrics_enabled(), kcenon::thread::scaling_metrics_sample::jobs_completed, kcenon::thread::scaling_metrics_sample::jobs_submitted, last_jobs_completed_, last_jobs_submitted_, last_sample_time_, kcenon::thread::thread_pool::metrics(), kcenon::thread::scaling_metrics_sample::p95_latency_ms, pool_, kcenon::thread::scaling_metrics_sample::queue_depth, kcenon::thread::scaling_metrics_sample::queue_depth_per_worker, kcenon::thread::metrics::ThreadPoolMetrics::snapshot(), kcenon::thread::scaling_metrics_sample::throughput_per_second, kcenon::thread::scaling_metrics_sample::timestamp, kcenon::thread::scaling_metrics_sample::utilization, and kcenon::thread::scaling_metrics_sample::worker_count.

Referenced by get_current_metrics().
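The fields referenced above (utilization, queue_depth_per_worker, throughput_per_second, together with last_jobs_completed_ and last_sample_time_) suggest that some values in a sample are derived from raw counters. The sketch below shows one plausible way to derive them. The formulas, the derived_metrics struct, the derive_metrics function, and the busy_workers parameter are assumptions made for illustration and are not taken from autoscaler.cpp.

```cpp
#include <cassert>
#include <chrono>
#include <cstddef>
#include <cstdint>

// Hypothetical subset of a metrics sample holding only the derived values.
struct derived_metrics {
    double utilization = 0.0;             // busy workers / total workers
    double queue_depth_per_worker = 0.0;  // pending tasks / total workers
    double throughput_per_second = 0.0;   // completed jobs per second since the last sample
};

// Derives ratios and a rate from raw counters, guarding every division.
inline derived_metrics derive_metrics(std::size_t worker_count,
                                      std::size_t busy_workers,
                                      std::size_t queue_depth,
                                      std::uint64_t jobs_completed,
                                      std::uint64_t last_jobs_completed,
                                      std::chrono::duration<double> elapsed) {
    assert(busy_workers <= worker_count);
    derived_metrics out;
    if (worker_count > 0) {
        const auto workers = static_cast<double>(worker_count);
        out.utilization = static_cast<double>(busy_workers) / workers;
        out.queue_depth_per_worker = static_cast<double>(queue_depth) / workers;
    }
    // A rate needs a positive interval and a counter that has not gone backwards.
    if (elapsed.count() > 0.0 && jobs_completed >= last_jobs_completed) {
        out.throughput_per_second =
            static_cast<double>(jobs_completed - last_jobs_completed) / elapsed.count();
    }
    return out;
}
```

Storing the previous counter value and sample time between calls is what turns a monotonically increasing job counter into a per-second rate.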

◆ evaluate_now()

| auto kcenon::thread::autoscaler::evaluate_now | ( | ) | -> scaling_decision | nodiscard |

Manually triggers a scaling evaluation.

- Returns

- The scaling decision that would be made.

This call only computes the decision and does not execute it; use scale_to(), scale_up(), or scale_down() to change the worker count.

Definition at line 75 of file autoscaler.cpp.

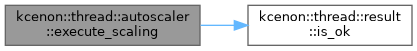

◆ execute_scaling()

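| auto kcenon::thread::autoscaler::execute_scaling | ( | const scaling_decision & | decision | ) | -> void | private |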

Executes a scaling decision.

- Parameters

- decision: The decision to execute.

Definition at line 465 of file autoscaler.cpp.

References kcenon::thread::down, kcenon::thread::result< T >::is_ok(), and kcenon::thread::up.

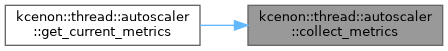

◆ get_current_metrics()

| auto kcenon::thread::autoscaler::get_current_metrics | ( | ) | const -> scaling_metrics_sample | nodiscard |

Collects current metrics from the thread pool.

- Returns

- Current metrics sample.

Definition at line 165 of file autoscaler.cpp.

References collect_metrics().

◆ get_metrics_history()

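| auto kcenon::thread::autoscaler::get_metrics_history | ( | std::size_t | count = 60 | ) | const -> std::vector< scaling_metrics_sample > | nodiscard |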

Gets historical metrics samples.

- Parameters

- count: Maximum number of samples to return.

- Returns

- Vector of recent metrics samples.

Definition at line 170 of file autoscaler.cpp.
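To illustrate what "at most count recent samples" can look like with a std::deque guarded by a mutex (the class holds metrics_history_ and history_mutex_), here is a minimal sketch. The sample_history class, its capacity limit, and its eviction rule are hypothetical; this page does not say how the real history is bounded.

```cpp
#include <algorithm>
#include <cstddef>
#include <deque>
#include <mutex>
#include <utility>
#include <vector>

// Hypothetical bounded history of samples, safe to use from several threads.
template <typename Sample>
class sample_history {
public:
    explicit sample_history(std::size_t capacity) : capacity_(capacity) {}

    // Appends a sample and evicts the oldest entries beyond the capacity.
    void push(Sample sample) {
        std::lock_guard<std::mutex> lock(mutex_);
        samples_.push_back(std::move(sample));
        while (samples_.size() > capacity_) {
            samples_.pop_front();
        }
    }

    // Returns up to `count` of the newest samples, oldest first.
    std::vector<Sample> recent(std::size_t count) const {
        std::lock_guard<std::mutex> lock(mutex_);
        const std::size_t n = std::min(count, samples_.size());
        return std::vector<Sample>(samples_.end() - static_cast<std::ptrdiff_t>(n),
                                   samples_.end());
    }

private:
    std::size_t capacity_;
    mutable std::mutex mutex_;  // mutable so that const readers can lock it
    std::deque<Sample> samples_;
};
```

Returning a copy rather than a reference means callers never hold the lock while they iterate, at the cost of one allocation per call.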

◆ get_policy()

| auto kcenon::thread::autoscaler::get_policy | ( | ) | const -> const autoscaling_policy & | nodiscard |

Gets the current autoscaling policy.

- Returns

- Const reference to the policy.

Definition at line 160 of file autoscaler.cpp.

References policy_.

◆ get_stats()

| auto kcenon::thread::autoscaler::get_stats | ( | ) | const -> autoscaling_stats | nodiscard |

Gets autoscaling statistics.

- Returns

- Statistics about scaling operations.

Definition at line 189 of file autoscaler.cpp.

References stats_, and stats_mutex_.

◆ is_active()

| auto kcenon::thread::autoscaler::is_active | ( | ) | const -> bool | nodiscard |

Checks if the autoscaler is currently active.

- Returns

- true if the monitor thread is running.

Definition at line 70 of file autoscaler.cpp.

References running_.

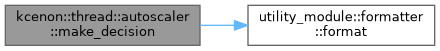

◆ make_decision()

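| auto kcenon::thread::autoscaler::make_decision | ( | const std::vector< scaling_metrics_sample > & | samples | ) | const -> scaling_decision | nodiscard private |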

Makes a scaling decision based on recent samples.

- Parameters

- samples: Recent metrics samples.

- Returns

- Scaling decision.

Definition at line 340 of file autoscaler.cpp.

References kcenon::thread::down, utility_module::formatter::format(), kcenon::thread::latency, kcenon::thread::queue_depth, kcenon::thread::up, and kcenon::thread::worker_utilization.
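The references above (up, down, queue_depth, worker_utilization, latency) hint at a threshold-based decision over recent samples. The following sketch shows one generic way such a decision could be made by averaging samples and comparing against thresholds. Every name in it (direction, sample, thresholds, decide) and the rule itself are assumptions for illustration; the actual logic in autoscaler.cpp may weigh metrics differently and also takes latency into account.

```cpp
#include <cassert>
#include <vector>

// Hypothetical decision outcome.
enum class direction { none, up, down };

// Hypothetical reduced sample with the two metrics used by this sketch.
struct sample {
    double utilization;
    double queue_depth_per_worker;
};

// Hypothetical thresholds; real policies expose their own fields.
struct thresholds {
    double up_utilization;
    double down_utilization;
    double up_queue_depth_per_worker;
};

// Averages the samples, then scales up on high load and down on low load.
inline direction decide(const std::vector<sample>& samples, const thresholds& t) {
    assert(t.down_utilization <= t.up_utilization);
    if (samples.empty()) {
        return direction::none;  // no data, no change
    }
    double utilization_sum = 0.0;
    double queue_sum = 0.0;
    for (const auto& s : samples) {
        utilization_sum += s.utilization;
        queue_sum += s.queue_depth_per_worker;
    }
    const auto n = static_cast<double>(samples.size());
    const double avg_utilization = utilization_sum / n;
    const double avg_queue = queue_sum / n;

    if (avg_utilization >= t.up_utilization || avg_queue >= t.up_queue_depth_per_worker) {
        return direction::up;
    }
    if (avg_utilization <= t.down_utilization) {
        return direction::down;
    }
    return direction::none;
}
```

Averaging over a window rather than reacting to a single sample reduces oscillation when load fluctuates around a threshold.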

◆ monitor_loop()

| auto kcenon::thread::autoscaler::monitor_loop | ( | ) | -> void | private |

Main monitoring loop running in the background thread.

Definition at line 203 of file autoscaler.cpp.

References kcenon::thread::autoscaling_policy::automatic.

◆ operator=() [1/2]

| autoscaler & kcenon::thread::autoscaler::operator= | ( | const autoscaler & | ) | delete |

◆ operator=() [2/2]

| autoscaler & kcenon::thread::autoscaler::operator= | ( | autoscaler && | ) | delete |

◆ remove_workers()

|

private |

Removes workers from the pool.

- Parameters

- count: Number of workers to remove.

- Returns

- Error if operation fails.

Definition at line 565 of file autoscaler.cpp.

◆ reset_stats()

| auto kcenon::thread::autoscaler::reset_stats | ( | ) | -> void |

Resets autoscaling statistics.

Definition at line 195 of file autoscaler.cpp.

References kcenon::thread::autoscaling_stats::min_workers.

◆ scale_down()

| auto kcenon::thread::autoscaler::scale_down | ( | ) | -> common::VoidResult |

Manually scales down by the configured increment.

- Returns

- Error if scaling fails.

Definition at line 143 of file autoscaler.cpp.

◆ scale_to()

| auto kcenon::thread::autoscaler::scale_to | ( | std::size_t | target_workers | ) | -> common::VoidResult |

Manually scales to a specific worker count.

- Parameters

- target_workers: Desired number of workers.

- Returns

- Error if scaling fails.

The target is clamped to [min_workers, max_workers] from the policy.

Definition at line 108 of file autoscaler.cpp.
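The clamping described for scale_to() can be sketched with std::clamp. The policy_bounds struct and the clamp_target function below are hypothetical stand-ins that carry only the min_workers and max_workers fields named on this page; the real function also performs the scaling and returns a common::VoidResult.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>

// Hypothetical stand-in for the two policy fields used when clamping.
struct policy_bounds {
    std::size_t min_workers;
    std::size_t max_workers;
};

// Clamps a requested worker count into [min_workers, max_workers].
inline std::size_t clamp_target(std::size_t target_workers, const policy_bounds& bounds) {
    // std::clamp has undefined behaviour when the lower bound exceeds the upper bound.
    assert(bounds.min_workers <= bounds.max_workers);
    return std::clamp(target_workers, bounds.min_workers, bounds.max_workers);
}
```

With bounds of 2 and 8, a request for 0 workers resolves to 2, a request for 100 resolves to 8, and a request for 5 is left unchanged.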

◆ scale_up()

| auto kcenon::thread::autoscaler::scale_up | ( | ) | -> common::VoidResult |

Manually scales up by the configured increment.

- Returns

- Error if scaling fails.

Definition at line 127 of file autoscaler.cpp.

◆ set_policy()

| auto kcenon::thread::autoscaler::set_policy | ( | autoscaling_policy | policy | ) | -> void |

Updates the autoscaling policy.

- Parameters

- policy: New policy configuration.

Definition at line 154 of file autoscaler.cpp.

◆ start()

| auto kcenon::thread::autoscaler::start | ( | ) | -> void |

Starts the autoscaling monitor thread.

The monitor thread periodically collects metrics and makes scaling decisions based on the configured policy.

Definition at line 32 of file autoscaler.cpp.

◆ stop()

| auto kcenon::thread::autoscaler::stop | ( | ) | -> void |

Stops the autoscaling monitor thread.

Waits for the monitor thread to complete before returning.

Definition at line 47 of file autoscaler.cpp.

Referenced by ~autoscaler().
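The start()/stop() contract described above (a background thread that wakes periodically, and a stop() that blocks until that thread has finished) is commonly built from a flag, a mutex, and a condition variable, which matches the running_, mutex_, cv_, and monitor_thread_ members of this class. The sketch below shows that general pattern only. The periodic_monitor class, its tick callback, its interval parameter, and the extra lifecycle_mutex_ are hypothetical and are not taken from autoscaler.cpp.

```cpp
#include <atomic>
#include <chrono>
#include <condition_variable>
#include <functional>
#include <mutex>
#include <thread>
#include <utility>

// Hypothetical periodic background worker with a joinable stop().
class periodic_monitor {
public:
    periodic_monitor(std::chrono::milliseconds interval, std::function<void()> tick)
        : interval_(interval), tick_(std::move(tick)) {}

    ~periodic_monitor() { stop(); }

    periodic_monitor(const periodic_monitor&) = delete;
    periodic_monitor& operator=(const periodic_monitor&) = delete;

    // Starts the monitor thread; calling it while already running is a no-op.
    void start() {
        std::lock_guard<std::mutex> lifecycle(lifecycle_mutex_);
        if (monitor_thread_.joinable()) return;
        running_ = true;
        monitor_thread_ = std::thread([this] { monitor_loop(); });
    }

    // Signals the monitor thread and waits for it to exit before returning.
    void stop() {
        std::lock_guard<std::mutex> lifecycle(lifecycle_mutex_);
        if (!monitor_thread_.joinable()) return;
        {
            // Changing the flag under mutex_ prevents a lost wake-up in wait_for.
            std::lock_guard<std::mutex> lock(mutex_);
            running_ = false;
        }
        cv_.notify_all();
        monitor_thread_.join();
    }

    bool is_active() const { return running_.load(); }

private:
    void monitor_loop() {
        std::unique_lock<std::mutex> lock(mutex_);
        while (running_.load()) {
            lock.unlock();
            tick_();  // do the periodic work without holding the lock
            lock.lock();
            // Sleep for one interval, or wake early when stop() clears the flag.
            cv_.wait_for(lock, interval_, [this] { return !running_.load(); });
        }
    }

    std::chrono::milliseconds interval_;
    std::function<void()> tick_;
    std::atomic<bool> running_{false};
    std::mutex lifecycle_mutex_;  // serialises start() and stop()
    std::mutex mutex_;            // pairs with cv_
    std::condition_variable cv_;
    std::thread monitor_thread_;
};
```

Waiting on the condition variable instead of sleeping lets stop() return promptly even when the interval is long. In this sketch the tick callback must not call stop() itself, because the thread would then try to join itself.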

Member Data Documentation

◆ cv_

| std::condition_variable kcenon::thread::autoscaler::cv_ | private |

Definition at line 278 of file autoscaler.h.

◆ history_mutex_

| std::mutex kcenon::thread::autoscaler::history_mutex_ | mutable private |

Definition at line 281 of file autoscaler.h.

◆ last_jobs_completed_

| std::uint64_t kcenon::thread::autoscaler::last_jobs_completed_ {0} | private |

◆ last_jobs_submitted_

| std::uint64_t kcenon::thread::autoscaler::last_jobs_submitted_ {0} | private |

◆ last_sample_time_

| std::chrono::steady_clock::time_point kcenon::thread::autoscaler::last_sample_time_ | private |

Definition at line 292 of file autoscaler.h.

Referenced by collect_metrics().

◆ last_scale_down_time_

| std::chrono::steady_clock::time_point kcenon::thread::autoscaler::last_scale_down_time_ | private |

Definition at line 284 of file autoscaler.h.

Referenced by can_scale_down().

◆ last_scale_up_time_

| std::chrono::steady_clock::time_point kcenon::thread::autoscaler::last_scale_up_time_ | private |

Definition at line 283 of file autoscaler.h.

Referenced by can_scale_up().

◆ metrics_history_

| std::deque< scaling_metrics_sample > kcenon::thread::autoscaler::metrics_history_ | private |

Definition at line 280 of file autoscaler.h.

◆ monitor_thread_

| std::unique_ptr< std::thread > kcenon::thread::autoscaler::monitor_thread_ | private |

Definition at line 275 of file autoscaler.h.

◆ mutex_

| std::mutex kcenon::thread::autoscaler::mutex_ | mutable private |

Definition at line 277 of file autoscaler.h.

◆ policy_

| autoscaling_policy kcenon::thread::autoscaler::policy_ | private |

Definition at line 272 of file autoscaler.h.

Referenced by can_scale_down(), can_scale_up(), and get_policy().

◆ pool_

| thread_pool & kcenon::thread::autoscaler::pool_ | private |

Definition at line 271 of file autoscaler.h.

Referenced by autoscaler(), can_scale_down(), can_scale_up(), and collect_metrics().

◆ running_

| std::atomic< bool > kcenon::thread::autoscaler::running_ {false} | private |

◆ stats_

| autoscaling_stats kcenon::thread::autoscaler::stats_ | private |

Definition at line 286 of file autoscaler.h.

Referenced by autoscaler(), and get_stats().

◆ stats_mutex_

| std::mutex kcenon::thread::autoscaler::stats_mutex_ | mutable private |

Definition at line 287 of file autoscaler.h.

Referenced by autoscaler(), and get_stats().

The documentation for this class was generated from the following files:

- include/kcenon/thread/scaling/autoscaler.h

- src/scaling/autoscaler.cpp