Public Member Functions

| Return type | Member |
| --- | --- |
| | impl ()=default |
| | ~impl () |
| auto | initialize (const ai_service_config &config) -> Result< std::monostate > |
| void | shutdown () |
| bool | is_initialized () const noexcept |
| auto | request_inference (const inference_request &request) -> Result< std::string > |
| auto | check_status (const std::string &job_id) -> Result< inference_status > |
| auto | cancel (const std::string &job_id) -> Result< std::monostate > |
| auto | wait_for_completion (const std::string &job_id, std::chrono::milliseconds timeout, status_callback callback) -> Result< inference_status > |
| auto | list_active_jobs () -> Result< std::vector< inference_status > > |
| auto | list_models () -> Result< std::vector< model_info > > |
| auto | get_model_info (const std::string &model_id) -> Result< model_info > |
| bool | check_health () |
| auto | get_latency () -> std::optional< std::chrono::milliseconds > |
| const ai_service_config & | get_config () const |
| auto | update_credentials (authentication_type auth_type, const std::string &credentials) -> Result< std::monostate > |

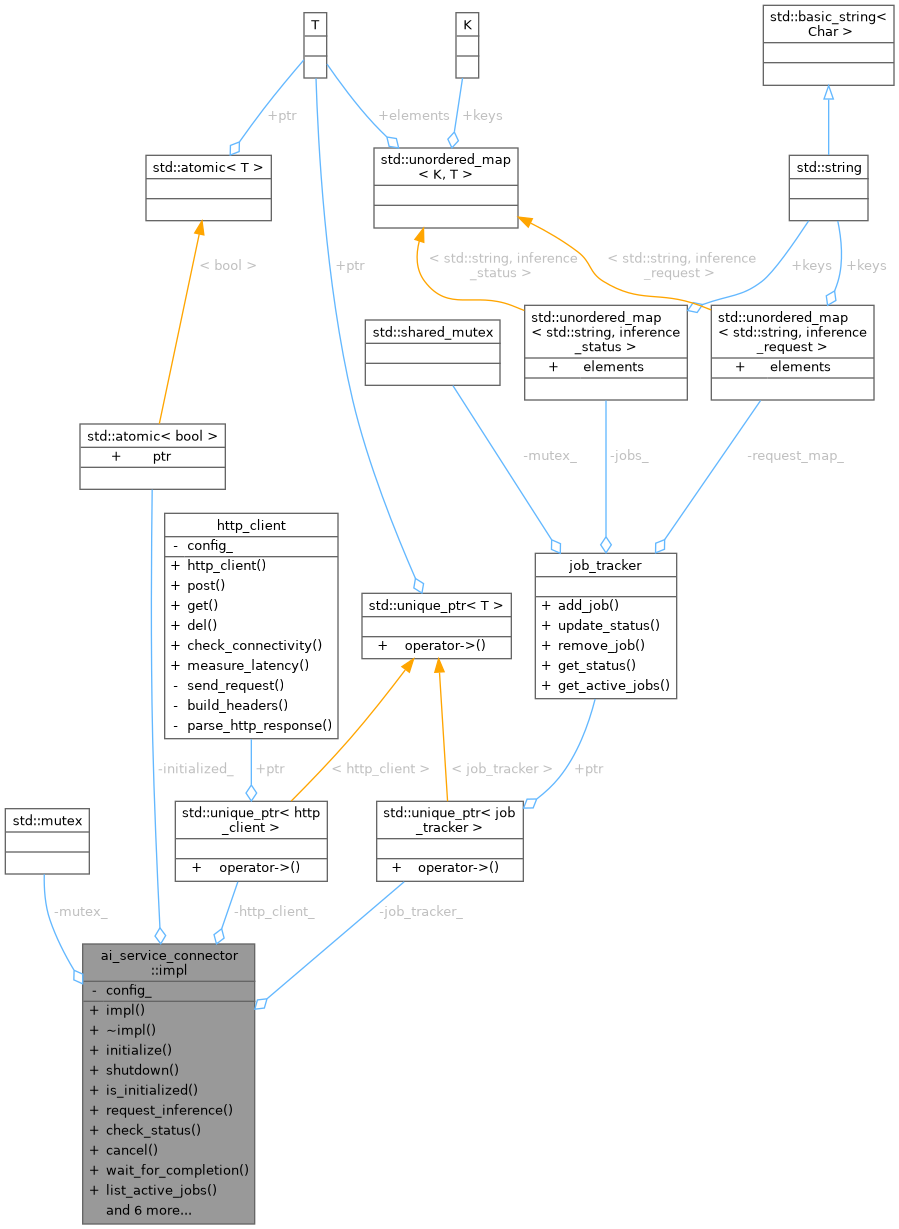

Private Attributes

| Type | Name |
| --- | --- |
| std::mutex | mutex_ |
| std::atomic< bool > | initialized_ {false} |
| ai_service_config | config_ |
| std::unique_ptr< http_client > | http_client_ |
| std::unique_ptr< job_tracker > | job_tracker_ |

Detailed Description

Definition at line 899 of file ai_service_connector.cpp.
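The class name `impl` and its definition inside a `.cpp` file suggest the pimpl idiom, although this page does not state it. The following is a minimal sketch of that idiom under that assumption. `connector_sketch`, its members, and `pimpl_demo` are hypothetical names, not part of the documented code.

```cpp
#include <cassert>
#include <memory>

// Hypothetical public class; in a real project this part would sit in a header.
class connector_sketch {
public:
    connector_sketch();
    ~connector_sketch();  // defined below, where impl is a complete type
    void initialize();
    bool is_initialized() const noexcept;

private:
    class impl;                    // forward declaration only
    std::unique_ptr<impl> pimpl_;  // owns the hidden state
};

// Everything below would sit in the .cpp file, hidden from users of the header.
class connector_sketch::impl {
public:
    bool initialized = false;
};

connector_sketch::connector_sketch() : pimpl_(std::make_unique<impl>()) {}
connector_sketch::~connector_sketch() = default;
void connector_sketch::initialize() { pimpl_->initialized = true; }
bool connector_sketch::is_initialized() const noexcept { return pimpl_->initialized; }

// Usage example: the public class forwards every call to the hidden impl.
void pimpl_demo() {
    connector_sketch c;
    assert(!c.is_initialized());
    c.initialize();
    assert(c.is_initialized());
}
```

Defining the destructor in the `.cpp` file matters here, because `std::unique_ptr<impl>` needs the complete type of `impl` at the point where it is destroyed.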

Constructor & Destructor Documentation

◆ impl()

impl () [default]

◆ ~impl()

~impl () [inline]

Definition at line 903 of file ai_service_connector.cpp.

Member Function Documentation

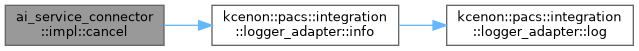

◆ cancel()

auto cancel (const std::string &job_id) -> Result< std::monostate > [inline]

Definition at line 1055 of file ai_service_connector.cpp.

References kcenon::pacs::integration::logger_adapter::info().

◆ check_health()

bool check_health () [inline, nodiscard]

Definition at line 1193 of file ai_service_connector.cpp.

◆ check_status()

auto check_status (const std::string &job_id) -> Result< inference_status > [inline]

Definition at line 1014 of file ai_service_connector.cpp.

◆ get_config()

const ai_service_config & get_config () const [inline, nodiscard]

Definition at line 1207 of file ai_service_connector.cpp.

◆ get_latency()

auto get_latency () -> std::optional< std::chrono::milliseconds > [inline, nodiscard]

Definition at line 1200 of file ai_service_connector.cpp.

◆ get_model_info()

auto get_model_info (const std::string &model_id) -> Result< model_info > [inline]

Definition at line 1168 of file ai_service_connector.cpp.

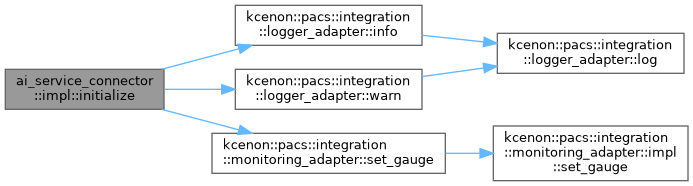

◆ initialize()

auto initialize (const ai_service_config &config) -> Result< std::monostate > [inline]

Definition at line 907 of file ai_service_connector.cpp.

References kcenon::pacs::integration::logger_adapter::info(), kcenon::pacs::integration::monitoring_adapter::set_gauge(), and kcenon::pacs::integration::logger_adapter::warn().

◆ is_initialized()

bool is_initialized () const noexcept [inline, nodiscard, noexcept]

Definition at line 959 of file ai_service_connector.cpp.
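The private attributes `mutex_` and `initialized_` (a `std::atomic< bool >`) point to a common lifecycle pattern: state changes are serialized by the mutex, while `is_initialized()` can read the flag without locking. The sketch below illustrates that pattern only. `lifecycle_sketch` and `lifecycle_demo` are hypothetical names, the `bool` return value stands in for `Result< std::monostate >`, and the real method bodies may differ.

```cpp
#include <atomic>
#include <cassert>
#include <mutex>

// Hypothetical stand-in for the documented class; not the real implementation.
class lifecycle_sketch {
public:
    // Returns false when called on an already initialized object (assumed behavior).
    bool initialize() {
        std::lock_guard<std::mutex> lock(mutex_);
        if (initialized_.load()) {
            return false;
        }
        // Resource setup (HTTP client, job tracker) would happen here.
        initialized_.store(true);
        return true;
    }

    // Safe to call more than once; later calls simply leave the flag false.
    void shutdown() {
        std::lock_guard<std::mutex> lock(mutex_);
        initialized_.store(false);
    }

    // Lock-free read of the flag, which is why it can be noexcept.
    bool is_initialized() const noexcept { return initialized_.load(); }

private:
    mutable std::mutex mutex_;
    std::atomic<bool> initialized_{false};
};

// Usage example covering one full initialize/shutdown cycle.
void lifecycle_demo() {
    lifecycle_sketch s;
    assert(!s.is_initialized());
    assert(s.initialize());
    assert(s.is_initialized());
    assert(!s.initialize());  // second call is rejected in this sketch
    s.shutdown();
    assert(!s.is_initialized());
}
```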

◆ list_active_jobs()

auto list_active_jobs () -> Result< std::vector< inference_status > > [inline]

Definition at line 1128 of file ai_service_connector.cpp.

◆ list_models()

auto list_models () -> Result< std::vector< model_info > > [inline]

Definition at line 1136 of file ai_service_connector.cpp.

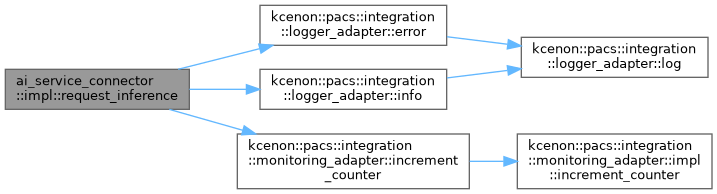

◆ request_inference()

auto request_inference (const inference_request &request) -> Result< std::string > [inline]

Definition at line 963 of file ai_service_connector.cpp.

References kcenon::pacs::integration::logger_adapter::error(), kcenon::pacs::integration::monitoring_adapter::increment_counter(), kcenon::pacs::integration::logger_adapter::info(), kcenon::pacs::ai::inference_request::model_id, and kcenon::pacs::ai::inference_request::study_instance_uid.

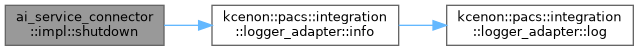

◆ shutdown()

void shutdown () [inline]

Definition at line 945 of file ai_service_connector.cpp.

References kcenon::pacs::integration::logger_adapter::info().

◆ update_credentials()

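auto update_credentials (authentication_type auth_type, const std::string &credentials) -> Result< std::monostate > [inline]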

Definition at line 1211 of file ai_service_connector.cpp.

References kcenon::pacs::integration::logger_adapter::info().

◆ wait_for_completion()

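auto wait_for_completion (const std::string &job_id, std::chrono::milliseconds timeout, status_callback callback) -> Result< inference_status > [inline]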

Definition at line 1085 of file ai_service_connector.cpp.
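The signature of `wait_for_completion()` (a job id, a timeout, and a status callback) is consistent with a poll-until-done loop, though this page does not show the body. The sketch below shows one way such a loop can be written. All names in it (`job_state`, `status_sketch`, `poll_fn`, `wait_for_completion_sketch`, `wait_demo`) are hypothetical, the polling interval is an added parameter, and `std::optional` stands in for the `Result< inference_status >` return type.

```cpp
#include <cassert>
#include <chrono>
#include <functional>
#include <optional>
#include <string>
#include <thread>

// Hypothetical stand-ins; the real inference_status and status_callback are not shown here.
enum class job_state { running, completed };

struct status_sketch {
    std::string job_id;
    job_state state;
};

using status_callback_sketch = std::function<void(const status_sketch&)>;
using poll_fn = std::function<status_sketch(const std::string&)>;

// Polls until the job completes or the timeout elapses.
// Returns the final status, or std::nullopt when the deadline passes first.
std::optional<status_sketch> wait_for_completion_sketch(
    const std::string& job_id,
    std::chrono::milliseconds timeout,
    const poll_fn& poll,
    const status_callback_sketch& callback,
    std::chrono::milliseconds interval = std::chrono::milliseconds(10)) {
    const auto deadline = std::chrono::steady_clock::now() + timeout;
    while (true) {
        status_sketch current = poll(job_id);
        if (callback) {
            callback(current);  // report every observed status to the caller
        }
        if (current.state == job_state::completed) {
            return current;
        }
        if (std::chrono::steady_clock::now() >= deadline) {
            return std::nullopt;
        }
        std::this_thread::sleep_for(interval);
    }
}

// Usage example: a fake poller that reports completion on the third poll.
void wait_demo() {
    int polls = 0;
    int notifications = 0;
    auto poll = [&](const std::string& id) {
        ++polls;
        return status_sketch{id, polls >= 3 ? job_state::completed : job_state::running};
    };
    auto result = wait_for_completion_sketch(
        "job-1", std::chrono::milliseconds(1000), poll,
        [&](const status_sketch&) { ++notifications; },
        std::chrono::milliseconds(1));
    assert(result.has_value());
    assert(result->state == job_state::completed);
    assert(notifications == 3);
}
```

Using `std::chrono::steady_clock` for the deadline keeps the timeout unaffected by wall-clock adjustments.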

Member Data Documentation

◆ config_

ai_service_config config_ [private]

Definition at line 1252 of file ai_service_connector.cpp.

◆ http_client_

std::unique_ptr< http_client > http_client_ [private]

Definition at line 1253 of file ai_service_connector.cpp.

◆ initialized_

std::atomic< bool > initialized_ {false} [private]

Definition at line 1251 of file ai_service_connector.cpp.

◆ job_tracker_

std::unique_ptr< job_tracker > job_tracker_ [private]

Definition at line 1254 of file ai_service_connector.cpp.

◆ mutex_

std::mutex mutex_ [mutable, private]

Definition at line 1250 of file ai_service_connector.cpp.

The documentation for this class was generated from the following file:

- src/ai/ai_service_connector.cpp