Stress testing for the monitoring system.

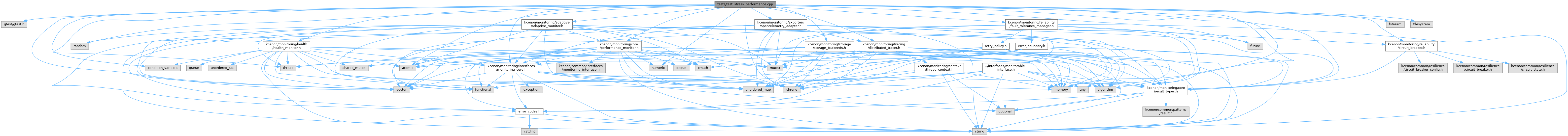

#include <gtest/gtest.h>

#include <thread>

#include <chrono>

#include <vector>

#include <atomic>

#include <random>

#include <memory>

#include <future>

#include <condition_variable>

#include <algorithm>

#include <numeric>

#include <fstream>

#include <filesystem>

#include <kcenon/monitoring/core/result_types.h>

#include <kcenon/monitoring/interfaces/monitoring_core.h>

#include <kcenon/monitoring/tracing/distributed_tracer.h>

#include <kcenon/monitoring/core/performance_monitor.h>

#include <kcenon/monitoring/adaptive/adaptive_monitor.h>

#include <kcenon/monitoring/health/health_monitor.h>

#include <kcenon/monitoring/reliability/circuit_breaker.h>

#include <kcenon/monitoring/reliability/fault_tolerance_manager.h>

#include <kcenon/monitoring/exporters/opentelemetry_adapter.h>

#include <kcenon/monitoring/storage/storage_backends.h>


Classes

| Kind | Name |
| --- | --- |
| class | StressPerformanceTest |

Macros

| Kind | Definition |
| --- | --- |
| #define | RUNNING_WITH_ASAN 0 |

Functions

| Function |
| --- |
| TEST_F (StressPerformanceTest, HighLoadStressTest) |
| TEST_F (StressPerformanceTest, MemoryLeakDetectionTest) |
| TEST_F (StressPerformanceTest, ConcurrencyStressTest) |
| TEST_F (StressPerformanceTest, ResourceExhaustionTest) |
| TEST_F (StressPerformanceTest, SustainedLoadTest) |
| TEST_F (StressPerformanceTest, BurstLoadTest) |
| TEST_F (StressPerformanceTest, DeadlockDetectionTest) |
| TEST_F (StressPerformanceTest, PerformanceDegradationTest) |

Variables

| Type | Definition |
| --- | --- |
| constexpr double | SANITIZER_OVERHEAD_FACTOR = 0 ? 2.0 : 1.0 |
| constexpr bool | IS_RELEASE_BUILD = false |
| constexpr double | DEBUG_BUILD_OVERHEAD_FACTOR = IS_RELEASE_BUILD ? 1.0 : 2.0 |

Detailed Description

Stress testing for the monitoring system.

Tests system behavior under extreme conditions:

- High load scenarios

- Memory leak detection

- Concurrency stress tests

- Resource exhaustion handling

- Performance degradation analysis

Definition in file test_stress_performance.cpp.

Macro Definition Documentation

◆ RUNNING_WITH_ASAN

#define RUNNING_WITH_ASAN 0

Definition at line 59 of file test_stress_performance.cpp.

Function Documentation

◆ TEST_F() [1/8]

TEST_F (StressPerformanceTest, BurstLoadTest)

Test 6: Burst Load Test. Tests system response to sudden load spikes.

Definition at line 458 of file test_stress_performance.cpp.

References SANITIZER_OVERHEAD_FACTOR.

◆ TEST_F() [2/8]

TEST_F (StressPerformanceTest, ConcurrencyStressTest)

Test 3: Concurrency Stress Test. Tests thread safety and race conditions.

Definition at line 300 of file test_stress_performance.cpp.

References kcenon::monitoring::metrics_snapshot::add_metric(), kcenon::monitoring::counter, kcenon::monitoring::storage_config::max_capacity, kcenon::monitoring::storage, and kcenon::monitoring::storage_config::type.
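The kind of thread-safety check this test describes can be illustrated with a minimal standard-library sketch. It does not use the monitoring API: `hammer_counter` and its parameters are hypothetical names, and a plain `std::atomic` stands in for whatever shared state the real test exercises.

```cpp
#include <atomic>
#include <cassert>
#include <cstddef>
#include <thread>
#include <vector>

// Hypothetical helper (not part of kcenon::monitoring): starts `threads`
// workers that each increment a shared counter `ops_per_thread` times,
// then returns the final counter value.
std::size_t hammer_counter(std::size_t threads, std::size_t ops_per_thread) {
    std::atomic<std::size_t> total{0};
    std::atomic<bool> go{false};

    std::vector<std::thread> pool;
    pool.reserve(threads);
    for (std::size_t t = 0; t < threads; ++t) {
        pool.emplace_back([&] {
            // Spin until released so that all workers contend at once.
            while (!go.load(std::memory_order_acquire)) {
                std::this_thread::yield();
            }
            for (std::size_t i = 0; i < ops_per_thread; ++i) {
                total.fetch_add(1, std::memory_order_relaxed);
            }
        });
    }

    go.store(true, std::memory_order_release);
    for (auto& worker : pool) {
        worker.join();
    }
    return total.load();
}
```

If any increment were lost to a race, the returned total would fall short of `threads * ops_per_thread`, which is the property a test body would assert.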

◆ TEST_F() [3/8]

TEST_F (StressPerformanceTest, DeadlockDetectionTest)

Test 7: Deadlock Detection Test. Tests for potential deadlocks in concurrent operations.

Definition at line 521 of file test_stress_performance.cpp.
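One common way to probe for deadlocks is to run the concurrent workload in the background and check that it finishes within a time limit. The sketch below assumes that approach; the source does not say how this test detects deadlocks, and `completes_within` is a hypothetical helper. Workers take two mutexes in opposite orders, and `std::lock` is what keeps that safe.

```cpp
#include <cassert>
#include <chrono>
#include <future>
#include <mutex>
#include <thread>
#include <vector>

// Hypothetical helper: returns true if `workers` threads, each locking two
// mutexes `iterations` times, all finish before `timeout` elapses.
bool completes_within(std::chrono::milliseconds timeout, int workers, int iterations) {
    auto task = std::async(std::launch::async, [=] {
        std::mutex a, b;
        long counter = 0;
        std::vector<std::thread> pool;
        for (int w = 0; w < workers; ++w) {
            pool.emplace_back([&, w] {
                for (int i = 0; i < iterations; ++i) {
                    std::unique_lock<std::mutex> la(a, std::defer_lock);
                    std::unique_lock<std::mutex> lb(b, std::defer_lock);
                    // Even and odd workers name the mutexes in opposite
                    // order; std::lock acquires both without deadlocking.
                    if (w % 2 == 0) {
                        std::lock(la, lb);
                    } else {
                        std::lock(lb, la);
                    }
                    ++counter;  // protected by both mutexes
                }
            });
        }
        for (auto& t : pool) {
            t.join();
        }
        return counter;
    });
    // Caveat: the future returned by std::async joins in its destructor, so
    // a genuine deadlock would still block when this function returns.
    return task.wait_for(timeout) == std::future_status::ready;
}
```

Because of that caveat, a timeout here is only a signal; a real test would typically report the failure and abort the process rather than return normally.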

◆ TEST_F() [4/8]

TEST_F (StressPerformanceTest, HighLoadStressTest)

Test 1: High Load Stress Test. Tests system behavior under sustained high load.

Definition at line 120 of file test_stress_performance.cpp.

References DEBUG_BUILD_OVERHEAD_FACTOR.

◆ TEST_F() [5/8]

TEST_F (StressPerformanceTest, MemoryLeakDetectionTest)

Test 2: Memory Leak Detection Test. Tests for memory leaks under repeated allocation/deallocation.

Definition at line 237 of file test_stress_performance.cpp.

◆ TEST_F() [6/8]

TEST_F (StressPerformanceTest, PerformanceDegradationTest)

Test 8: Performance Degradation Test. Tests how performance degrades under increasing load.

Definition at line 591 of file test_stress_performance.cpp.
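Measuring degradation usually means timing the same operation at several load levels and comparing the per-operation cost. The following is a rough sketch of that idea only; `LoadSample` and `measure_degradation` are hypothetical names, and the actual test may collect different metrics.

```cpp
#include <cassert>
#include <chrono>
#include <cstddef>
#include <vector>

// Hypothetical result record: one entry per load level.
struct LoadSample {
    std::size_t operations;  // number of operations executed at this level
    double ns_per_op;        // mean wall-clock cost per operation
};

// Runs `op(i)` `load` times for each entry in `loads` and records the
// average time per call, measured with a monotonic clock.
template <typename Op>
std::vector<LoadSample> measure_degradation(const std::vector<std::size_t>& loads, Op op) {
    std::vector<LoadSample> samples;
    samples.reserve(loads.size());
    for (std::size_t load : loads) {
        const auto start = std::chrono::steady_clock::now();
        for (std::size_t i = 0; i < load; ++i) {
            op(i);
        }
        const auto elapsed = std::chrono::steady_clock::now() - start;
        const double ns = std::chrono::duration<double, std::nano>(elapsed).count();
        samples.push_back({load, load == 0 ? 0.0 : ns / static_cast<double>(load)});
    }
    return samples;
}
```

A test could then compare `ns_per_op` at the highest load against the lowest and fail if the ratio exceeds a chosen bound. Any such bound is timing-dependent and should be generous.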

◆ TEST_F() [7/8]

| TEST_F | ( | StressPerformanceTest | , |

| ResourceExhaustionTest | ) |

Test 4: Resource Exhaustion Test. Tests behavior when resources are exhausted.

Definition at line 366 of file test_stress_performance.cpp.

References kcenon::monitoring::metrics_snapshot::add_metric(), kcenon::monitoring::storage_config::max_capacity, kcenon::monitoring::storage, and kcenon::monitoring::storage_config::type.
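The reference to `storage_config::max_capacity` suggests the test fills a capacity-limited backend past its limit. As an illustration of what graceful exhaustion handling could look like, here is a sketch with a hypothetical `BoundedStore` class. It is a stand-in, not the library's storage backend, and rejecting new items when full is an assumed policy.

```cpp
#include <cassert>
#include <cstddef>
#include <deque>
#include <string>
#include <utility>

// Hypothetical stand-in for a capacity-limited storage backend.
class BoundedStore {
public:
    explicit BoundedStore(std::size_t max_capacity) : max_capacity_(max_capacity) {}

    // Assumed policy: reject new items once full instead of growing.
    bool store(std::string snapshot) {
        if (items_.size() >= max_capacity_) {
            ++rejected_;
            return false;
        }
        items_.push_back(std::move(snapshot));
        return true;
    }

    std::size_t size() const { return items_.size(); }
    std::size_t rejected() const { return rejected_; }

private:
    std::size_t max_capacity_;
    std::size_t rejected_ = 0;
    std::deque<std::string> items_;
};

// Attempts `attempts` stores and returns how many were accepted.
std::size_t fill_past_capacity(BoundedStore& store, std::size_t attempts) {
    std::size_t accepted = 0;
    for (std::size_t i = 0; i < attempts; ++i) {
        if (store.store("snapshot-" + std::to_string(i))) {
            ++accepted;
        }
    }
    return accepted;
}
```

The property worth asserting is that the store never exceeds its limit and that every overflow attempt is reported rather than crashing or silently growing.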

◆ TEST_F() [8/8]

TEST_F (StressPerformanceTest, SustainedLoadTest)

Test 5: Sustained Load Test. Tests system stability under sustained moderate load.

Definition at line 398 of file test_stress_performance.cpp.

Variable Documentation

◆ DEBUG_BUILD_OVERHEAD_FACTOR

constexpr double DEBUG_BUILD_OVERHEAD_FACTOR = IS_RELEASE_BUILD ? 1.0 : 2.0

Definition at line 74 of file test_stress_performance.cpp.

Referenced by TEST_F().

◆ IS_RELEASE_BUILD

constexpr bool IS_RELEASE_BUILD = false

Definition at line 70 of file test_stress_performance.cpp.

◆ SANITIZER_OVERHEAD_FACTOR

constexpr double SANITIZER_OVERHEAD_FACTOR = 0 ? 2.0 : 1.0

Definition at line 63 of file test_stress_performance.cpp.

Referenced by TEST_F().
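The two overhead factors appear to relax timing expectations when the tests run under a sanitizer or in a debug build. The sketch below shows one way such constants could be derived and applied. The detection logic (`__SANITIZE_ADDRESS__`, `__has_feature`, `NDEBUG`) and the `scaled_budget` helper are assumptions for illustration; the page shows only the resulting values, not how the file computes them.

```cpp
#include <cassert>
#include <chrono>

// Assumption: detect AddressSanitizer via compiler-provided macros.
#if defined(__SANITIZE_ADDRESS__)
#  define RUNNING_WITH_ASAN 1
#elif defined(__has_feature)
#  if __has_feature(address_sanitizer)
#    define RUNNING_WITH_ASAN 1
#  endif
#endif
#ifndef RUNNING_WITH_ASAN
#  define RUNNING_WITH_ASAN 0
#endif

constexpr double SANITIZER_OVERHEAD_FACTOR = RUNNING_WITH_ASAN ? 2.0 : 1.0;

// Assumption: treat builds with NDEBUG defined as release builds.
#ifdef NDEBUG
constexpr bool IS_RELEASE_BUILD = true;
#else
constexpr bool IS_RELEASE_BUILD = false;
#endif

constexpr double DEBUG_BUILD_OVERHEAD_FACTOR = IS_RELEASE_BUILD ? 1.0 : 2.0;

// Hypothetical helper: widens a time budget by both overhead factors.
inline std::chrono::milliseconds scaled_budget(std::chrono::milliseconds base) {
    assert(base.count() >= 0);
    const double scaled = static_cast<double>(base.count()) *
                          SANITIZER_OVERHEAD_FACTOR * DEBUG_BUILD_OVERHEAD_FACTOR;
    return std::chrono::milliseconds(static_cast<long long>(scaled));
}
```

With this arrangement a test states its budget once, for example `scaled_budget(std::chrono::milliseconds(100))`, and the same assertion holds across release, debug, and sanitizer runs.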