Lock-free node pool for high-performance memory allocation.

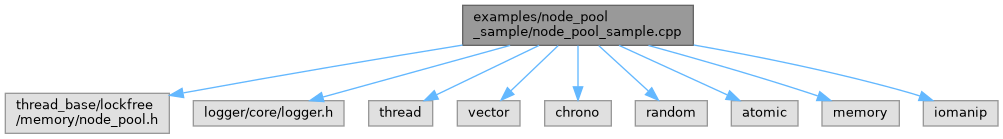

    #include "thread_base/lockfree/memory/node_pool.h"
    #include "logger/core/logger.h"
    #include <thread>
    #include <vector>
    #include <chrono>
    #include <random>
    #include <atomic>
    #include <memory>
    #include <iomanip>

Include dependency graph for node_pool_sample.cpp:

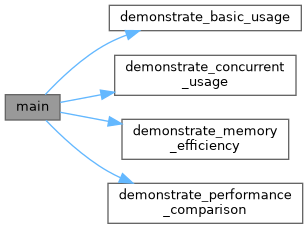

Classes

| Kind | Name |
| --- | --- |
| struct | TestData |

Functions

| Return type | Name |
| --- | --- |
| void | demonstrate_basic_usage() |
| void | demonstrate_concurrent_usage() |
| void | demonstrate_performance_comparison() |
| void | demonstrate_memory_efficiency() |
| int | main() |

Detailed Description

Lock-free node pool for high-performance memory allocation.

Definition in file node_pool_sample.cpp.
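
The definition of TestData is not reproduced on this page. Judging only from how the demos below use it (`node->value = i;` and `node->data = i * 3.14;`), a minimal stand-in might look like the following sketch. The member types, the default values, and the helper names `fill` and `matches` are assumptions made for illustration, not the sample's actual code.

```cpp
#include <cassert>

// Hypothetical stand-in for the sample's TestData. Only the member names
// `value` and `data` come from the demos; their types are assumed.
struct TestData {
    int value = 0;      // the demos store loop counters and thread/op ids here
    double data = 0.0;  // the demos store values such as i * 3.14 here
};

// Illustrative helper: true when `node` holds what the basic demo wrote for index i.
inline bool matches(const TestData& node, int i) {
    const double diff = node.data - i * 3.14;
    return node.value == i && diff < 0.001 && diff > -0.001;
}

// Illustrative helper: write the basic demo's pattern for index i, then verify it.
inline void fill(TestData& node, int i) {
    node.value = i;
    node.data = i * 3.14;
    assert(matches(node, i));
}
```

The tolerance check mirrors the integrity test in demonstrate_basic_usage(), which compares the stored double against `i * 3.14` with a 0.001 margin.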

Function Documentation

◆ demonstrate_basic_usage()

void demonstrate_basic_usage()

Definition at line 38 of file node_pool_sample.cpp.

    void demonstrate_basic_usage() {
        log_module::write_information("\n=== Basic Node Pool Usage Demo ===");

        // Create a node pool with 2 initial chunks, 512 nodes per chunk
        node_pool<TestData> pool(2, 512);

        // Show initial statistics
        auto stats = pool.get_statistics();
        log_module::write_information("Initial pool statistics:");
        log_module::write_information(" Total chunks: {}", stats.total_chunks);
        log_module::write_information(" Total nodes: {}", stats.total_nodes);
        log_module::write_information(" Allocated nodes: {}", stats.allocated_nodes);
        log_module::write_information(" Free list size: {}", stats.free_list_size);

        // Allocate some nodes
        std::vector<TestData*> allocated_nodes;
        const int NUM_ALLOCATIONS = 100;

        log_module::write_information("\nAllocating {} nodes...", NUM_ALLOCATIONS);
        for (int i = 0; i < NUM_ALLOCATIONS; ++i) {
            auto* node = pool.allocate();
            node->value = i;
            node->data = i * 3.14;
            allocated_nodes.push_back(node);
        }

        // Show statistics after allocation
        stats = pool.get_statistics();
        log_module::write_information("After allocation:");
        log_module::write_information(" Total chunks: {}", stats.total_chunks);
        log_module::write_information(" Total nodes: {}", stats.total_nodes);
        log_module::write_information(" Allocated nodes: {}", stats.allocated_nodes);
        log_module::write_information(" Free list size: {}", stats.free_list_size);

        // Verify data integrity
        log_module::write_information("\nVerifying data integrity...");
        bool integrity_ok = true;
        for (int i = 0; i < NUM_ALLOCATIONS; ++i) {
            if (allocated_nodes[i]->value != i ||
                std::abs(allocated_nodes[i]->data - i * 3.14) > 0.001) {
                integrity_ok = false;
                break;
            }
        }
        log_module::write_information("Data integrity: {}", integrity_ok ? "OK" : "FAILED");

        // Deallocate half the nodes
        log_module::write_information("\nDeallocating half the nodes...");
        for (int i = 0; i < NUM_ALLOCATIONS / 2; ++i) {
            pool.deallocate(allocated_nodes[i]);
            allocated_nodes[i] = nullptr;
        }

        // Show statistics after partial deallocation
        stats = pool.get_statistics();
        log_module::write_information("After partial deallocation:");
        log_module::write_information(" Total chunks: {}", stats.total_chunks);
        log_module::write_information(" Total nodes: {}", stats.total_nodes);
        log_module::write_information(" Allocated nodes: {}", stats.allocated_nodes);
        log_module::write_information(" Free list size: {}", stats.free_list_size);

        // Deallocate remaining nodes
        for (int i = NUM_ALLOCATIONS / 2; i < NUM_ALLOCATIONS; ++i) {
            pool.deallocate(allocated_nodes[i]);
        }

        // Final statistics
        stats = pool.get_statistics();
        log_module::write_information("After full deallocation:");
        log_module::write_information(" Total chunks: {}", stats.total_chunks);
        log_module::write_information(" Total nodes: {}", stats.total_nodes);
        log_module::write_information(" Allocated nodes: {}", stats.allocated_nodes);
        log_module::write_information(" Free list size: {}", stats.free_list_size);
    }

Referenced by main().
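
To make the statistics printed by this demo easier to interpret, here is a deliberately simplified, single-threaded sketch of a chunked pool with a free list. It is not the library's node_pool: the names `sketch_pool` and `pool_stats`, the grow-by-one-chunk policy, and the vector-based free list are all assumptions chosen for illustration. Only the four statistics field names and the `allocate` / `deallocate` / `get_statistics` calls are taken from the listing above.

```cpp
#include <cassert>
#include <cstddef>
#include <memory>
#include <vector>

// Hypothetical statistics record; field names follow the demo's log output.
struct pool_stats {
    std::size_t total_chunks = 0;
    std::size_t total_nodes = 0;
    std::size_t allocated_nodes = 0;
    std::size_t free_list_size = 0;
};

// Hypothetical single-threaded pool: chunks of T plus a free list of pointers.
template <typename T>
class sketch_pool {
public:
    sketch_pool(std::size_t initial_chunks, std::size_t chunk_size)
        : chunk_size_(chunk_size) {
        assert(chunk_size > 0);
        for (std::size_t i = 0; i < initial_chunks; ++i) add_chunk();
    }

    // Hand out a node from the free list, growing by one chunk if it is empty.
    T* allocate() {
        if (free_list_.empty()) add_chunk();
        T* node = free_list_.back();
        free_list_.pop_back();
        ++allocated_;
        return node;
    }

    // Return a node to the free list so a later allocate() can reuse it.
    void deallocate(T* node) {
        assert(node != nullptr && allocated_ > 0);
        free_list_.push_back(node);
        --allocated_;
    }

    pool_stats get_statistics() const {
        return {chunks_.size(), chunks_.size() * chunk_size_, allocated_,
                free_list_.size()};
    }

private:
    // Allocate one more chunk and put every node in it on the free list.
    void add_chunk() {
        chunks_.push_back(std::make_unique<T[]>(chunk_size_));
        T* base = chunks_.back().get();
        for (std::size_t i = 0; i < chunk_size_; ++i) free_list_.push_back(base + i);
    }

    std::size_t chunk_size_;
    std::size_t allocated_ = 0;
    std::vector<std::unique_ptr<T[]>> chunks_;
    std::vector<T*> free_list_;
};
```

In this sketch, `sketch_pool<int> pool(2, 512)` starts with 1024 total nodes, all of them on the free list; after 100 calls to `allocate()` it reports 100 allocated nodes and 924 free ones. Whether the real node_pool reports the same numbers depends on its implementation, which this page does not show.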

◆ demonstrate_concurrent_usage()

void demonstrate_concurrent_usage()

Examples: node_pool_sample.cpp.

Definition at line 113 of file node_pool_sample.cpp.

    void demonstrate_concurrent_usage() {
        log_module::write_information("\n=== Concurrent Usage Demo ===");

        constexpr int NUM_THREADS = 4;
        constexpr int OPERATIONS_PER_THREAD = 1000;
        constexpr int INITIAL_CHUNKS = 2;
        constexpr int CHUNK_SIZE = 256;

        node_pool<TestData> pool(INITIAL_CHUNKS, CHUNK_SIZE);

        std::atomic<int> total_allocations{0};
        std::atomic<int> total_deallocations{0};
        std::atomic<int> allocation_failures{0};

        auto start_time = std::chrono::high_resolution_clock::now();

        std::vector<std::thread> threads;
        threads.reserve(NUM_THREADS);

        // Create worker threads
        for (int thread_id = 0; thread_id < NUM_THREADS; ++thread_id) {
            threads.emplace_back([&, thread_id]() {
                std::random_device rd;
                std::mt19937 gen(rd());
                // 100 equally likely values (0..99), so "< 70" is exactly 70%
                std::uniform_int_distribution<> dis(0, 99);

                std::vector<TestData*> local_nodes;
                local_nodes.reserve(OPERATIONS_PER_THREAD / 2);

                for (int op = 0; op < OPERATIONS_PER_THREAD; ++op) {
                    if (dis(gen) < 70 || local_nodes.empty()) { // 70% chance to allocate
                        try {
                            auto* node = pool.allocate();
                            node->value = thread_id * 10000 + op;
                            node->data = thread_id + op * 0.001;
                            local_nodes.push_back(node);
                            total_allocations.fetch_add(1, std::memory_order_relaxed);
                        } catch (const std::bad_alloc&) {
                            allocation_failures.fetch_add(1, std::memory_order_relaxed);
                        }
                    } else { // Deallocate a randomly chosen node
                        if (!local_nodes.empty()) {
                            auto idx = std::uniform_int_distribution<size_t>(0, local_nodes.size() - 1)(gen);
                            pool.deallocate(local_nodes[idx]);
                            local_nodes.erase(local_nodes.begin() + idx);
                            total_deallocations.fetch_add(1, std::memory_order_relaxed);
                        }
                    }
                }

                // Clean up remaining nodes
                for (auto* node : local_nodes) {
                    if (node) {
                        pool.deallocate(node);
                        total_deallocations.fetch_add(1, std::memory_order_relaxed);
                    }
                }
            });
        }

        // Wait for all threads to complete
        for (auto& thread : threads) {
            thread.join();
        }

        auto end_time = std::chrono::high_resolution_clock::now();
        auto duration = std::chrono::duration_cast<std::chrono::milliseconds>(end_time - start_time);

        log_module::write_information("Concurrent operations completed in {} ms", duration.count());
        log_module::write_information("Total allocations: {}", total_allocations.load());
        log_module::write_information("Total deallocations: {}", total_deallocations.load());
        log_module::write_information("Allocation failures: {}", allocation_failures.load());

        // Final pool statistics
        auto stats = pool.get_statistics();
        log_module::write_information("Final pool statistics:");
        log_module::write_information(" Total chunks: {}", stats.total_chunks);
        log_module::write_information(" Total nodes: {}", stats.total_nodes);
        log_module::write_information(" Allocated nodes: {}", stats.allocated_nodes);
        log_module::write_information(" Free list size: {}", stats.free_list_size);

        // Calculate throughput; skipped when the run took less than 1 ms,
        // which would otherwise divide by zero
        int total_ops = total_allocations.load() + total_deallocations.load();
        if (duration.count() > 0) {
            double ops_per_second = static_cast<double>(total_ops) / (duration.count() / 1000.0);
            log_module::write_information("Performance: {} ops/second", static_cast<int>(ops_per_second));
        }
    }

Referenced by main().
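
The demo above relies on `allocate()` and `deallocate()` being safe to call from several threads at once. One common way to build such a free list is a Treiber stack whose head carries a version tag, so that a compare-exchange fails if the head was popped and pushed back in the meantime (the ABA problem). The sketch below shows that technique under stated assumptions: the class name `lockfree_slots`, its fixed capacity, and the index-based links are illustrative choices, and nothing here is claimed to match node_pool's internals. Unlike the demo, which catches `std::bad_alloc`, this sketch signals exhaustion by returning `nullptr`.

```cpp
#include <atomic>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

constexpr std::uint32_t kNil = 0xFFFFFFFFu;  // "no slot" marker

// Hypothetical fixed-capacity lock-free free list (Treiber stack over indices).
// head_ packs a 32-bit version tag (high half) with a 32-bit slot index (low half).
template <typename T>
class lockfree_slots {
public:
    explicit lockfree_slots(std::uint32_t capacity)
        : values_(capacity), next_(capacity), head_(pack(0, 0)) {
        assert(capacity > 0 && capacity < kNil);
        // Chain every slot: 0 -> 1 -> ... -> capacity-1 -> kNil.
        for (std::uint32_t i = 0; i < capacity; ++i)
            next_[i].store(i + 1 < capacity ? i + 1 : kNil, std::memory_order_relaxed);
    }

    // Pop a free slot; returns nullptr when every slot is in use.
    T* allocate() {
        std::uint64_t old_head = head_.load(std::memory_order_acquire);
        for (;;) {
            const auto index = static_cast<std::uint32_t>(old_head);
            if (index == kNil) return nullptr;
            const std::uint32_t next = next_[index].load(std::memory_order_relaxed);
            const auto tag = static_cast<std::uint32_t>(old_head >> 32);
            // A stale `next` is harmless: the tag has changed, so the CAS fails.
            if (head_.compare_exchange_weak(old_head, pack(tag + 1, next),
                                            std::memory_order_acquire,
                                            std::memory_order_acquire))
                return &values_[index];
        }
    }

    // Push a slot back; `node` must have come from allocate() on this object.
    void deallocate(T* node) {
        const std::ptrdiff_t offset = node - values_.data();
        assert(offset >= 0 && static_cast<std::size_t>(offset) < values_.size());
        const auto index = static_cast<std::uint32_t>(offset);
        std::uint64_t old_head = head_.load(std::memory_order_relaxed);
        for (;;) {
            next_[index].store(static_cast<std::uint32_t>(old_head),
                               std::memory_order_relaxed);
            const auto tag = static_cast<std::uint32_t>(old_head >> 32);
            if (head_.compare_exchange_weak(old_head, pack(tag + 1, index),
                                            std::memory_order_release,
                                            std::memory_order_relaxed))
                return;
        }
    }

private:
    static std::uint64_t pack(std::uint32_t tag, std::uint32_t index) {
        return (static_cast<std::uint64_t>(tag) << 32) | index;
    }

    std::vector<T> values_;                        // node storage
    std::vector<std::atomic<std::uint32_t>> next_; // per-slot link to the next free slot
    std::atomic<std::uint64_t> head_;              // tag + index of the first free slot
};
```

Storing indices rather than raw pointers keeps the head within a single 64-bit atomic, which is lock-free on most mainstream 64-bit platforms. A growable pool, as the chunk statistics above suggest node_pool is, would need an additional mechanism for adding storage that this sketch leaves out.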

◆ demonstrate_memory_efficiency()

void demonstrate_memory_efficiency()

Examples: node_pool_sample.cpp.

Definition at line 273 of file node_pool_sample.cpp.

    void demonstrate_memory_efficiency() {
        log_module::write_information("\n=== Memory Efficiency Demo ===");

        // Three pools of increasing size for comparison
        node_pool<TestData> small_pool(1, 256);
        node_pool<TestData> medium_pool(2, 512);
        node_pool<TestData> large_pool(4, 1024);

        auto show_pool_info = [](const auto& pool, const char* name) {
            auto stats = pool.get_statistics();
            // ASSUMPTION: the extracted listing omits the line that defines
            // memory_usage; this definition is a plausible reconstruction only.
            size_t memory_usage = stats.total_nodes * sizeof(TestData);
            log_module::write_information("{}:", name);
            log_module::write_information(" Total chunks: {}", stats.total_chunks);
            log_module::write_information(" Total nodes: {}", stats.total_nodes);
            log_module::write_information(" Memory usage: {} bytes ({:.1f} KB)", memory_usage, memory_usage / 1024.0);
        };

        show_pool_info(small_pool, "Small pool (1x256)");
        show_pool_info(medium_pool, "Medium pool (2x512)");
        show_pool_info(large_pool, "Large pool (4x1024)");

        // Test fragmentation
        log_module::write_information("Testing fragmentation scenario...");
        std::vector<TestData*> nodes;

        // Allocate many nodes
        for (int i = 0; i < 100; ++i) {
            nodes.push_back(medium_pool.allocate());
        }

        // Deallocate every other node (create fragmentation)
        for (size_t i = 0; i < nodes.size(); i += 2) {
            medium_pool.deallocate(nodes[i]);
            nodes[i] = nullptr;
        }

        auto stats = medium_pool.get_statistics();
        log_module::write_information("After fragmentation:");
        log_module::write_information(" Allocated nodes: {}", stats.allocated_nodes);
        log_module::write_information(" Free list size: {}", stats.free_list_size);

        // Allocate new nodes (should reuse freed nodes)
        int reused_count = 0;
        for (size_t i = 0; i < nodes.size() && reused_count < 25; i += 2) {
            if (!nodes[i]) {
                nodes[i] = medium_pool.allocate();
                reused_count++;
            }
        }

        stats = medium_pool.get_statistics();
        log_module::write_information("After reuse ({} nodes):", reused_count);
        log_module::write_information(" Allocated nodes: {}", stats.allocated_nodes);
        log_module::write_information(" Free list size: {}", stats.free_list_size);

        // Clean up
        for (auto* node : nodes) {
            if (node) {
                medium_pool.deallocate(node);
            }
        }
    }

TestData: Definition node_pool_sample.cpp:29

Referenced by main().
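
The "Memory usage" figure logged by this demo is a size estimate derived from the node count. As a rough sketch of that arithmetic, the helpers below compute the payload bytes for a pool of a given shape and the fraction of nodes in use. The names `payload_bytes`, `to_kib`, and `utilization` are hypothetical, and the estimate deliberately ignores per-node links, chunk headers, and allocator overhead, so it is a lower bound rather than a measurement.

```cpp
#include <cassert>
#include <cstddef>

// Hypothetical helper: bytes occupied by the payload of `chunks` chunks holding
// `nodes_per_chunk` objects of type T each. Bookkeeping overhead is not counted.
template <typename T>
constexpr std::size_t payload_bytes(std::size_t chunks, std::size_t nodes_per_chunk) {
    return chunks * nodes_per_chunk * sizeof(T);
}

// Convert a byte count to units of 1024 bytes, matching the demo's "KB" column.
constexpr double to_kib(std::size_t bytes) {
    return static_cast<double>(bytes) / 1024.0;
}

// Fraction of nodes currently handed out, between 0.0 and 1.0.
inline double utilization(std::size_t allocated_nodes, std::size_t total_nodes) {
    assert(total_nodes > 0 && allocated_nodes <= total_nodes);
    return static_cast<double>(allocated_nodes) / static_cast<double>(total_nodes);
}

// One-byte nodes make the arithmetic easy to check by hand: 2 x 512 x 1 byte.
static_assert(payload_bytes<char>(2, 512) == 1024, "2 chunks of 512 one-byte nodes");
```

For the fragmentation scenario above, if the medium pool holds 1024 nodes and 50 remain allocated after every other node is released, `utilization(50, 1024)` is about 0.049. The 1024 figure assumes the pool did not grow, which the listing does not confirm.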

◆ demonstrate_performance_comparison()

void demonstrate_performance_comparison()

Examples: node_pool_sample.cpp.

Definition at line 200 of file node_pool_sample.cpp.

    void demonstrate_performance_comparison() {
        log_module::write_information("\n=== Performance Comparison Demo ===");

        constexpr int NUM_OPERATIONS = 100000;
        constexpr int WARMUP_OPERATIONS = 10000;

        // Test with node pool
        log_module::write_information("Testing node pool performance...");
        node_pool<TestData> pool(4, 1024);

        // Warmup
        std::vector<TestData*> warmup_nodes;
        for (int i = 0; i < WARMUP_OPERATIONS; ++i) {
            warmup_nodes.push_back(pool.allocate());
        }
        for (auto* node : warmup_nodes) {
            pool.deallocate(node);
        }

        auto start_time = std::chrono::high_resolution_clock::now();

        std::vector<TestData*> pool_nodes;
        pool_nodes.reserve(NUM_OPERATIONS);

        // Allocate
        for (int i = 0; i < NUM_OPERATIONS; ++i) {
            pool_nodes.push_back(pool.allocate());
        }

        // Deallocate
        for (auto* node : pool_nodes) {
            pool.deallocate(node);
        }

        auto end_time = std::chrono::high_resolution_clock::now();
        auto pool_duration = std::chrono::duration_cast<std::chrono::microseconds>(end_time - start_time);

        // Test with standard allocation
        log_module::write_information("Testing standard allocation performance...");

        start_time = std::chrono::high_resolution_clock::now();

        std::vector<std::unique_ptr<TestData>> std_nodes;
        std_nodes.reserve(NUM_OPERATIONS);

        // Allocate
        for (int i = 0; i < NUM_OPERATIONS; ++i) {
            std_nodes.push_back(std::make_unique<TestData>());
        }

        // Deallocate (automatic with unique_ptr)
        std_nodes.clear();

        end_time = std::chrono::high_resolution_clock::now();
        auto std_duration = std::chrono::duration_cast<std::chrono::microseconds>(end_time - start_time);

        log_module::write_information("Results:");
        log_module::write_information(" Node pool: {} μs", pool_duration.count());
        log_module::write_information(" Standard allocation: {} μs", std_duration.count());

        // Guard the divisor: the speedup divides by the pool duration
        if (pool_duration.count() > 0) {
            double speedup = static_cast<double>(std_duration.count()) / pool_duration.count();
            log_module::write_information(" Speedup: {:.2f}x", speedup);
        }

        // Calculate operations per second (skipped if either duration rounds to 0 μs)
        if (pool_duration.count() > 0 && std_duration.count() > 0) {
            double pool_ops_per_sec = (2.0 * NUM_OPERATIONS) / (pool_duration.count() / 1000000.0);
            double std_ops_per_sec = (2.0 * NUM_OPERATIONS) / (std_duration.count() / 1000000.0);

            log_module::write_information(" Node pool ops/sec: {}", static_cast<int>(pool_ops_per_sec));
            log_module::write_information(" Standard ops/sec: {}", static_cast<int>(std_ops_per_sec));
        }
    }

Referenced by main().
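
The timing arithmetic in this demo (elapsed microseconds, speedup ratio, operations per second) can be factored into small helpers that refuse to divide by a zero duration. The following is a sketch of that idea, not part of the sample: `measure_us`, `ratio_result`, `speedup`, and `ops_per_second` are hypothetical names, and `std::chrono::steady_clock` is used here instead of the demo's `high_resolution_clock` because it is guaranteed to be monotonic.

```cpp
#include <cassert>
#include <chrono>

// Result of a ratio that may be undefined (for example, a zero-length interval).
struct ratio_result {
    bool valid;
    double value;
};

// Run `work` once and return the elapsed wall time in microseconds.
template <typename F>
long long measure_us(F&& work) {
    const auto start = std::chrono::steady_clock::now();
    work();
    const auto stop = std::chrono::steady_clock::now();
    assert(stop >= start);  // steady_clock never goes backwards
    return std::chrono::duration_cast<std::chrono::microseconds>(stop - start).count();
}

// How many times faster the candidate is than the baseline.
// Invalid when the candidate duration is zero or either input is negative.
inline ratio_result speedup(long long baseline_us, long long candidate_us) {
    if (baseline_us < 0 || candidate_us <= 0) return {false, 0.0};
    return {true, static_cast<double>(baseline_us) / static_cast<double>(candidate_us)};
}

// Throughput for `operations` completed in `elapsed_us` microseconds.
inline ratio_result ops_per_second(long long operations, long long elapsed_us) {
    if (operations < 0 || elapsed_us <= 0) return {false, 0.0};
    return {true, static_cast<double>(operations) * 1e6 / static_cast<double>(elapsed_us)};
}
```

A caller would pass the standard-allocation time as the baseline and the pool time as the candidate, then log the value only when `valid` is true. A single run like the one in the demo is sensitive to scheduling noise, so repeated measurements would give a more trustworthy comparison.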

◆ main()

int main()

Definition at line 337 of file node_pool_sample.cpp.

    int main() {
        // Initialize logger
        log_module::start();
        log_module::console_target(log_module::log_types::Information);

        log_module::write_information("Node Pool Sample");
        log_module::write_information("================");

        try {
            demonstrate_basic_usage();
            demonstrate_concurrent_usage();
            // The next two calls are missing from the extracted listing; they are
            // restored from the References list for main(), and their order is assumed.
            demonstrate_performance_comparison();
            demonstrate_memory_efficiency();

            log_module::write_information("\n=== All demos completed successfully! ===");

        } catch (const std::exception& e) {
            log_module::write_error("Error: {}", e.what());
            log_module::stop();
            return 1;
        }

        // Cleanup logger
        log_module::stop();
        return 0;
    }

References demonstrate_basic_usage(), demonstrate_concurrent_usage(), demonstrate_memory_efficiency(), and demonstrate_performance_comparison().
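
main() calls `log_module::stop()` on two separate paths, once in the catch block and once before the normal return. A scope guard is one way to state that cleanup a single time. The sketch below illustrates the pattern with stand-in callables rather than the real logger: `scope_exit` and `run_demos` are hypothetical names, and the `start` / `stop` parameters merely play the role of `log_module::start()` and `log_module::stop()`.

```cpp
#include <cassert>
#include <exception>
#include <functional>
#include <utility>

// Hypothetical RAII helper: runs `on_exit` when the guard leaves scope.
class scope_exit {
public:
    explicit scope_exit(std::function<void()> on_exit) : on_exit_(std::move(on_exit)) {
        assert(on_exit_ != nullptr);
    }
    ~scope_exit() { on_exit_(); }

    scope_exit(const scope_exit&) = delete;
    scope_exit& operator=(const scope_exit&) = delete;

private:
    std::function<void()> on_exit_;
};

// Stand-in for main(): returns 0 on success and 1 if `demos` throws.
inline int run_demos(const std::function<void()>& start,
                     const std::function<void()>& stop,
                     const std::function<void()>& demos) {
    start();
    scope_exit stop_on_exit(stop);  // stop() runs on both return paths
    try {
        demos();
    } catch (const std::exception&) {
        return 1;  // the real main() also logs e.what() before returning
    }
    return 0;
}
```

The guard's destructor runs after the return value has been computed, so any error logging inside the catch block still happens before the logger is stopped, which matches the ordering in the listing above.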
