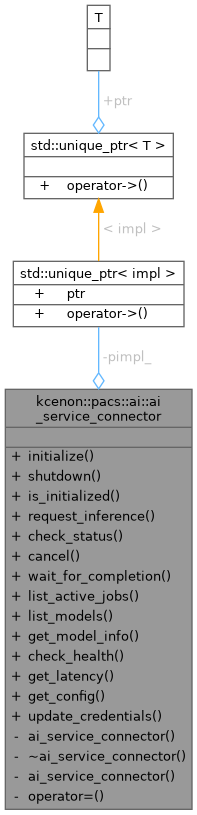

Connector for external AI inference services.

#include <ai_service_connector.h>

Public Types

| Declaration | Description |
|---|---|
| `using status_callback = std::function<void(const inference_status&)>` | Callback type for status updates. |
| `using completion_callback` | Callback type for completion notification. |

Static Public Member Functions

| Signature | Description |
|---|---|
| `static auto initialize(const ai_service_config &config) -> Result<std::monostate>` | Initialize the AI service connector. |
| `static void shutdown()` | Shut down the AI service connector. |
| `static auto is_initialized() noexcept -> bool` | Check whether the connector is initialized. |
| `static auto request_inference(const inference_request &request) -> Result<std::string>` | Request AI inference for a study. |
| `static auto check_status(const std::string &job_id) -> Result<inference_status>` | Check the status of an inference job. |
| `static auto cancel(const std::string &job_id) -> Result<std::monostate>` | Cancel an inference job. |
| `static auto wait_for_completion(const std::string &job_id, std::chrono::milliseconds timeout = std::chrono::minutes{30}, status_callback callback = nullptr) -> Result<inference_status>` | Wait for a job to complete. |
| `static auto list_active_jobs() -> Result<std::vector<inference_status>>` | List active inference jobs. |
| `static auto list_models() -> Result<std::vector<model_info>>` | List available AI models. |
| `static auto get_model_info(const std::string &model_id) -> Result<model_info>` | Get information about a specific model. |
| `static auto check_health() -> bool` | Check AI service health. |
| `static auto get_latency() -> std::optional<std::chrono::milliseconds>` | Get the current latency to the AI service. |
| `static auto get_config() -> const ai_service_config &` | Get the current configuration. |
| `static auto update_credentials(authentication_type auth_type, const std::string &credentials) -> Result<std::monostate>` | Update authentication credentials. |

Private Member Functions

| Declaration |
|---|
| `ai_service_connector() = delete` |
| `~ai_service_connector() = delete` |
| `ai_service_connector(const ai_service_connector &) = delete` |
| `ai_service_connector &operator=(const ai_service_connector &) = delete` |

Static Private Attributes

| Declaration |
|---|
| `static std::unique_ptr<impl> pimpl_` |
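The deleted special members and the single static `pimpl_` mean the class is never instantiated; all state sits behind one static implementation pointer. As a minimal sketch of how such a static facade can keep its lifecycle calls thread-safe, the code below uses hypothetical names (`demo_connector`, `demo_config`, `impl`) and returns `bool` instead of the library's `Result` type. It is an illustration of the pattern, not the library's implementation.

```cpp
#include <atomic>
#include <memory>
#include <mutex>
#include <string>

// Hypothetical stand-in for ai_service_config.
struct demo_config {
    std::string base_url;
};

// Hypothetical static facade with the same shape as ai_service_connector:
// it cannot be instantiated, and all state lives behind one static pimpl.
class demo_connector {
public:
    // Returns false if already initialized or if the config is invalid.
    static bool initialize(const demo_config& config) {
        std::lock_guard<std::mutex> lock(mutex_);
        if (pimpl_ || config.base_url.empty()) {
            return false;
        }
        pimpl_ = std::make_unique<impl>(impl{config});
        initialized_.store(true);
        return true;
    }

    // Destroys the implementation object, releasing everything it owns.
    static void shutdown() {
        std::lock_guard<std::mutex> lock(mutex_);
        initialized_.store(false);
        pimpl_.reset();
    }

    // Lock-free read so the query can be noexcept.
    static bool is_initialized() noexcept {
        return initialized_.load();
    }

private:
    demo_connector() = delete;
    ~demo_connector() = delete;
    demo_connector(const demo_connector&) = delete;
    demo_connector& operator=(const demo_connector&) = delete;

    struct impl {
        demo_config config;
    };

    static inline std::mutex mutex_;                  // serializes state changes
    static inline std::unique_ptr<impl> pimpl_;       // null until initialize()
    static inline std::atomic<bool> initialized_{false};
};
```

Reading the flag through a `std::atomic<bool>` lets `is_initialized()` stay `noexcept` without taking the mutex, while initialization and shutdown remain serialized.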

Detailed Description

Connector for external AI inference services.

This class provides a unified interface for interacting with external AI inference services, including:

- Sending DICOM studies for AI processing

- Tracking inference job status

- Cancelling running jobs

- Listing available AI models

The connector uses network_system for HTTP communication, logger_system for audit logging, and monitoring_system for metrics.

Thread Safety: All methods are thread-safe.

Definition at line 311 of file ai_service_connector.h.

Member Typedef Documentation

◆ completion_callback

Callback type for completion notification.

Definition at line 321 of file ai_service_connector.h.

◆ status_callback

`using kcenon::pacs::ai::ai_service_connector::status_callback = std::function<void(const inference_status&)>`

Callback type for status updates.

Definition at line 318 of file ai_service_connector.h.

Constructor & Destructor Documentation

◆ ai_service_connector() [1/2]

`ai_service_connector() = delete` (private)

◆ ~ai_service_connector()

`~ai_service_connector() = delete` (private)

◆ ai_service_connector() [2/2]

`ai_service_connector(const ai_service_connector &) = delete` (private)

Member Function Documentation

◆ cancel()

`[[nodiscard]] static auto cancel(const std::string &job_id) -> Result<std::monostate>`

Cancel an inference job.

Attempts to cancel a pending or running job. Jobs that have already completed cannot be cancelled.

- Parameters
  - `job_id`: the job identifier to cancel
- Returns
  - `Result` indicating success or failure
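The cancellation rule reduces to a state check. This sketch assumes a hypothetical `job_state` enum and `try_cancel` helper; the status values carried by `inference_status` are not listed in this reference, and treating failed or already-cancelled jobs as non-cancellable is an assumption.

```cpp
// Hypothetical job states; the real inference_status fields are not shown here.
enum class job_state { pending, running, completed, failed, cancelled };

// Assumption: only pending or running jobs are cancellable; any other state
// (completed, failed, or already cancelled) is treated as finished.
bool try_cancel(job_state& state) {
    if (state == job_state::pending || state == job_state::running) {
        state = job_state::cancelled;
        return true;
    }
    return false;  // finished jobs keep their current state
}
```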

◆ check_health()

`[[nodiscard]] static auto check_health() -> bool`

Check AI service health.

- Returns
  - `true` if the service is healthy and accessible, `false` otherwise

◆ check_status()

`[[nodiscard]] static auto check_status(const std::string &job_id) -> Result<inference_status>`

Check the status of an inference job.

- Parameters
  - `job_id`: the job identifier returned by `request_inference()`
- Returns
  - `Result` containing the current status on success

◆ get_config()

`[[nodiscard]] static auto get_config() -> const ai_service_config &`

Get the current configuration.

- Returns
  - The current AI service configuration

◆ get_latency()

`[[nodiscard]] static auto get_latency() -> std::optional<std::chrono::milliseconds>`

Get the current latency to the AI service.

- Returns
  - Round-trip time to the service, or `std::nullopt` if unavailable
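One plausible way to produce an optional round-trip time is to time a probe request with a monotonic clock. In this sketch, `measure_latency` and the `probe` callable are hypothetical; how the connector actually measures latency is not documented here.

```cpp
#include <chrono>
#include <functional>
#include <optional>

// Times one call to `probe` (a hypothetical request that returns true on success).
// Returns std::nullopt when the probe fails, mirroring "nullopt if unavailable".
std::optional<std::chrono::milliseconds>
measure_latency(const std::function<bool()>& probe) {
    const auto start = std::chrono::steady_clock::now();  // monotonic clock
    if (!probe()) {
        return std::nullopt;
    }
    const auto elapsed = std::chrono::steady_clock::now() - start;
    return std::chrono::duration_cast<std::chrono::milliseconds>(elapsed);
}
```

`std::chrono::steady_clock` is used rather than `system_clock` because it is not affected by wall-clock adjustments during the measurement.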

◆ get_model_info()

`[[nodiscard]] static auto get_model_info(const std::string &model_id) -> Result<model_info>`

Get information about a specific model.

- Parameters
  - `model_id`: the model identifier
- Returns
  - `Result` containing the model information

◆ initialize()

`[[nodiscard]] static auto initialize(const ai_service_config &config) -> Result<std::monostate>`

Initialize the AI service connector.

Must be called before any other operation. Sets up the HTTP client, configures authentication, and validates the connection.

- Parameters
  - `config`: configuration options
- Returns
  - `Result` indicating success or an initialization error

◆ is_initialized()

`[[nodiscard]] static auto is_initialized() noexcept -> bool`

Check whether the connector is initialized.

- Returns
  - `true` if initialized, `false` otherwise

◆ list_active_jobs()

`[[nodiscard]] static auto list_active_jobs() -> Result<std::vector<inference_status>>`

List active inference jobs.

Returns all jobs that are currently pending or running.

- Returns
  - `Result` containing the list of active job statuses
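Selecting the active subset is a filter on job state. The sketch uses hypothetical `job_state` and `job_status` types as stand-ins for `inference_status`, whose fields are not shown in this reference.

```cpp
#include <algorithm>
#include <iterator>
#include <string>
#include <vector>

// Hypothetical job states and status record; the real inference_status is not shown here.
enum class job_state { pending, running, completed, failed, cancelled };

struct job_status {
    std::string job_id;
    job_state state;
};

// Keeps only jobs that are still pending or running, preserving their order.
std::vector<job_status> active_jobs(const std::vector<job_status>& all) {
    std::vector<job_status> active;
    std::copy_if(all.begin(), all.end(), std::back_inserter(active),
                 [](const job_status& s) {
                     return s.state == job_state::pending ||
                            s.state == job_state::running;
                 });
    return active;
}
```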

◆ list_models()

`[[nodiscard]] static auto list_models() -> Result<std::vector<model_info>>`

List available AI models.

- Returns
  - `Result` containing the list of available models

◆ operator=()

`ai_service_connector &operator=(const ai_service_connector &) = delete` (private)

◆ request_inference()

`[[nodiscard]] static auto request_inference(const inference_request &request) -> Result<std::string>`

Request AI inference for a study.

Submits a study for AI processing and returns a job ID for tracking.

- Parameters
  - `request`: inference request parameters
- Returns
  - `Result` containing the job ID on success, or an error on failure
- Note
  - The study must be accessible to the AI service, either via DICOM C-MOVE or via DICOMweb WADO-RS.

◆ shutdown()

`static void shutdown()`

Shut down the AI service connector.

Cancels pending requests and releases resources.

◆ update_credentials()

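`[[nodiscard]] static auto update_credentials(authentication_type auth_type, const std::string &credentials) -> Result<std::monostate>`

Update authentication credentials.

- Parameters
  - `auth_type`: the new authentication type
  - `credentials`: the new credentials (API key, token, or `username:password`)
- Returns
  - `Result` indicating success or failure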
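When `credentials` holds the `username:password` form, it has to be split somewhere. This hypothetical `split_basic_credentials` helper splits at the first colon, following HTTP Basic authentication (RFC 7617), where the user-id may not contain a colon but the password may. Whether the library parses or validates the string this way is an assumption.

```cpp
#include <optional>
#include <string>
#include <utility>

// Hypothetical helper: splits "username:password" at the first ':'.
// Passwords may contain ':', so only the first occurrence is the separator.
std::optional<std::pair<std::string, std::string>>
split_basic_credentials(const std::string& credentials) {
    const auto pos = credentials.find(':');
    if (pos == std::string::npos || pos == 0) {
        return std::nullopt;  // no separator, or empty username
    }
    return std::make_pair(credentials.substr(0, pos), credentials.substr(pos + 1));
}
```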

◆ wait_for_completion()

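`[[nodiscard]] static auto wait_for_completion(const std::string &job_id, std::chrono::milliseconds timeout = std::chrono::minutes{30}, status_callback callback = nullptr) -> Result<inference_status>`

Wait for a job to complete.

Blocks until the job completes, fails, or the timeout expires.

- Parameters
  - `job_id`: the job identifier to wait for
  - `timeout`: maximum time to wait (default: 30 minutes)
  - `callback`: optional `status_callback` invoked on status updates (default: `nullptr`, no callback)
- Returns
  - `Result` containing the final status on completion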
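A blocking wait with a deadline and a progress callback is commonly implemented by polling. The sketch below assumes a hypothetical `poll_status` callable in place of `check_status()`, hypothetical `demo_status` and `job_state` types, and a 10 ms poll interval; it returns `std::nullopt` on timeout where the library returns an error `Result`. The library may wait differently.

```cpp
#include <chrono>
#include <functional>
#include <optional>
#include <string>
#include <thread>

// Hypothetical status record; the real inference_status is not shown here.
enum class job_state { pending, running, completed, failed, cancelled };

struct demo_status {
    std::string job_id;
    job_state state;
};

using status_callback = std::function<void(const demo_status&)>;

// Polls until the job reaches a terminal state or the deadline passes.
std::optional<demo_status> wait_for_completion(
    const std::function<demo_status()>& poll_status,
    std::chrono::milliseconds timeout,
    const status_callback& callback = nullptr,
    std::chrono::milliseconds poll_interval = std::chrono::milliseconds{10}) {
    const auto deadline = std::chrono::steady_clock::now() + timeout;
    while (true) {
        demo_status status = poll_status();
        if (callback) {
            callback(status);  // report every observed status
        }
        if (status.state != job_state::pending &&
            status.state != job_state::running) {
            return status;  // completed, failed, or cancelled
        }
        if (std::chrono::steady_clock::now() >= deadline) {
            return std::nullopt;  // timed out while the job is still active
        }
        std::this_thread::sleep_for(poll_interval);
    }
}
```

In this sketch the callback fires for every observed status, including the terminal one, so a caller can log progress without issuing its own status queries.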

Member Data Documentation

◆ pimpl_

`static std::unique_ptr<impl> pimpl_` (static, private)

Definition at line 490 of file ai_service_connector.h.

The documentation for this class was generated from the following file:

- include/kcenon/pacs/ai/ai_service_connector.h