{
    std::cout << "=== Alert Pipeline Example ===" << std::endl;
    std::cout << std::endl;

    std::cout << "1. Configuring AlertManager" << std::endl;
    std::cout << " -------------------------" << std::endl;

    // ...

    std::cout << " Evaluation interval: 1s" << std::endl;
    std::cout << " Repeat interval: 5s" << std::endl;
    std::cout << " Grouping enabled: true" << std::endl;
    std::cout << std::endl;

    // ...
        std::cerr << "Invalid configuration!" << std::endl;
        return 1;
    }

    // ...

    std::cout << "2. Creating Alert Rules" << std::endl;
    std::cout << " ---------------------" << std::endl;

    auto cpu_rule = std::make_shared<alert_rule>("high_cpu_usage");
    cpu_rule->set_metric_name("cpu_usage")
        .set_severity(alert_severity::critical)
        .set_summary("CPU usage is critically high")
        .set_description("CPU usage exceeded 80% threshold")
        .add_label("team", "infrastructure")
        .add_label("service", "compute")
        .set_evaluation_interval(1000ms)
        .set_for_duration(2000ms)
        .set_repeat_interval(5000ms)
        // ...

    if (auto result = manager.add_rule(cpu_rule); result.is_err()) {
        std::cerr << "Failed to add CPU rule: " << result.error().message << std::endl;
        return 1;
    }
    std::cout << " Added rule: high_cpu_usage (threshold > 80%)" << std::endl;

    auto memory_rule = std::make_shared<alert_rule>("low_memory");
    memory_rule->set_metric_name("memory_available")
        .set_severity(alert_severity::warning)
        .set_summary("Available memory is low")
        .set_description("Available memory dropped below 10%")
        .add_label("team", "infrastructure")
        .add_label("service", "memory")
        .set_evaluation_interval(1000ms)
        .set_for_duration(1000ms)
        // ...

    if (auto result = manager.add_rule(memory_rule); result.is_err()) {
        std::cerr << "Failed to add memory rule: " << result.error().message << std::endl;
        return 1;
    }
    std::cout << " Added rule: low_memory (threshold < 10%)" << std::endl;

    auto io_rule_group = std::make_shared<alert_rule_group>("disk_io_group");

    auto disk_read_rule = std::make_shared<alert_rule>("high_disk_read");
    disk_read_rule->set_metric_name("disk_read_iops")
        .set_severity(alert_severity::warning)
        .set_summary("Disk read IOPS is high")
        .add_label("team", "storage")
        // ...

    auto disk_write_rule = std::make_shared<alert_rule>("high_disk_write");
    disk_write_rule->set_metric_name("disk_write_iops")
        .set_severity(alert_severity::warning)
        .set_summary("Disk write IOPS is high")
        .add_label("team", "storage")
        // ...

    io_rule_group->add_rule(disk_read_rule);
    io_rule_group->add_rule(disk_write_rule);
    io_rule_group->set_common_interval(2000ms);

    if (auto result = manager.add_rule_group(io_rule_group); result.is_err()) {
        std::cerr << "Failed to add IO rule group" << std::endl;
        return 1;
    }
    std::cout << " Added rule group: disk_io_group (2 rules)" << std::endl;

    auto rules = manager.get_rules();
    std::cout << " Total rules configured: " << rules.size() << std::endl;
    std::cout << std::endl;

    std::cout << "3. Setting Up Notifiers" << std::endl;
    std::cout << " ---------------------" << std::endl;

    auto log_notifier_ptr = std::make_shared<log_notifier>("console_logger");
    if (auto result = manager.add_notifier(log_notifier_ptr); result.is_err()) {
        std::cerr << "Failed to add log notifier" << std::endl;
        return 1;
    }
    std::cout << " Added notifier: console_logger (log_notifier)" << std::endl;

    auto callback_notifier_ptr = std::make_shared<callback_notifier>(
        "custom_handler",
        // ...
            std::cout << " [CALLBACK] Alert received: " << a.name
            // ...
        }
    );
    if (auto result = manager.add_notifier(callback_notifier_ptr); result.is_err()) {
        std::cerr << "Failed to add callback notifier" << std::endl;
        return 1;
    }
    std::cout << " Added notifier: custom_handler (callback_notifier)" << std::endl;
    std::cout << std::endl;

    std::cout << "4. Configuring Alert Aggregator" << std::endl;
    std::cout << " -----------------------------" << std::endl;

    // ...

    // ...
    std::cout << " Group by labels: team, service" << std::endl;
    std::cout << " Group wait: 1s, interval: 3s" << std::endl;
    std::cout << std::endl;

    std::cout << "5. Setting Up Cooldown Tracker" << std::endl;
    std::cout << " ----------------------------" << std::endl;

    // ...
    std::cout << " Default cooldown: 3s" << std::endl;

    cooldown.set_cooldown("high_cpu_usage{}", 1000ms);
    std::cout << " Custom cooldown for high_cpu_usage: 1s" << std::endl;
    std::cout << std::endl;

    std::cout << "6. Setting Up Alert Deduplicator" << std::endl;
    std::cout << " ------------------------------" << std::endl;

    // ...
    std::cout << " Deduplication cache duration: 10s" << std::endl;
    std::cout << std::endl;

    std::cout << "7. Configuring Alert Inhibition" << std::endl;
    std::cout << " -----------------------------" << std::endl;

    // ...

    // ...
    critical_inhibits_warning.name = "critical_inhibits_warning";
    // ...
    critical_inhibits_warning.equal = {"team"};

    inhibitor.add_rule(critical_inhibits_warning);
    std::cout << " Added rule: critical alerts inhibit warning alerts (same team)" << std::endl;
    std::cout << std::endl;

    std::cout << "8. Simulating Alert Lifecycle" << std::endl;
    std::cout << " ---------------------------" << std::endl;

    if (auto result = manager.start(); result.is_err()) {
        std::cerr << "Failed to start alert manager: " << result.error().message << std::endl;
        return 1;
    }
    std::cout << " Alert manager started" << std::endl;
    std::cout << std::endl;

    std::cout << " Simulating metric values..." << std::endl;
    std::cout << std::endl;

    std::cout << " [Phase 1] Normal operation (CPU: 50%, Memory: 80%)" << std::endl;
    manager.process_metric("cpu_usage", 50.0);
    manager.process_metric("memory_available", 80.0);
    // ...
    std::this_thread::sleep_for(1500ms);

    std::cout << std::endl;
    std::cout << " [Phase 2] CPU spike detected (CPU: 85%)" << std::endl;
    manager.process_metric("cpu_usage", 85.0);
    // ...
    std::this_thread::sleep_for(1500ms);

    std::cout << std::endl;
    std::cout << " [Phase 3] CPU remains high (CPU: 90%)" << std::endl;
    manager.process_metric("cpu_usage", 90.0);
    // ...
    std::this_thread::sleep_for(1500ms);

    std::cout << std::endl;
    std::cout << " [Phase 4] Memory drops (Memory: 5%)" << std::endl;
    manager.process_metric("memory_available", 5.0);
    // ...

    auto active = manager.get_active_alerts();
    // ...
        for (const auto& a : active) {
            // ...
                std::cout << " Note: " << a.name << " would be inhibited" << std::endl;
            }
        }
    }
    std::this_thread::sleep_for(1500ms);

    std::cout << std::endl;
    std::cout << " [Phase 5] CPU normalizes (CPU: 40%)" << std::endl;
    manager.process_metric("cpu_usage", 40.0);
    // ...
    std::this_thread::sleep_for(1500ms);

    std::cout << std::endl;
    std::cout << " [Phase 6] Memory recovers (Memory: 50%)" << std::endl;
    manager.process_metric("memory_available", 50.0);
    // ...
    std::cout << std::endl;

    std::cout << "9. Alert Grouping Demonstration" << std::endl;
    std::cout << " -----------------------------" << std::endl;

    // ...
    alert1.labels.set("team", "infrastructure");
    alert1.labels.set("service", "compute");
    alert1.severity = alert_severity::warning;
    alert1.state = alert_state::firing;
    alert1.value = 85.0;

    // ...
    alert2.labels.set("team", "infrastructure");
    alert2.labels.set("service", "compute");
    alert2.severity = alert_severity::warning;
    alert2.state = alert_state::firing;
    alert2.value = 92.0;

    // ...
    alert3.labels.set("team", "infrastructure");
    alert3.labels.set("service", "memory");
    alert3.severity = alert_severity::critical;
    alert3.state = alert_state::firing;
    alert3.value = 5.0;

    std::string group1 = aggregator.add_alert(alert1);
    std::string group2 = aggregator.add_alert(alert2);
    std::string group3 = aggregator.add_alert(alert3);

    std::cout << " Added 3 alerts to aggregator" << std::endl;
    std::cout << " Total groups: " << aggregator.group_count() << std::endl;
    std::cout << " Total alerts: " << aggregator.total_alert_count() << std::endl;

    std::this_thread::sleep_for(1500ms);

    auto ready_groups = aggregator.get_ready_groups();
    std::cout << " Ready groups: " << ready_groups.size() << std::endl;
    for (const auto& group : ready_groups) {
        std::cout << " - Group: " << group.group_key
                  << " (alerts: " << group.size()
                  // ...
                  << ")" << std::endl;
        aggregator.mark_sent(group.group_key);
    }
    std::cout << std::endl;

    std::cout << "10. Cooldown and Deduplication Check" << std::endl;
    std::cout << " ----------------------------------" << std::endl;

    std::string test_fingerprint = "test_alert{}";

    if (!cooldown.is_in_cooldown(test_fingerprint)) {
        std::cout << " First notification sent for: " << test_fingerprint << std::endl;
        cooldown.record_notification(test_fingerprint);
    }

    if (cooldown.is_in_cooldown(test_fingerprint)) {
        auto remaining = cooldown.remaining_cooldown(test_fingerprint);
        std::cout << " In cooldown, remaining: "
                  << std::chrono::duration_cast<std::chrono::milliseconds>(remaining).count()
                  << "ms" << std::endl;
    }

    // ...
    dup_alert.state = alert_state::firing;

    bool is_dup1 = deduplicator.is_duplicate(dup_alert);
    std::cout << " First occurrence duplicate check: "
              << (is_dup1 ? "duplicate" : "new") << std::endl;

    bool is_dup2 = deduplicator.is_duplicate(dup_alert);
    std::cout << " Second occurrence duplicate check: "
              << (is_dup2 ? "duplicate" : "new") << std::endl;

    dup_alert.state = alert_state::resolved;
    bool is_dup3 = deduplicator.is_duplicate(dup_alert);
    std::cout << " After state change duplicate check: "
              << (is_dup3 ? "duplicate" : "new") << std::endl;
    std::cout << std::endl;

    std::cout << "11. Cleanup" << std::endl;
    std::cout << " -------" << std::endl;

    if (auto result = manager.stop(); result.is_err()) {
        std::cerr << "Failed to stop alert manager: " << result.error().message << std::endl;
        return 1;
    }
    std::cout << " Alert manager stopped" << std::endl;

    auto metrics = manager.get_metrics();
    std::cout << " Final metrics:" << std::endl;
    std::cout << " Rules evaluated: " << metrics.rules_evaluated << std::endl;
    std::cout << " Alerts created: " << metrics.alerts_created << std::endl;
    std::cout << " Alerts resolved: " << metrics.alerts_resolved << std::endl;
    std::cout << " Alerts suppressed: " << metrics.alerts_suppressed << std::endl;
    std::cout << " Notifications sent: " << metrics.notifications_sent << std::endl;
    std::cout << std::endl;

    aggregator.cleanup();
    std::cout << " Aggregator cleaned up" << std::endl;

    deduplicator.reset();
    std::cout << " Deduplicator reset" << std::endl;

    cooldown.reset();
    std::cout << " Cooldown tracker reset" << std::endl;
    std::cout << std::endl;

    std::cout << "=== Alert Pipeline Example Completed ===" << std::endl;

    return 0;
}

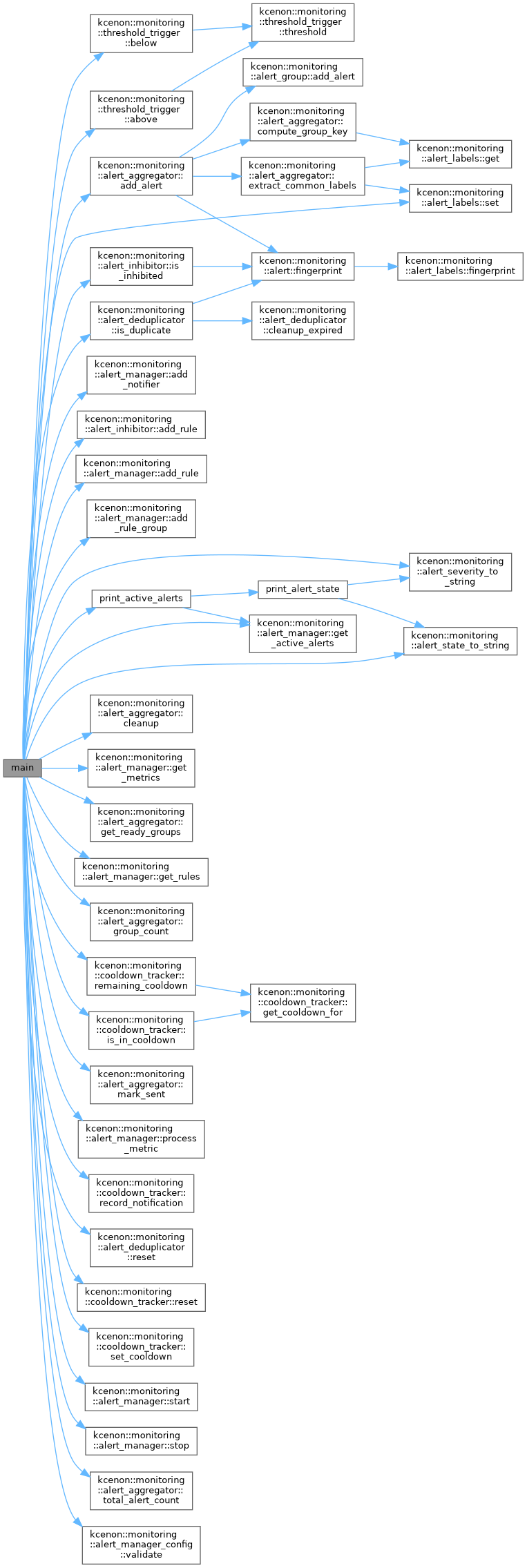

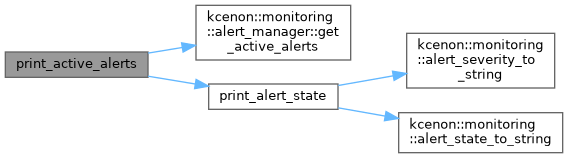

void print_active_alerts(const alert_manager &manager)

Groups and deduplicates alerts.

Deduplicates alerts based on fingerprint.
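The duplicate checks in step 10 of the example can be pictured with a small stand-in. The sketch below is not the library's deduplicator: `deduplicator_sketch` and `alert_state_sketch` are hypothetical names, the fingerprint is passed as a plain string, and the cache duration printed in step 6 is ignored. It assumes that an alert counts as a duplicate only when the same fingerprint was last seen in the same state.

```cpp
#include <cassert>
#include <string>
#include <unordered_map>

// Hypothetical stand-in for the library's alert_state enum.
enum class alert_state_sketch { pending, firing, resolved };

// Hypothetical deduplicator: remembers the last state seen per fingerprint.
// Cache expiry is deliberately left out of this sketch.
class deduplicator_sketch {
public:
    // Returns true when the fingerprint was already seen in this state.
    // Otherwise records the new state and returns false.
    bool is_duplicate(const std::string& fingerprint, alert_state_sketch state) {
        auto it = last_state_.find(fingerprint);
        if (it != last_state_.end() && it->second == state) {
            return true;
        }
        last_state_[fingerprint] = state;
        return false;
    }

    // Forget everything seen so far.
    void reset() { last_state_.clear(); }

private:
    std::unordered_map<std::string, alert_state_sketch> last_state_;
};
```

Under these assumptions a first check is new, an immediate repeat is a duplicate, and a state change from firing to resolved is treated as new again.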

Manages alert inhibition rules.

bool is_inhibited(const alert &target, const std::vector< alert > &active_alerts) const

Check if an alert is inhibited by any active alerts.

void add_rule(const inhibition_rule &rule)

Add an inhibition rule.
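To make the inhibition check concrete, here is a minimal sketch of how a rule with `source_match`, `target_match`, and `equal` fields might be evaluated. All types and functions ending in `_sketch` are hypothetical, labels are modeled as a plain `std::map`, and severity is carried as a label for simplicity. It also assumes that a label listed in `equal` must be present on both alerts; the library may decide these points differently.

```cpp
#include <cassert>
#include <map>
#include <string>
#include <vector>

using labels_sketch = std::map<std::string, std::string>;

// Hypothetical, simplified alert: a name plus labels.
struct alert_sketch {
    std::string name;
    labels_sketch labels;
};

// Field names mirror the documented inhibition_rule members.
struct inhibition_rule_sketch {
    std::string name;
    labels_sketch source_match;      // labels the inhibiting alert must have
    labels_sketch target_match;      // labels the inhibited alert must have
    std::vector<std::string> equal;  // labels that must be equal on both
};

// True when every key/value pair in `required` appears in `labels`.
bool has_all_labels(const labels_sketch& labels, const labels_sketch& required) {
    for (const auto& kv : required) {
        auto it = labels.find(kv.first);
        if (it == labels.end() || it->second != kv.second) return false;
    }
    return true;
}

// True when both label sets carry the same value for every key in `keys`.
// Assumption: a key missing on either side counts as a mismatch.
bool equal_on(const labels_sketch& a, const labels_sketch& b,
              const std::vector<std::string>& keys) {
    for (const auto& key : keys) {
        auto ia = a.find(key);
        auto ib = b.find(key);
        if (ia == a.end() || ib == b.end() || ia->second != ib->second) return false;
    }
    return true;
}

// The target is inhibited if it matches target_match and some active alert
// matches source_match while agreeing with the target on the `equal` labels.
bool is_inhibited_sketch(const inhibition_rule_sketch& rule,
                         const alert_sketch& target,
                         const std::vector<alert_sketch>& active) {
    if (!has_all_labels(target.labels, rule.target_match)) return false;
    for (const auto& source : active) {
        if (has_all_labels(source.labels, rule.source_match) &&
            equal_on(source.labels, target.labels, rule.equal)) {
            return true;
        }
    }
    return false;
}
```

With a rule whose source is `severity=critical`, whose target is `severity=warning`, and whose `equal` list is `{"team"}`, a warning alert is suppressed only while a critical alert from the same team is active.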

Central coordinator for the alert pipeline.

Tracks cooldown periods for alert notifications.
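The cooldown calls used in steps 5 and 10 (`set_cooldown`, `record_notification`, `is_in_cooldown`, `remaining_cooldown`, `reset`) suggest a per-fingerprint timer with an optional override. The following is a rough sketch under that reading, not the library's implementation. `cooldown_tracker_sketch` is a hypothetical class, and unlike the calls in the listing its methods take an explicit `now` argument so the behavior can be checked without sleeping.

```cpp
#include <cassert>
#include <chrono>
#include <string>
#include <unordered_map>

// Hypothetical cooldown tracker keyed by alert fingerprint.
class cooldown_tracker_sketch {
public:
    using clock = std::chrono::steady_clock;
    using ms = std::chrono::milliseconds;

    explicit cooldown_tracker_sketch(ms default_cooldown)
        : default_cooldown_(default_cooldown) {}

    // Override the default cooldown for one fingerprint.
    void set_cooldown(const std::string& fingerprint, ms period) {
        overrides_[fingerprint] = period;
    }

    // Remember when a notification for this fingerprint was last sent.
    void record_notification(const std::string& fingerprint, clock::time_point now) {
        last_sent_[fingerprint] = now;
    }

    // Time left until the next notification is allowed (zero if none).
    ms remaining_cooldown(const std::string& fingerprint, clock::time_point now) const {
        auto sent = last_sent_.find(fingerprint);
        if (sent == last_sent_.end()) return ms(0);
        auto over = overrides_.find(fingerprint);
        ms period = (over != overrides_.end()) ? over->second : default_cooldown_;
        ms elapsed = std::chrono::duration_cast<ms>(now - sent->second);
        return elapsed >= period ? ms(0) : period - elapsed;
    }

    bool is_in_cooldown(const std::string& fingerprint, clock::time_point now) const {
        return remaining_cooldown(fingerprint, now) > ms(0);
    }

    // Assumption: reset clears recorded notifications but keeps overrides.
    void reset() { last_sent_.clear(); }

private:
    ms default_cooldown_;
    std::unordered_map<std::string, ms> overrides_;
    std::unordered_map<std::string, clock::time_point> last_sent_;
};
```

Passing the time point in, rather than reading the clock inside the class, is purely a choice made for this sketch to keep it deterministic.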

static std::shared_ptr< threshold_trigger > below(double threshold)

Create trigger for value < threshold.

static std::shared_ptr< threshold_trigger > above(double threshold)

Create trigger for value > threshold.
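As an illustration of the two factory functions, the sketch below shows one way a threshold trigger with strict comparisons could look. Only the names `above` and `below` and their `value > threshold` / `value < threshold` meaning come from the reference; the class name `threshold_trigger_sketch` and the `evaluate` method are assumptions made for this example.

```cpp
#include <cassert>
#include <memory>

// Hypothetical stand-in for threshold_trigger.
class threshold_trigger_sketch {
public:
    // Fires when value > threshold.
    static std::shared_ptr<threshold_trigger_sketch> above(double threshold) {
        return std::shared_ptr<threshold_trigger_sketch>(
            new threshold_trigger_sketch(threshold, true));
    }

    // Fires when value < threshold.
    static std::shared_ptr<threshold_trigger_sketch> below(double threshold) {
        return std::shared_ptr<threshold_trigger_sketch>(
            new threshold_trigger_sketch(threshold, false));
    }

    // Assumed method name: returns true when the condition holds.
    // Both comparisons are strict, so a value equal to the threshold
    // does not fire in either direction.
    bool evaluate(double value) const {
        return fire_above_ ? value > threshold_ : value < threshold_;
    }

private:
    threshold_trigger_sketch(double threshold, bool fire_above)
        : threshold_(threshold), fire_above_(fire_above) {}

    double threshold_;
    bool fire_above_;
};
```

With the values used in the example, `above(80.0)` would fire for the 85% and 90% CPU samples but not for 50% or 40%, and `below(10.0)` would fire for the 5% memory sample.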

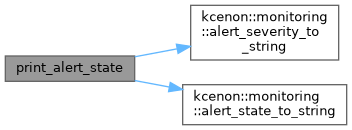

constexpr const char * alert_state_to_string(alert_state state) noexcept

Convert alert state to string.

constexpr const char * alert_severity_to_string(alert_severity severity) noexcept

Convert alert severity to string.

Configuration for alert aggregation.

std::chrono::milliseconds group_interval

Interval between group sends.

std::chrono::milliseconds group_wait

Initial wait before sending.

std::chrono::milliseconds resolve_timeout

Time before removing resolved.

std::vector< std::string > group_by_labels

Labels to group by.
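Grouping by labels can be understood as deriving one key per alert from the values of the configured labels. The helper below is a hypothetical sketch of that idea: `make_group_key_sketch` and the key format are invented for illustration, labels are modeled as a `std::map`, and a label missing from an alert contributes an empty value. The real `group_key` format is not shown in the reference and may differ.

```cpp
#include <cassert>
#include <map>
#include <string>
#include <vector>

using label_map_sketch = std::map<std::string, std::string>;

// Build a key such as "{team=infrastructure,service=compute}" from the
// labels named in group_by, in the order they are listed.
std::string make_group_key_sketch(const label_map_sketch& labels,
                                  const std::vector<std::string>& group_by) {
    std::string key = "{";
    bool first = true;
    for (const auto& name : group_by) {
        if (!first) key += ",";
        first = false;
        auto it = labels.find(name);
        key += name;
        key += "=";
        if (it != labels.end()) key += it->second;  // missing label: empty value
    }
    key += "}";
    return key;
}
```

Applied to the three alerts in step 9 with `group_by` set to `team` and `service`, the first two alerts share a key and the third (service `memory`) gets its own, which gives two groups.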

Key-value labels for alert identification and routing.

void set(const std::string &key, const std::string &value)

Add or update a label.

Configuration for the alert manager.

std::chrono::milliseconds resolve_timeout

Auto-resolve timeout.

std::chrono::milliseconds default_evaluation_interval

Default eval interval.

size_t max_alerts_per_rule

Max alerts per rule.

bool validate() const

Validate configuration.

std::chrono::milliseconds group_wait

Wait time before group send.

bool enable_grouping

Enable alert grouping.

std::chrono::milliseconds default_repeat_interval

Default repeat interval.

std::chrono::milliseconds group_interval

Group batch interval.

Core alert data structure.

alert_state state

Current state.

std::string name

Alert name/identifier.

Rule for inhibiting alerts based on other alerts.

std::vector< std::string > equal

Labels that must be equal on both.

alert_labels target_match

Labels that target alert must have.

alert_labels source_match

Labels that source alert must have.